2 Moving Beyond Linearity

Literature:

- Moving Beyond Linearity (ISL CH7)

Recall that complexity = also means lower interpretibility. This subject extents the linear models with the following:

- Polunomial Regression - where polynomials of the variables are added.

- Step Functions - where the x range is cut into k distinct regions to produce a qualitative variable. Hence also the name, piecewise constant function.

- Regression Splines - a combination / extensions of number one and two. Where polynomials functions are applied in specified regions of an X range.

- Smoothing Splines - Similar to the one above, but slightly different in the fitting process.

- Local Regression - Similar to regression splines, but these are able to overlap.

- Generalized Additive Models - allows to extent the model with several predictors.

2.1 Models Beyond Linearity

Notice that all approaches despite GAM are extensions of simple linear regression, as it only takes on one predictor variable.

2.1.1 Polynomial Regression

Can be defined by the following

\[\begin{equation} y_{i\ }=\ \beta_0+\beta_1x_i+\beta_2x_i^2+...+\ \beta_dx_i^d\ +\ \epsilon_i \tag{2.1} \end{equation}\]

Rules of thumb:

- We don’t take on more than 3 or 4 degrees of d, as that yields strange lines

Note that we can still use standard errors for coefficient estimates.

2.1.1.1 Beta coefficients and variance

Each beta coefficient has its own variance (just as in linear regression).

It can be defined by a matrix of j dimensions, e.g., if you have 5 betas (including beta 0) we can construct the correlation matrix.

Covariance matrix can be identified by \(\hat{C}\).

Generally we get point estimates, but it is also interesting to show the confidence intervals (using 2 standard errors).

Notice, that we cant really interprete beta coefficients as we do with linear regression, hence we dont have the same ability to do inference as the coefficients are missleading.

2.1.1.2 Application procedure

- Use

lm()orglm() - Use DV~poly(IV,degree)

- Perform CV with

cv.glment()/ aonva F-test to select degree- This is basically either visually selecting the degrees that are the best using CV or using an ANOVA to assess if the MSE are significantly different from each other, hence an ANOVA test. Regarding ANOVA, if there stop being significance, then significant changes, e.g., in a poly 8, where the previous polynomials was not significant, then we can also disregard the 8’th polynomial.

- Fit the selected model

- Look at

coef(summary(fit)) - Plot data and predictions with

predict() - Check residuals

- Interpret

The lecture shows exercise number 6 in chapter 7.

2.1.2 Step Functions

This is literally just fitting a constant in different bins, see example on page 269. This is also called discretizing x. Thus it is not polynoomial, but it is non linear.

It is often applied when we see, e.g., five year age bins, e.g., a 20-25 year old is expected to earn so and so much etc. But we can only really use it, when there are natural cuts, hence one must be considerate using the model.

Major disadvantage: If there are no natural breakpoints, then the model is likely to miss variance and also generalize too much.

Remember, that the steps reflect the average increase in Y for each step. Hence the first bin (range) is defined by \(\beta_0\), and can be regarded as the average of that range of x. Thus each coefficient of the ranges of x is to be understood as the average increase of response.

In other words, \(\beta_0\) is the reference level, where the following cuts reflect the average increase or decrease hereon.

Note that we can still use standard errors for coefficient estimates.

2.1.3 Regression Splines

2.1.3.1 Piecewise Polynomials

This is basically polynomial regression, where the coefficients apply to specified ranges of X. The points where the coefficients changes are called knots. Hence a cubic function will look like the following.

Notice, that a piecewise polynomial function with no knots, is merely a standard polynomial function.

\[\begin{equation} y_{i\ }=\ \beta_0+\beta_1x_i+\beta_2x_i^2+\ \beta_3x_i^3\ +\ \epsilon_i \tag{2.2} \end{equation}\]

Where it can be extended to be written for each range.

The coefficients can then be written with: \(\beta_{01},\beta_{11},\beta_{21}\) etc. and for the second set: \(\beta_{02},\beta_{12},\beta_{22}\)

And for each of the betas in all of the cuts, you add one degree of freedom.

Rule of thumb:

- The more knots, the more complex = the more variance capture and noise trailed, hence low model bias but large model variance.

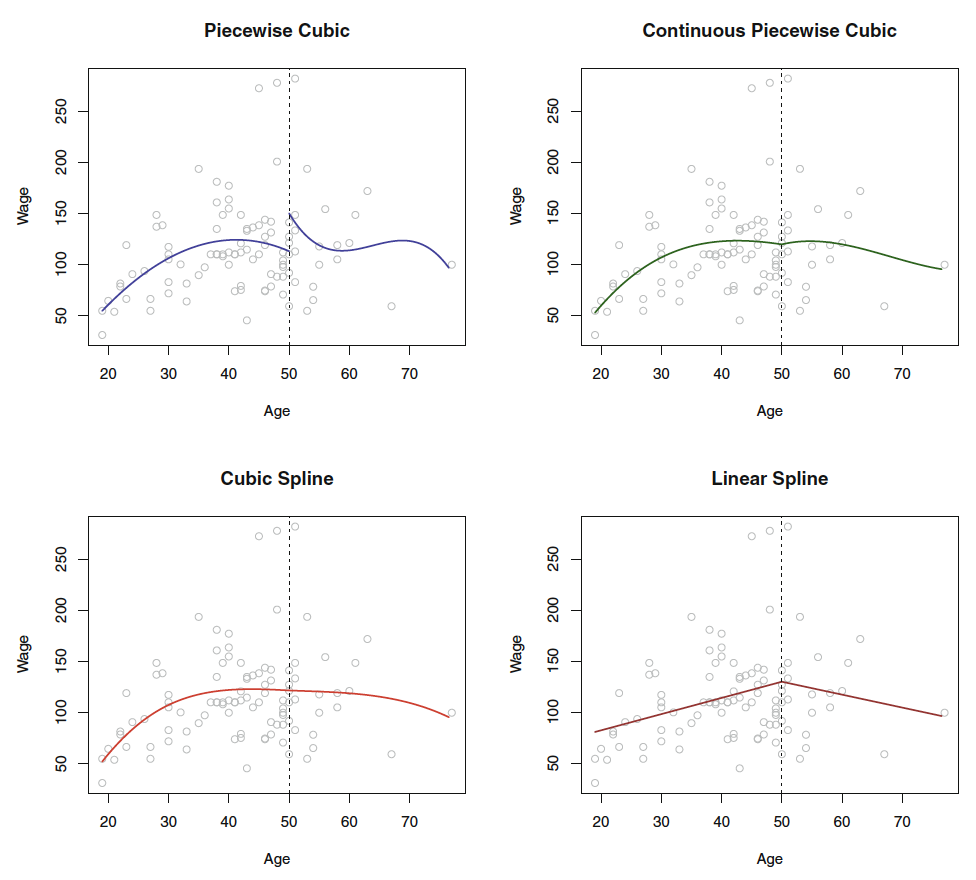

Figure 2.1: Piecewise Polynomials

2.1.3.2 Constraints and Splines

It is a spline when the piecewise polynomials have been imposed with restrictions for continouity and derivatives, so the knot don’t break or so to say, hence the knot will not be visible.

Figure 7.3 show how the splines look, as the top left window tell, the jump is rather odd. Hence, we can force the fit to be continuous, by imposing a constraint.

Notice, that it is a piecewise polynomial regression, when you merely fit polynomials onto bins of data. If you want to have the ends tied together, you impose contraints and thus created a spline.

We can further constrain the model, with adding derivatives of the functions, hence the first derivative and the second derivative (where it in this instance created linear splines, that is because we have added \(d-1\) i.e. 2 derivatives, if the function was to the power of 4, then we should have imposed 3 derivatives to achieve linearity in the splines.)

Hence, the linear spline can be defined by: It is piecewise splines of degree-d polynomials, with continuity in derivatives up to degree \(d-1\) at each knot.

Hence we have the following constraints:

- Continuity

- Derivatives

2.1.3.3 Choosing the number and location of the Knots

Choosing amount of knots? One may ask themself, how many degrees of freedom do you want to include in the model?

Amount of knots is therefore corresponding to amount of degrees of freedom.

We can let software estimate the best amount and the best locations. Here one can use:

- In-sample performance

- Out-of-sample performance, e.g. with CV, perhaps extent to K folds with K tests, to ensure, that each variable has been held out once.

This can be followed by visualizing the MSE for the different simulations with different amount of knots.

2.1.3.4 Degrees of freedom

You count the amount of coefficients in the piecewise polynomial. Then you deduct df as you impose restrictions.

e.g. with one knot, you impose continuity, then you deduct one 1 df. for each derivative that we impose we can subtract one df.

Therefore the example above: 8 df in the beginning less 1 for the cut and two for the two derivatives. Hence, we end up with 5 df.

2.1.3.5 Basis splines vs. natural splines

We see that in polynomial regression and splines we are able to apply the finction bs() and ns(), this stands for basis splines and natural splines. In principle they are very similar, although the natural spline just have an additional constraint in the regions below and above the first and last knot, where it is linear.

Notice, that a polynomial and step functions are basically basis functions

Natiral splies are often beneficiary as you often don’t have much information in these regions, hence a normal polynomial (basis functions) will become very wiggly, hence we seek for linearity here.

2.1.3.6 Comparison with Polynomial Regression

With regression splines we are able to introduce knots that account for variance as it slightly resets the model in each section, hence we can fit the model to the data without having to impose as much complexity as we would in normal polynomial regression.

Hence one often observes that regression splines have more stability than polynomial regression.

2.1.4 Smoothing Splines

In prediction this is slightly better than basic functions and natural splines.

This is basically attempting to find a model that captures the variance by a smoothing line. Doing so, we fit a very flexible model and impose restrictions upon this, to achieve a shrunken model, just as with Lasso and Ridge Regression. Thus, the smoothing (imposing restrictions) deals with overfitting.

Also as we havee discovered previously, that degrees of freedom is equivilant with amount of knots, e.g., in polynomial splines, then three knots in a cubic function leads to 6 degrees of freedom, hence an smooth spline with df = 6.8 can be said to approximately have 3 knots, but we will never really know.

This can be defined as a cubic spline with knot at every unique value of \(x_i\)

Hence we have the following model:

\[\begin{equation} RSS=\sum_{i=1}^n\left(y_i-g\left(x_i\right)\right)^{^2}+\lambda\int g''\left(t\right)^{^2}dt \tag{2.3} \end{equation}\]

i.e. Loss + Penalty

Recall that the penalty controls the curvature of the function

Where:

- We define model g(x)

- \((y_i-g(x_i))^{^2}\) = the loss, meaning the difference between the fitted model and the actual y’s

- \(\lambda\) = the tuning parameter, hence the restriction that we want to impose. If lambda is low, then much flexibility, if lambda is high, then low flexibility. Hence, controls the bias variance tradeoff.

- \(\int g''\left(t\right)^{^2}dt\) = a measure of how much \(g'(x)\) changes oer time. Hence, the higher we set \(\lambda\) the more imposed restrictions, meaning the smoother the model, as lambda gets closer to infinity, the model becomes linear.

2.1.4.1 Choosing optimal tuning parameter

The analytic LOOCV can be calculated, the procedure appears to be the same as for lasso and ridge regression. The book (page 279) describes this a bit in details. However it says that software is able to do this.

Basically what is done, is LOOCV and simulating different tuning parameters to assess what model that performs the best.

Notice, that in R we are not working with \(\lambda\), but we can control the df associated with lambda. Or we can just let the model choose.

With this, degrees of freedom is not the same as we are used to. This creates sparsity as we know from regularization.

We are not expected to explain this, but we should be able to interpret the results

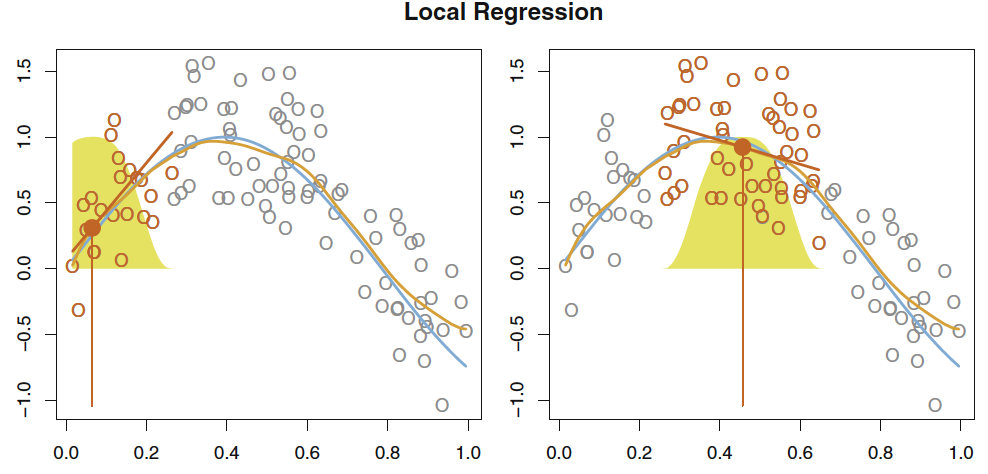

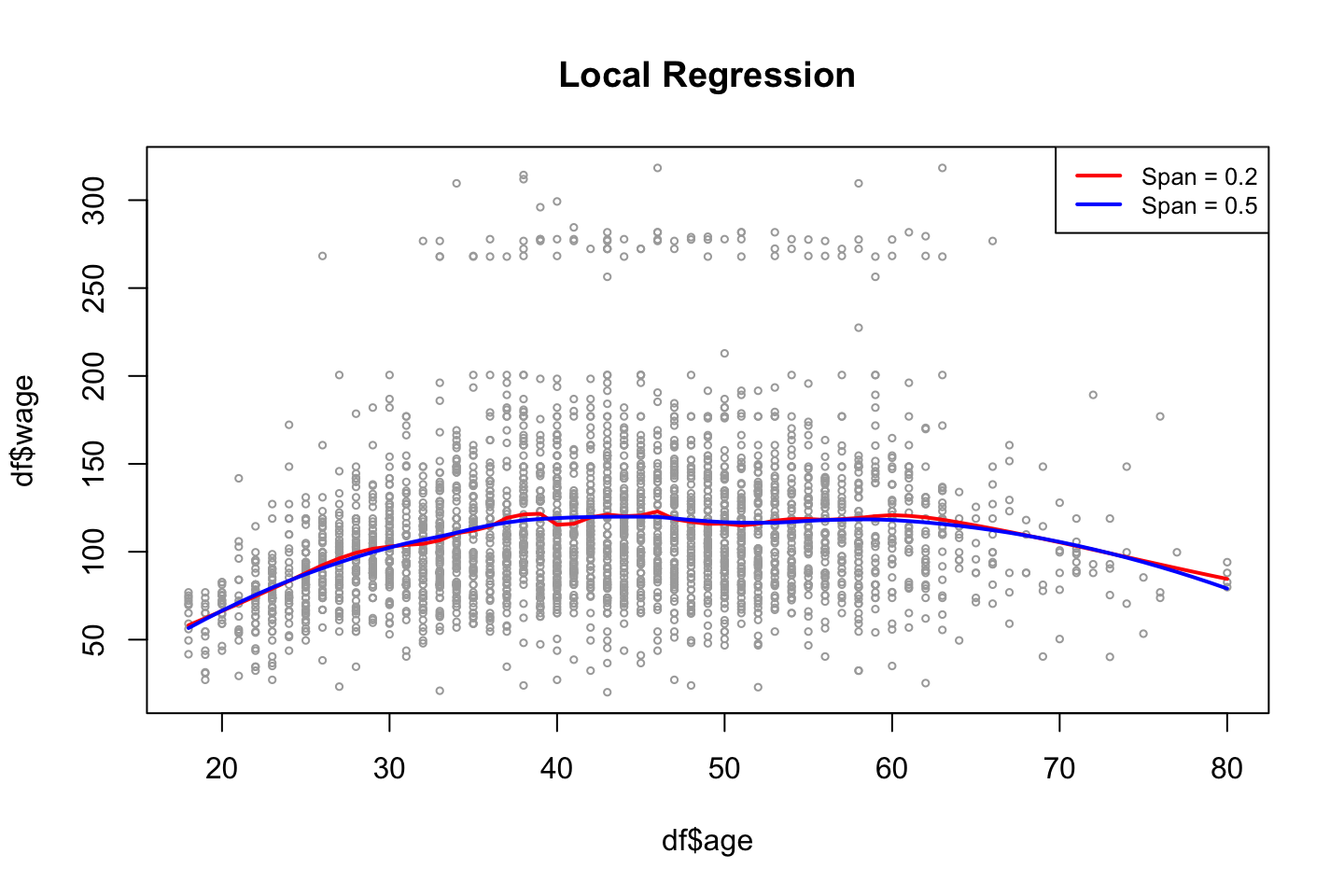

2.1.5 Local Regression

This i basically fitting a linear regression to each x, where s observations are included in the fitting procedure. Thus, one creates several fits, that are based on the observations weighted, where observations close to \(x_0\) (the center of the regression) are given the highest weight and then the weight is gradually decreasing.

This is often really good when you have outliers, as you define how big a neighborhood you want to evaluate (also called the span, e.g. span of 0.5 = 50% of the observations).

This can be visualized with:

Figure 2.2: Local regression

Doing local regression has the following procedure (algorithm)

- Gather the fraction \(s = k/n\) of training points whose \(x_i\) are closest to \(x_0\).

- Assign a weight \(K_{i0} = K(x_i, x_0)\) to each point in this neighborhood, so that the point furthest from x0 has weight zero, and the closest has the highest weight. All but these k nearest neighbors get weight zero.

- Fit a weighted least squares regression of the \(y_i\) on the \(x_i\) using the aforementioned weights, by finding \(\hat\beta_0\) and \(\hat\beta_1\) that minimize

\[\begin{equation} \sum_{i=1}^nK_{i0}\left(y_i-\beta_0-\beta_1x_i\right)^{^2} \tag{2.4} \end{equation}\]

- The fitted value at \(x_0\) is given by \(\hat{f}(x_0)=\hat\beta_0+\hat\beta_1x_0\)

Where we see how the model is

2.1.6 Generalized Additive Models

This can naturally both be applied in regression and classification problems, futher elaborated in the following.

It is called generalized, as the dependent variable can be both continuous (e.g. Gaussian) and categorical (e.g., binomial, Poisson, or other distributions) distributed

Additive = the model is adding different polynomials of the IDV toghether. Notice, as the model is additive, it does not account for interactions, then you have to specify the interactions.

Thus GAM is merely an approach to make a model, where we include the posibility of having non linear components. Hence we include more complex model (with the ability to trail the observations more than linear models).

But the advantage of linear regressions, are that we are able to quickly deduct the effects the variables. Although we dont always have a linear relationship, hence you can be forced to choose a more complex model. (see the R file “GAMs with discussion R”).

We have previously worked with non parametric models (e.g., KNN regression). GAM is in between linear regression and non parametric models.

That is the beuty of GAMs, as we preserve the ability of having transparancy in the model, despite it coming at a cost of worse prediction power than neural networks, but at such complex models, you are not able to deduct how the variables are interrelated, you can only say which are important and which are not.

What are the assumptions for GAMS??

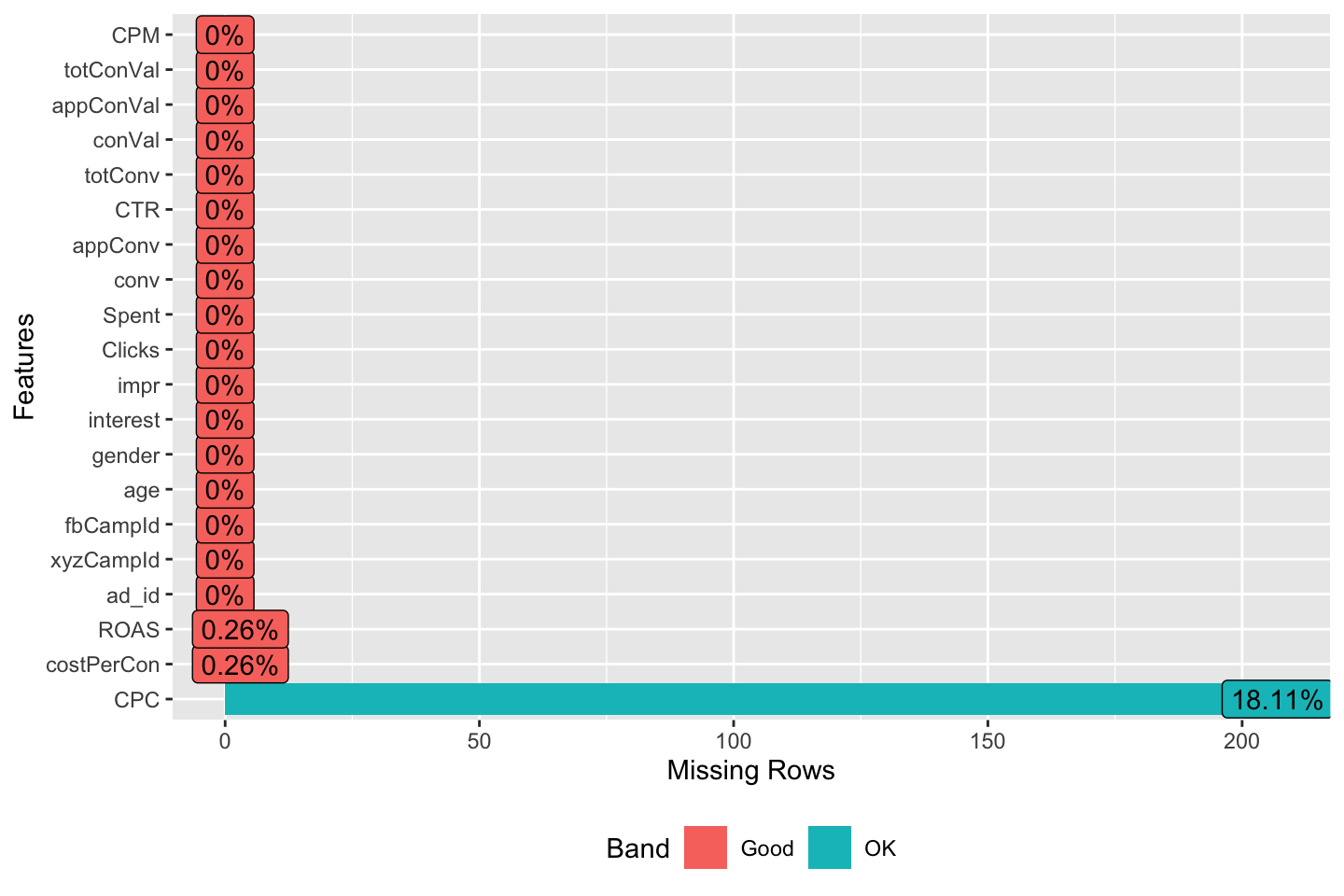

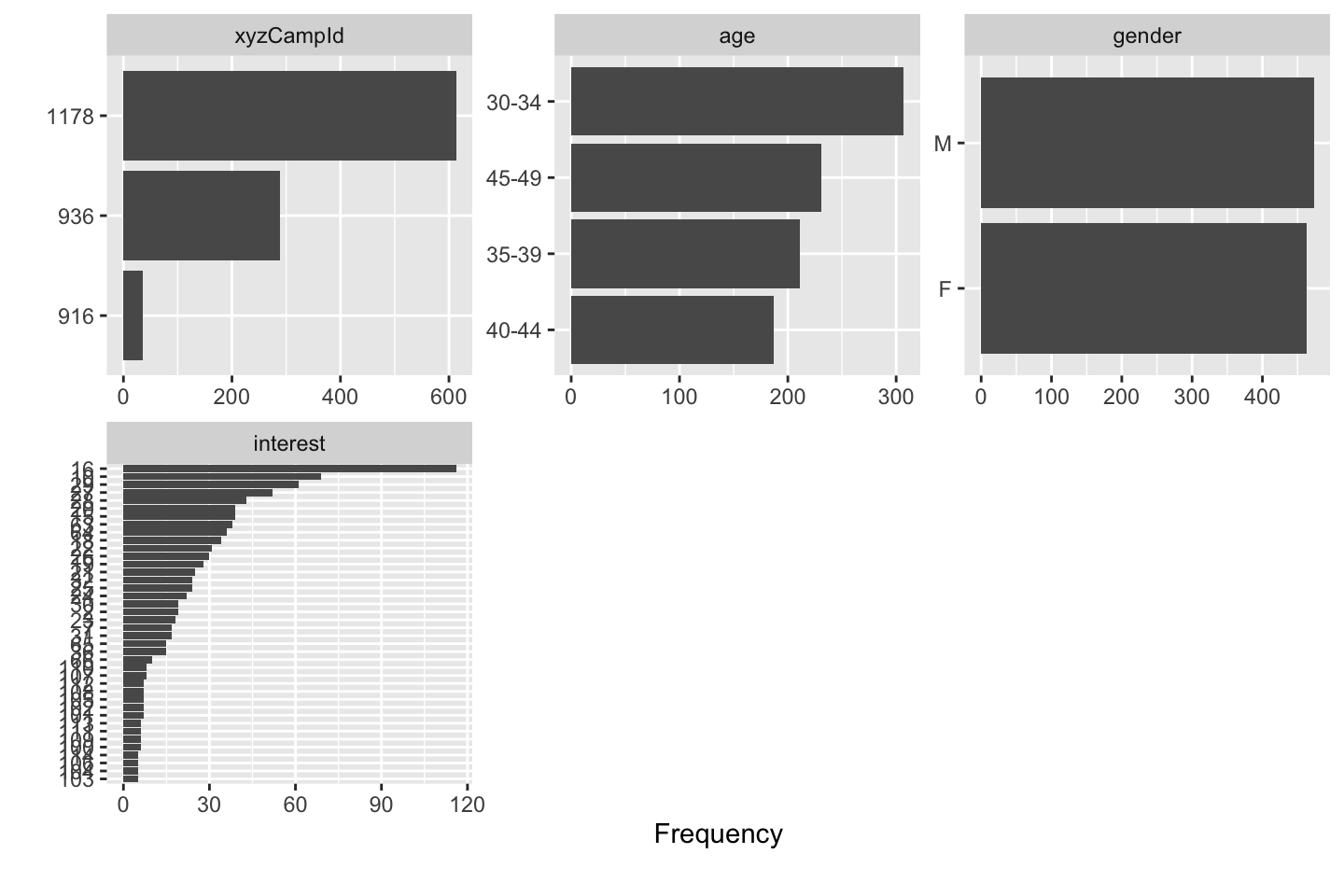

2.1.6.1 Feature Selection

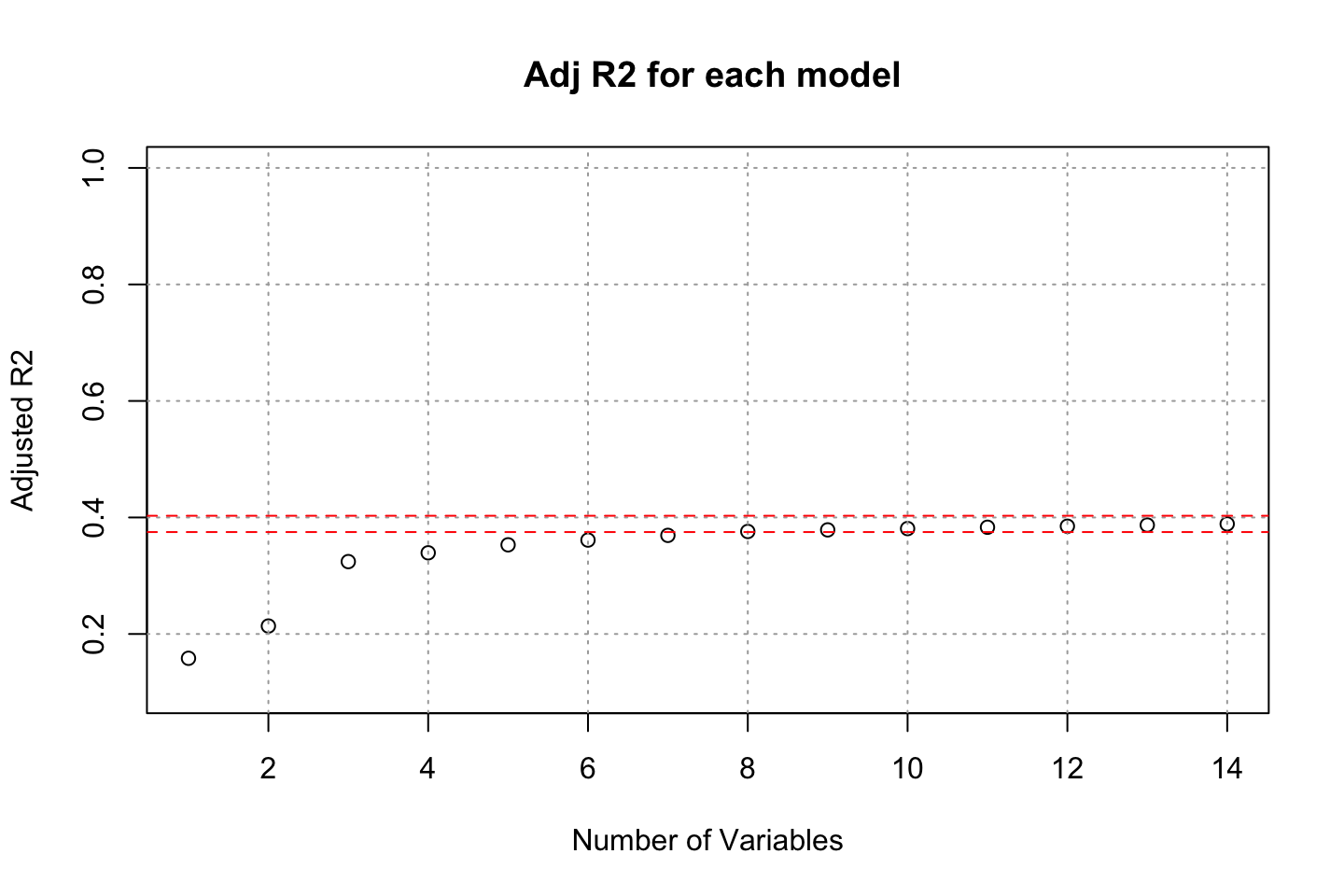

There are naturally a bunch of different approaches, we either manually assess this, running an automatic feature selection algorithm or both, see examples below. Also the casestudy in section 2.5 provides an example of all approaches to assess the features.

Feature selection approaches includes:

Generally looking at the variables “one by one,” to understand what features are important and to figure out how they contribute towards solving the problem.

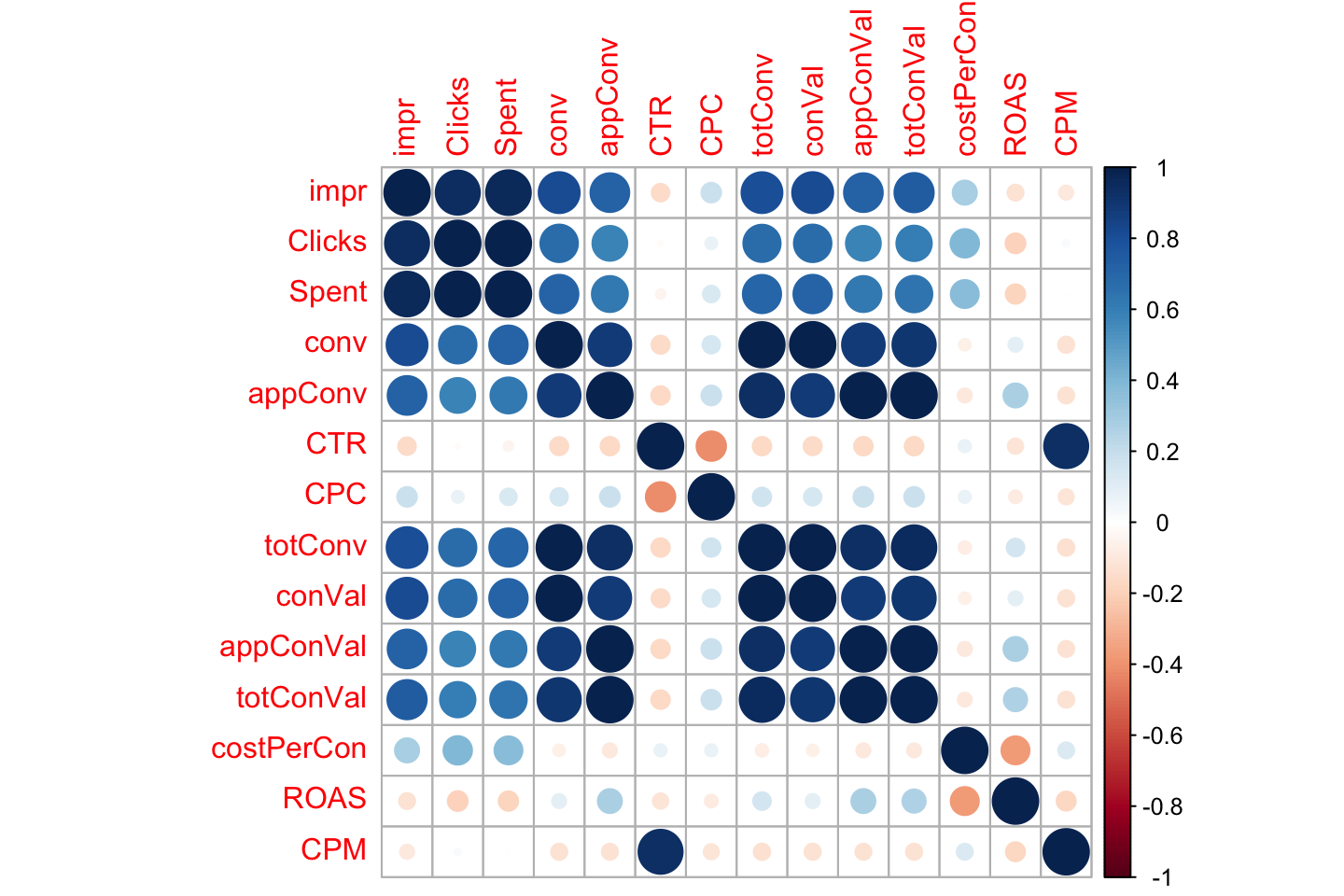

Looking at the correlation matrix: If we are working with a model which assumes a linear relationship between the dependent and the independent variables, corr matrix can help us come up with an initial list of variable importance. However, corr matrix also works as a “rough informative tool” for nonlinear modelling.

Running automatic feature selection algorithms. Functions in R include, among others:

- c1. regsubsets() function in “leaps” library (presented in ISL, p. 244); used to select the best size model that contains a given number of predictors, where best is quantified using Residual Sum of Squares (RSS). Although regsubsets() is based on testing linear models, it works as a “rough” list for nonlinear models.

- c2. step.Gam() function in “gam” library for stepwise selection of variables in GAM models. This is useful when the number of predictors is not very high.

- c3. advanced feature selection methods based on other data-mining techniques, including but not only: random forests, Bayesian Networks, Neural Networks, or other. Notice, that we can use very complex methods of feature selection, and then construct a model that is more transparant, for instance GAMs

We will end up with candidate models, during the case study a new approach was introduced, which was quite nice, this has the following procedure:

- Train the candidate model

- Do cross validation of k partitions on the train data.

- Select the best model.

- Run this on the test data. NB: Ideally we don’t want to run more than one model on the test data, as that will lead to data leakage and we may choose the best model based on the best fit to the test data. That is wrong.

2.1.6.2 GAM for regression problems

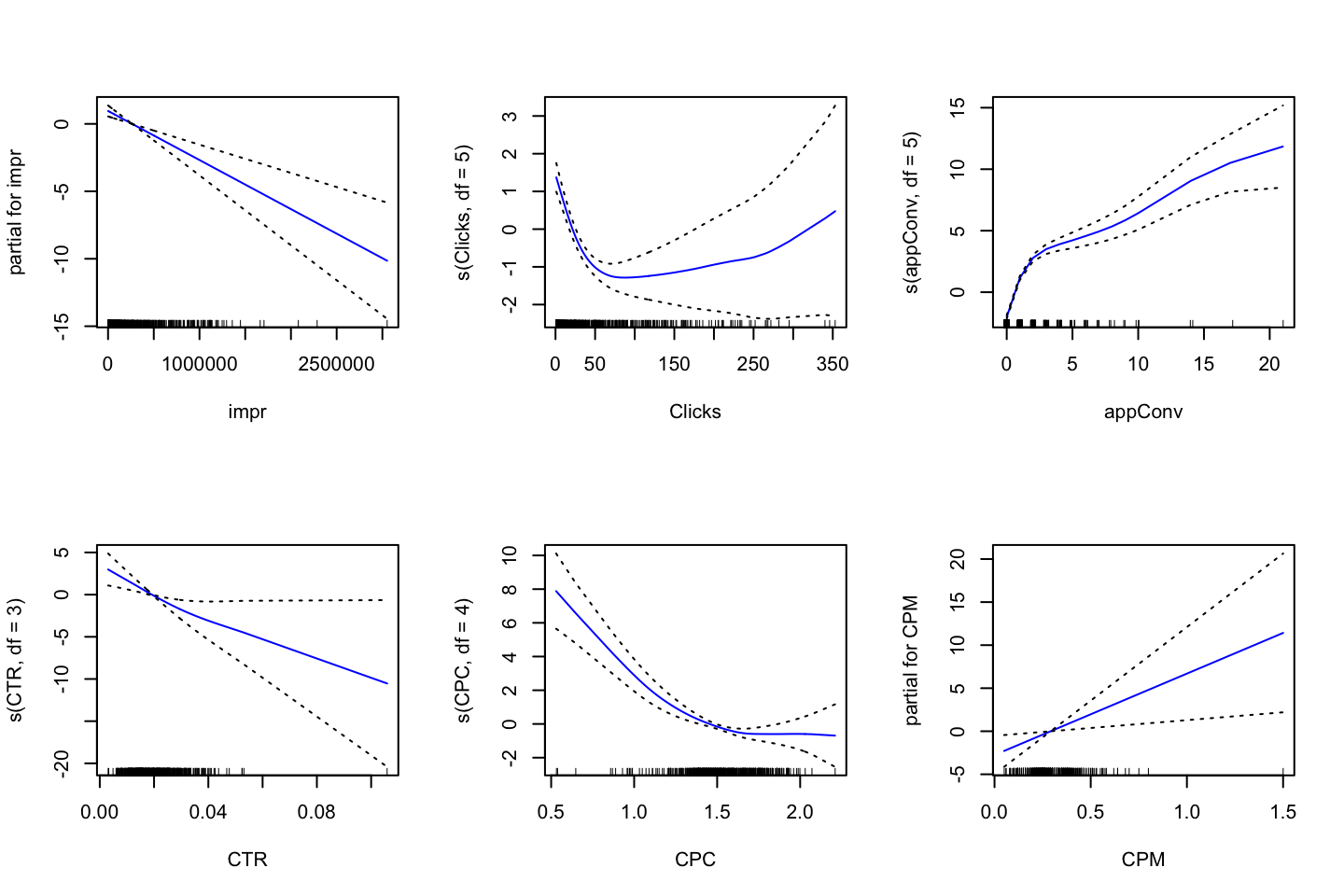

Now we move beyond being constrained to only one predictor variable, hence GAM can be seen more as an extension of multiple linear regression. Hence GAM is a combination of different functions, where they are each fitted while holding the other variables fixed. GAM can consist of any different non-linear model, e.g., we can just as well use local regression, polynomial regression, or any combination of the approaches seen above in this subsection.

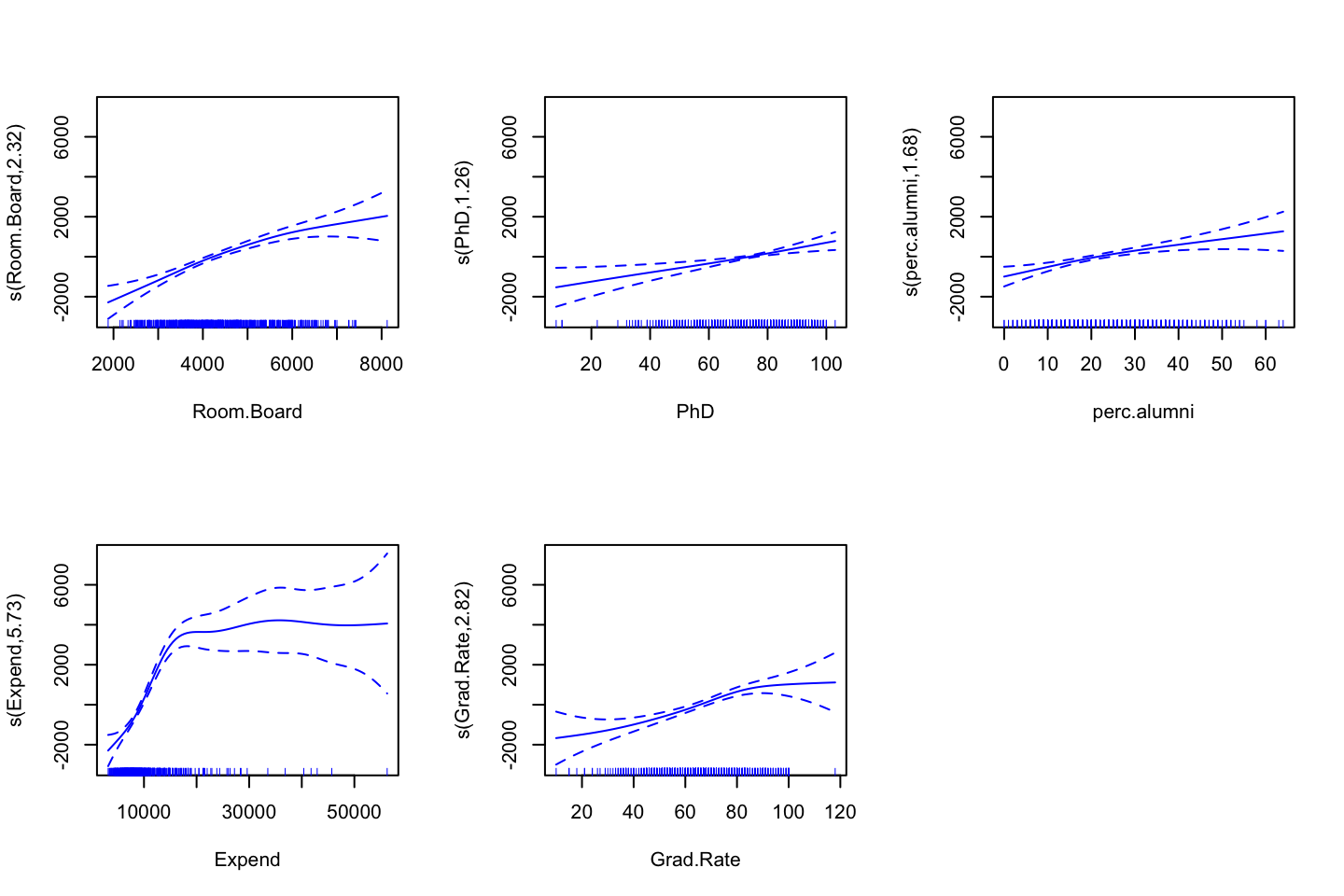

See section @ref(fig:GAMPlotLab7.8.3) for explanation of interpretation of the plots.

Disadvantages of GAM:

- The fitting procedure holds the other variables fixed, hence it does not count for interactions. Therefore, one may manually construct interaction variables to account for this, just like in mulitple linear regression.

- Prediction wise it is not competitive with Neural Networks and Support Vector Machines.

Advantages of GAM:

- Allowing to fit non linear function for j variables (\(f_j\))

- Has potential of making more accurate predictions

- As the model is additive (meaning that each function is fitted holding the other variables fixed) we are still able to make inference, e.g., assessing how one variable affects the y variable.

- Smoothness of function \(f_j\) can be summarized with degrees of freedom.

- Often applied when aiming for explanatory analysis (instead of prediction)

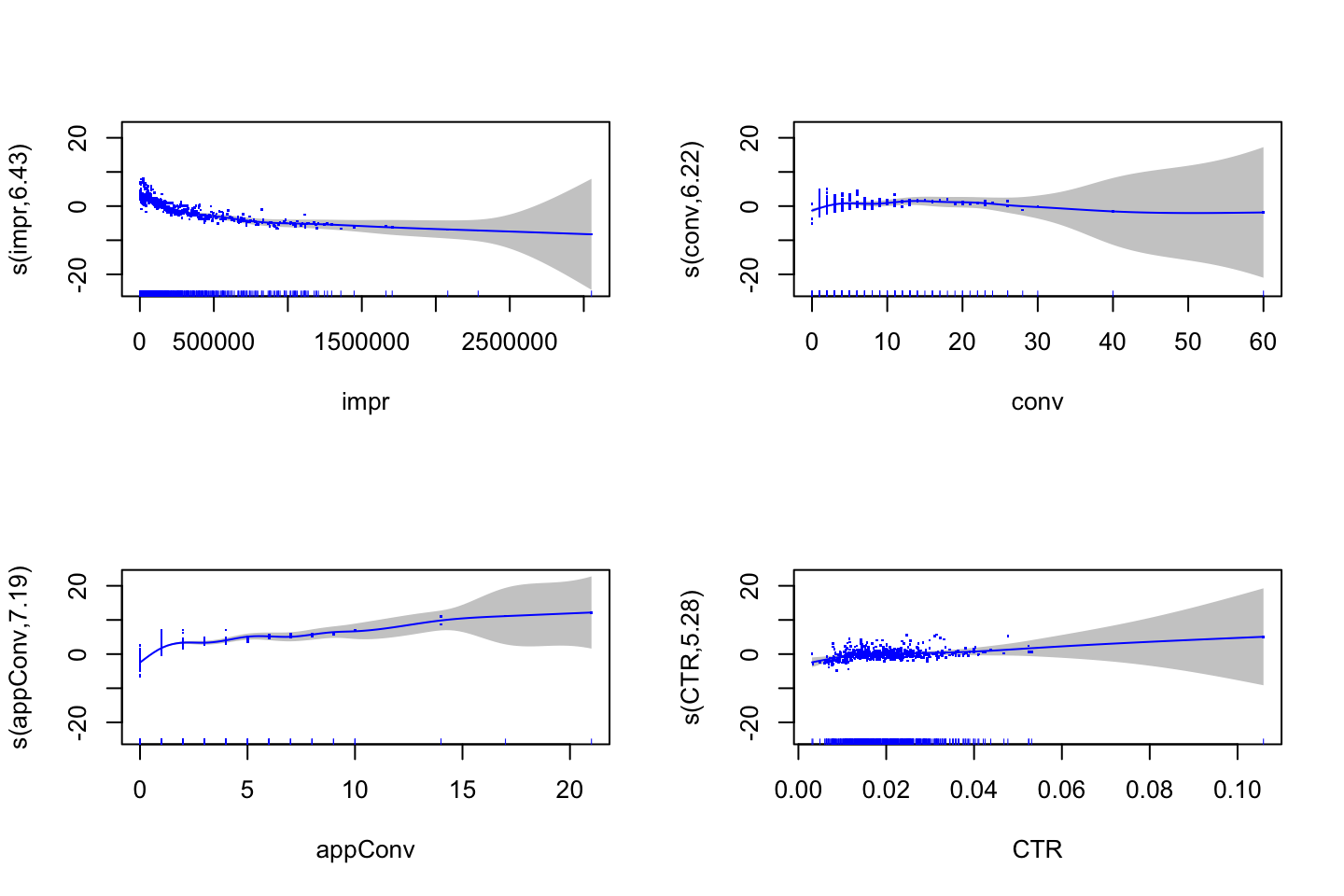

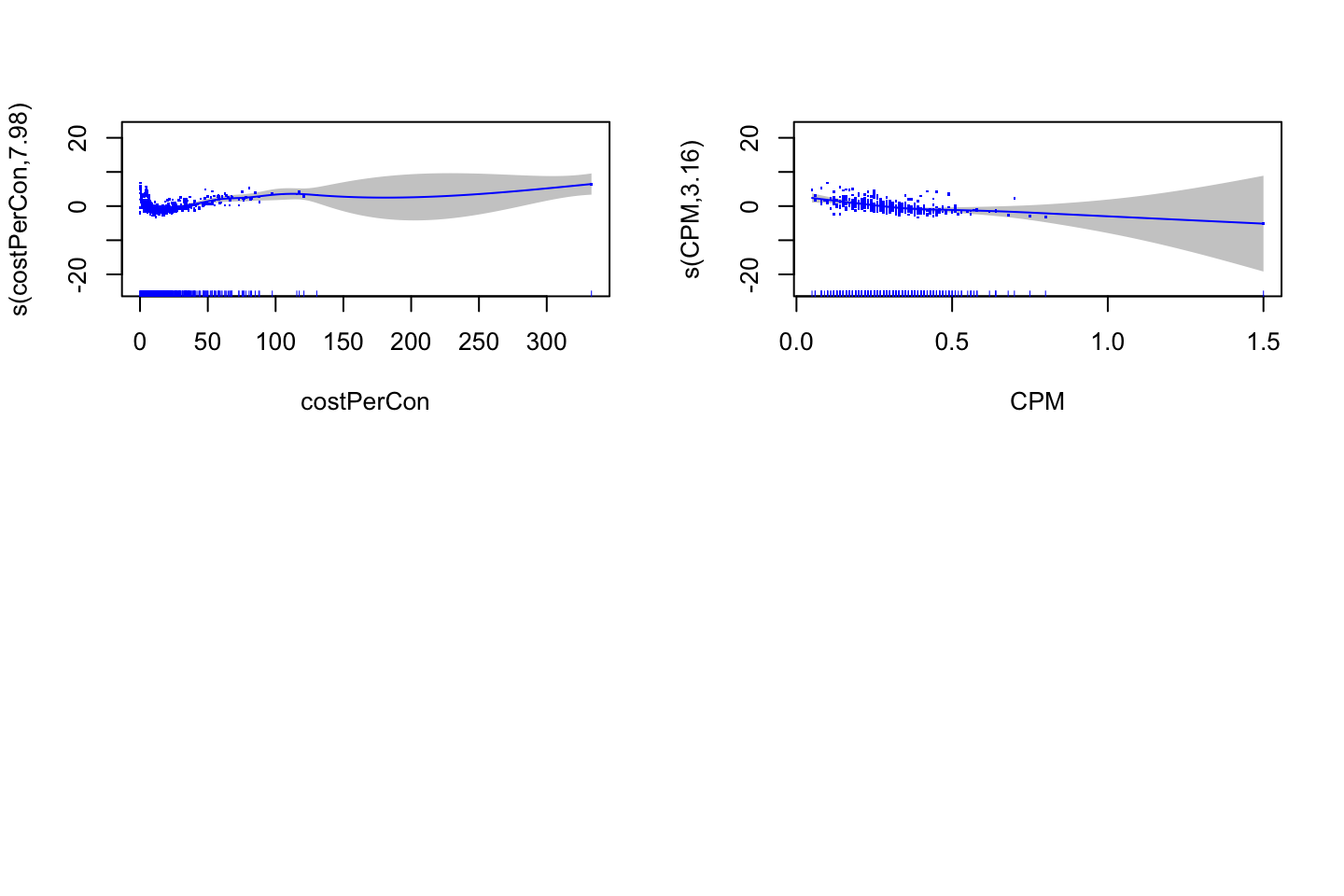

2.1.6.2.1 Interpretation of output

Remember GAM is a construct of M different models, hence there are different areas one must be aware of.

With smooth variables:

summary()will output approximate signifcance of smooth terms,- Here we can assess if there is statistical evidence for including the smoothing on the variable. But notice, that this is merely the p-value.

- We can also assess the

edf= estimated degrees of freedom, hence the flexibility of each smoothed variable.

With linear variables we get Parametric coefficients (from

summary()): These are what we know from the linear scenario. It assigns a p-value and coefficients to the linear variables. Interpretation wise, we should not pay too much attention to this, although it is always good to have the p-values in mind.With

summary()we also get the traditional information, such as \(R^2\) and adjusted. This can also be found in the documentation.

2.1.6.3 GAM for classification problems

When y is qualitative (categorical), GAM can also be applied in the logistical form.

As discovered in the classification section, we can apply logits (log of odds) and odds, see material from first semester.

The same advantages and disadvantages as in the prior section applies.

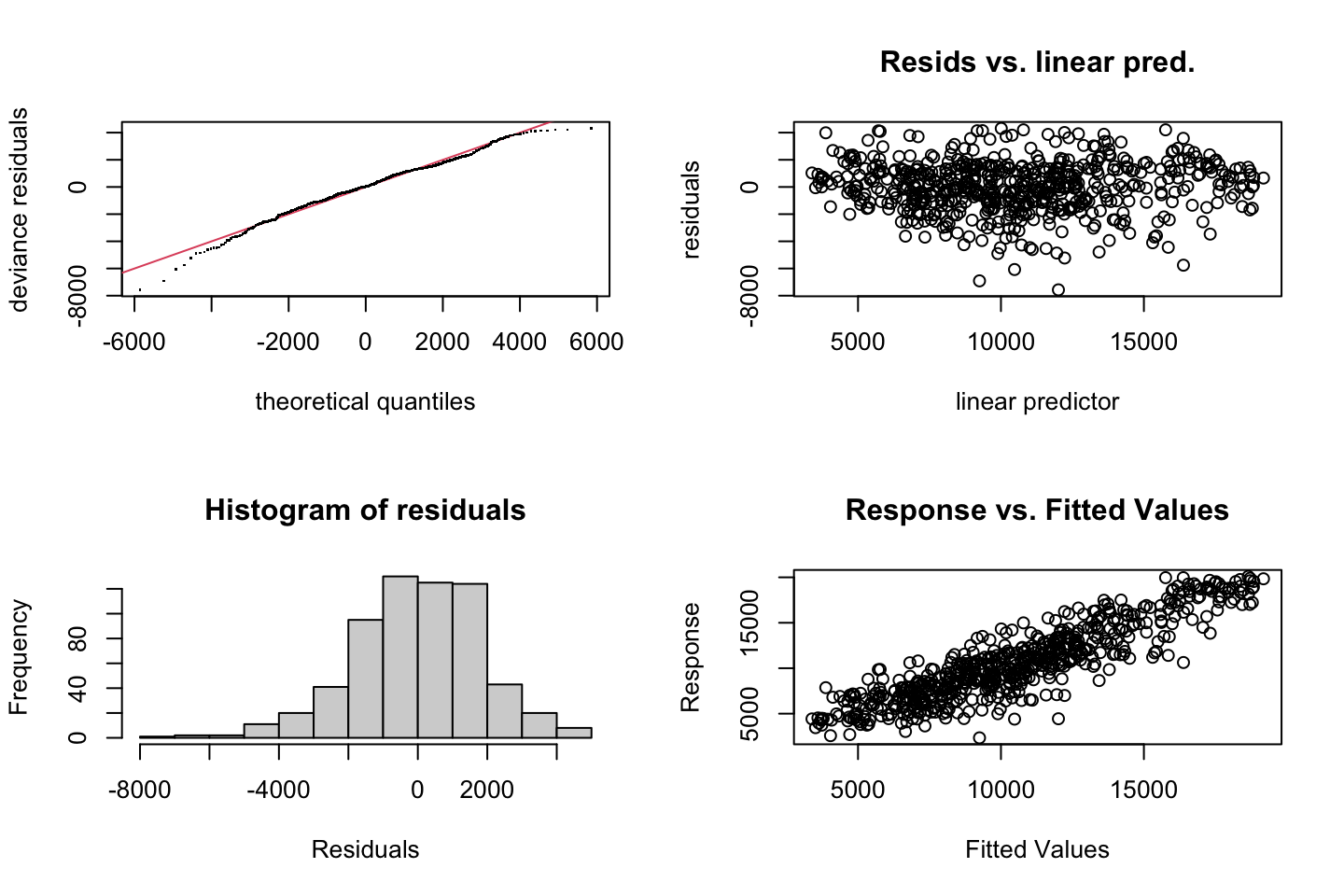

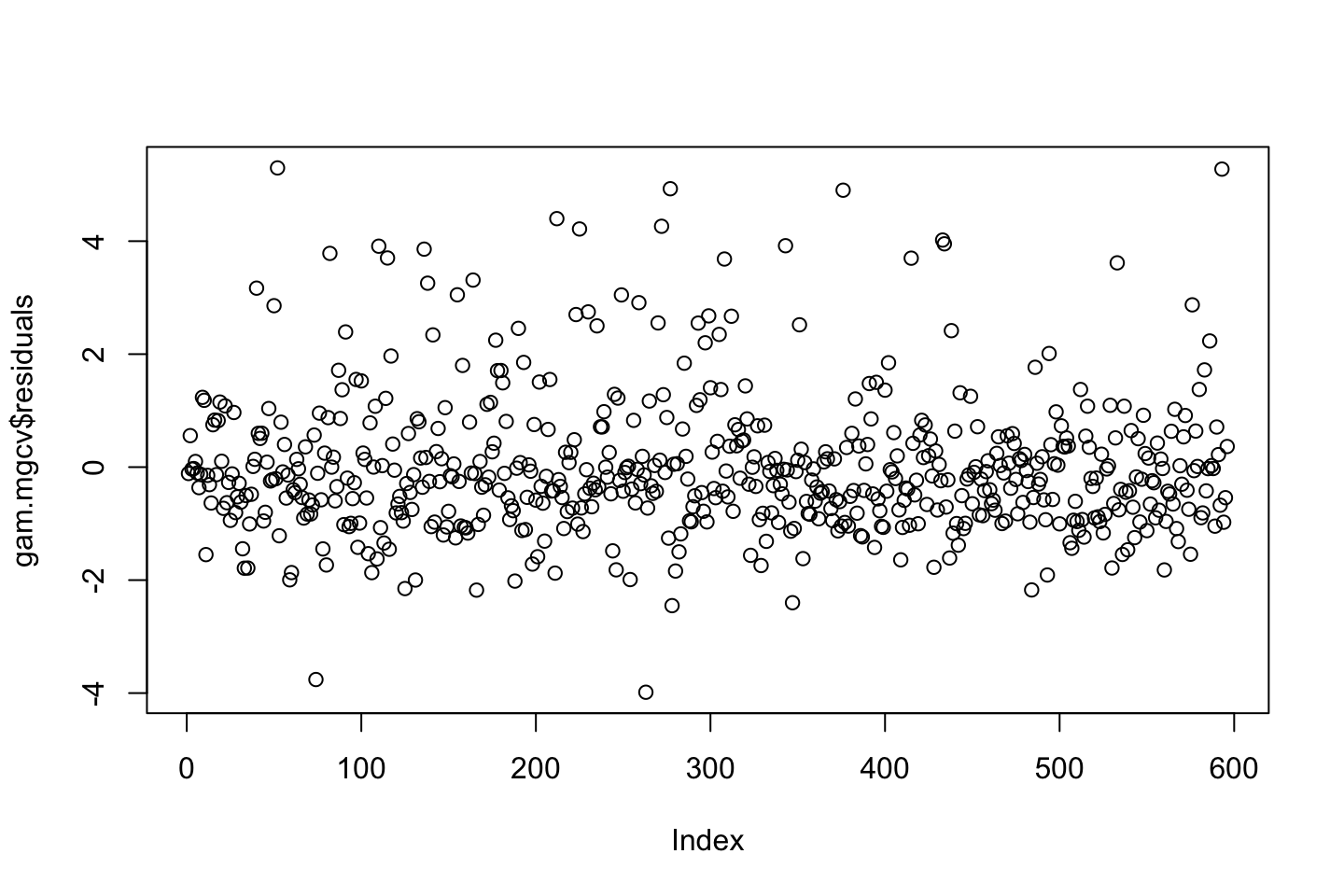

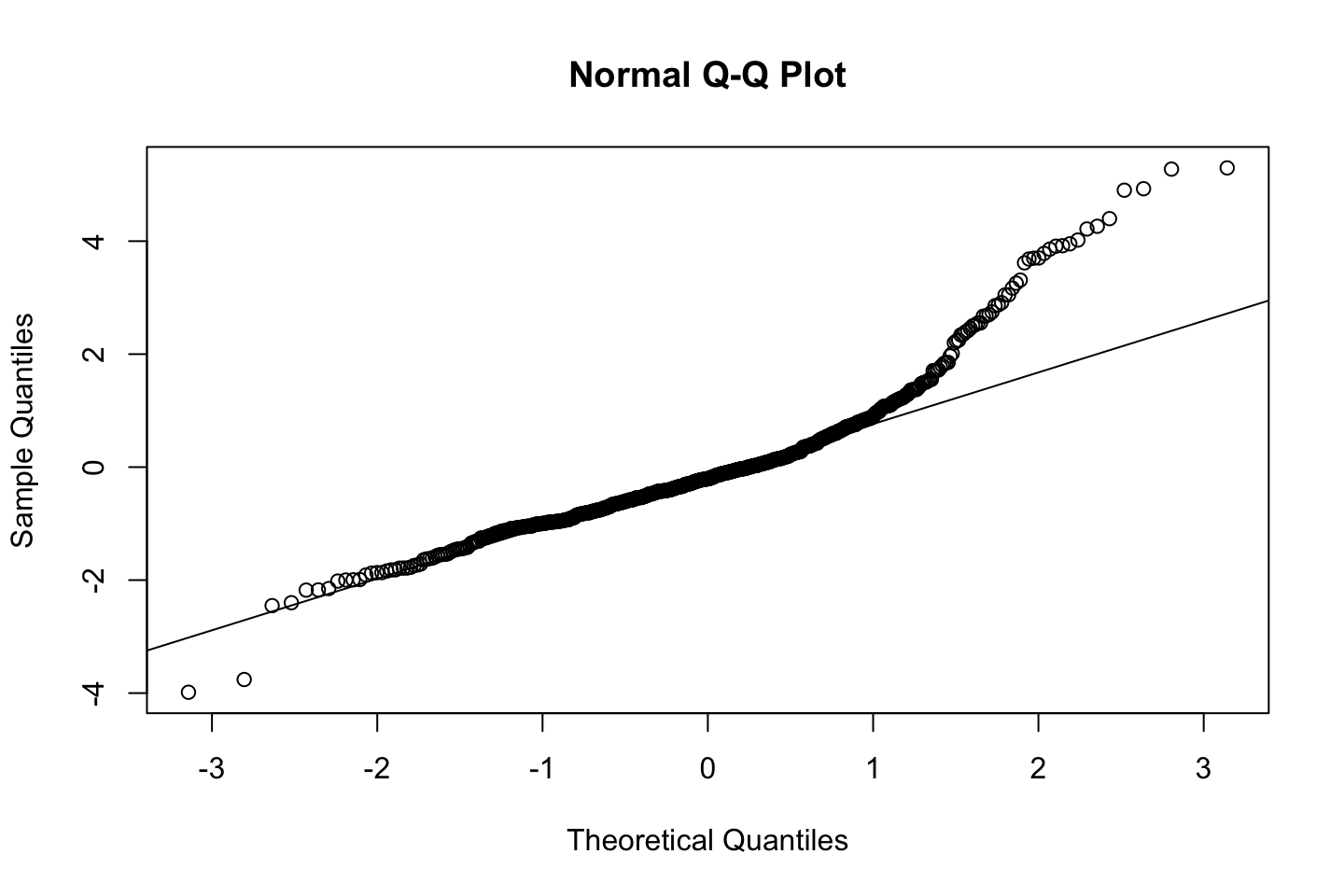

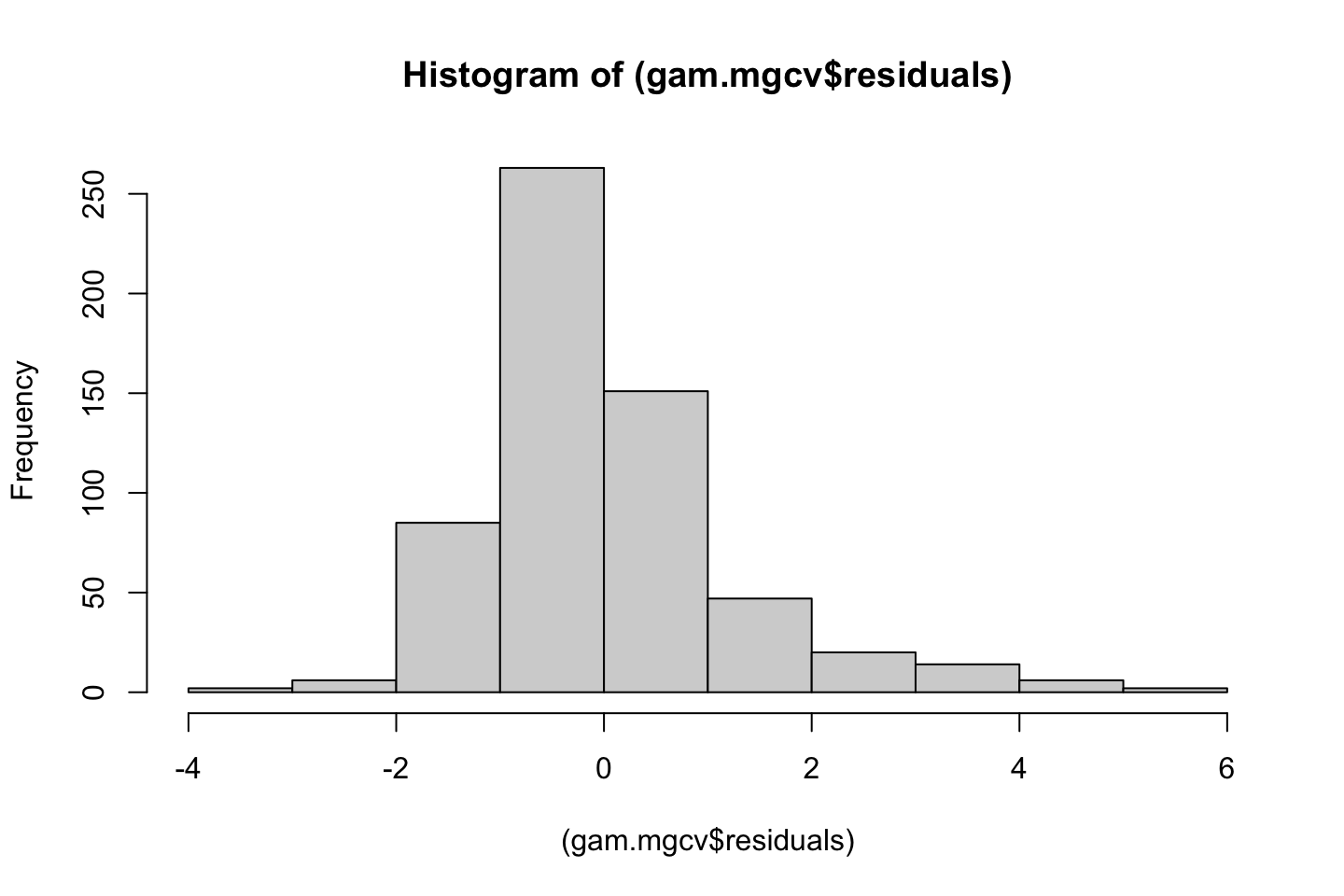

2.1.7 Model assessment

The same applies as in ML1, thus I refer to ML1.

Also one must always assess the residuals, e.g., for normality.

2.2 Lecture notes

Talking about polynomials and what they are, e.g., can be parabula, etc.

With splines we set polynomials in each of the X regions. Where just polynomial regression is fitted to the whole dataset, and not just in regions.

By default poly() will make orthogonal polynomials. Meaning that it tries to create orthogonal terms, where the polynomials are orthogonal (not related to each other). As that is default, then we have to define, that we want to use raw data, hence raw = TRUE, hence we will get the regular polynomials of the dataa.

See notes in her R file.

General Wrap-Up

- The approaches are similar

- Must be aware of why the models are used

- Try them out

- Assess how they look

2.3 Lab section

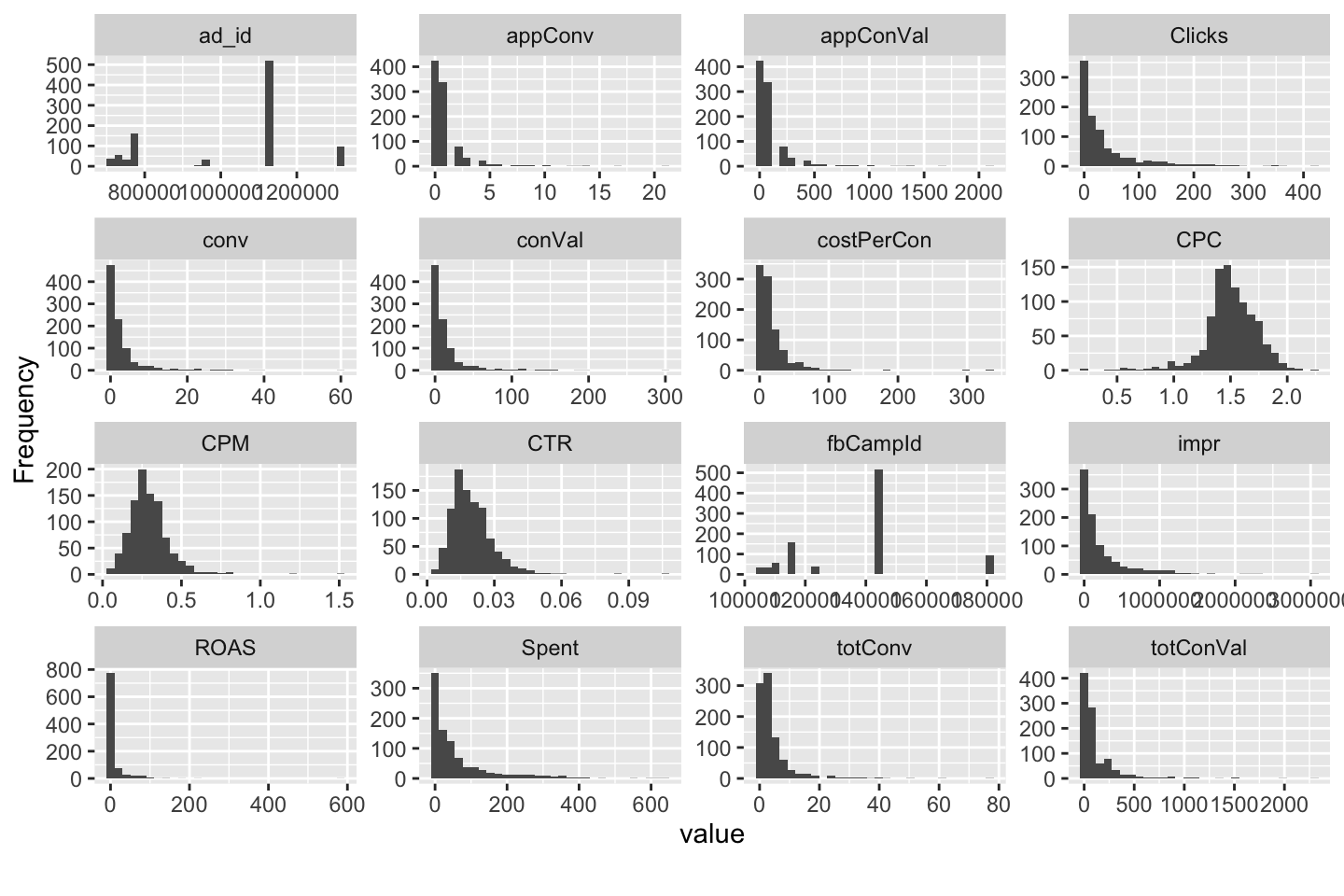

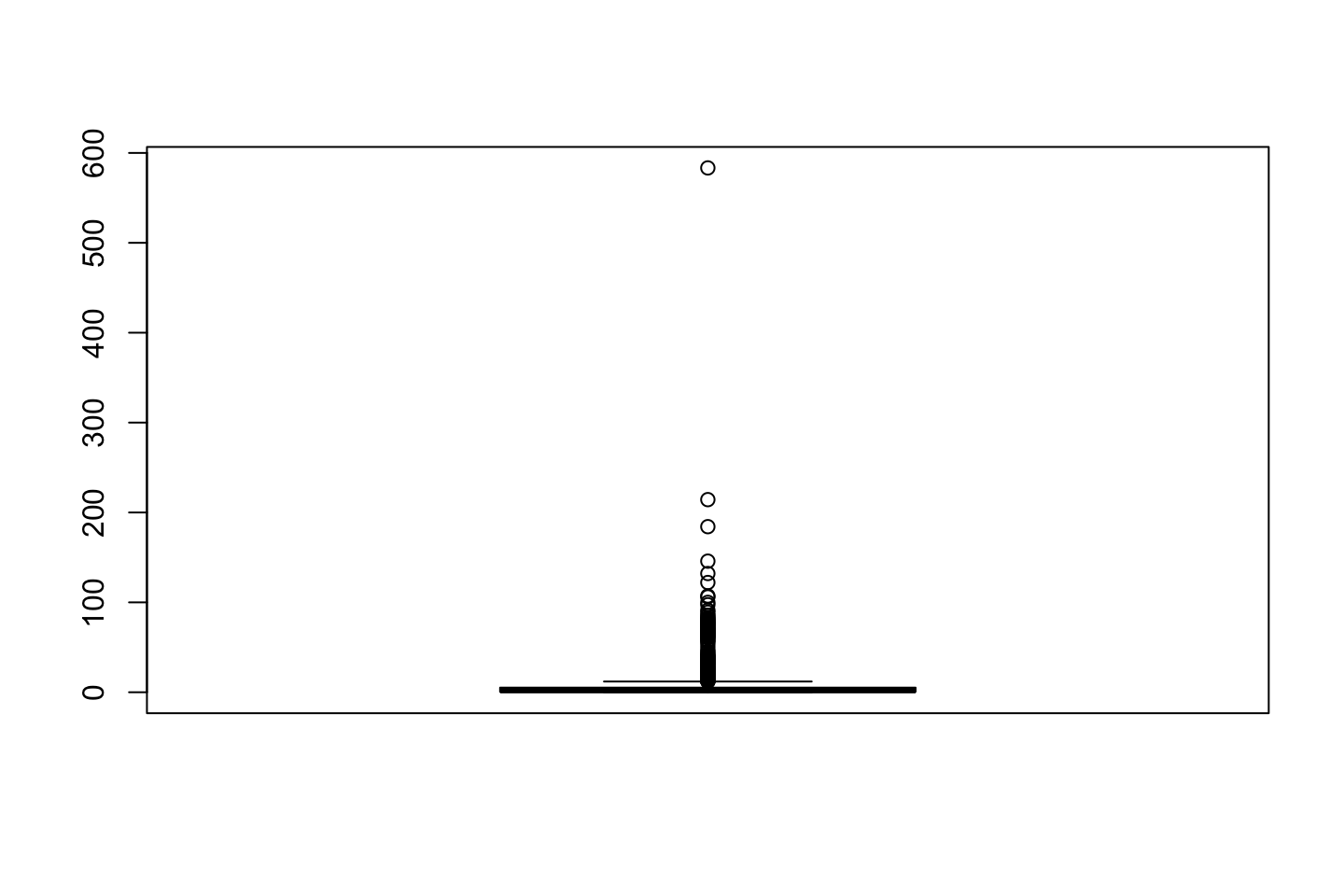

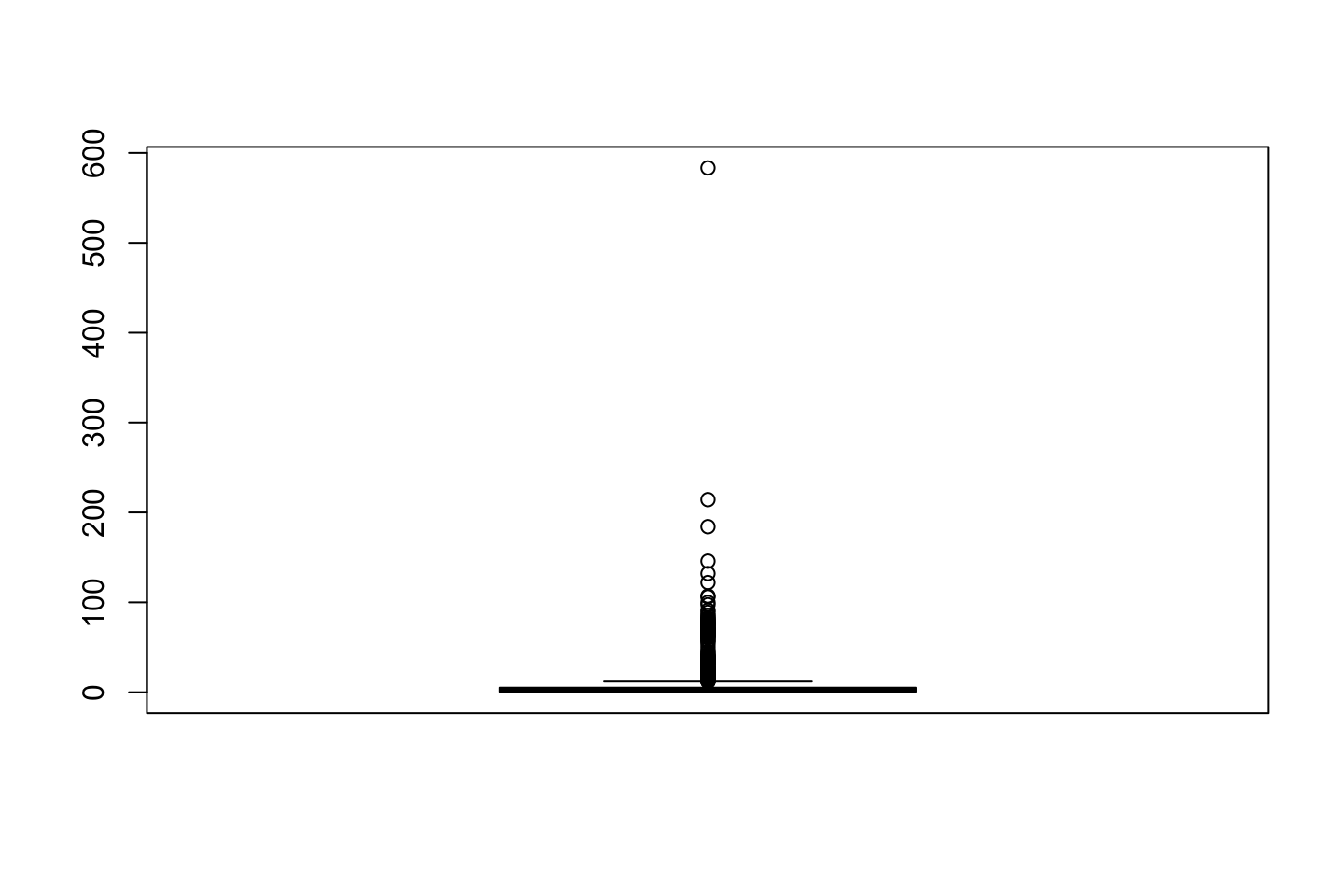

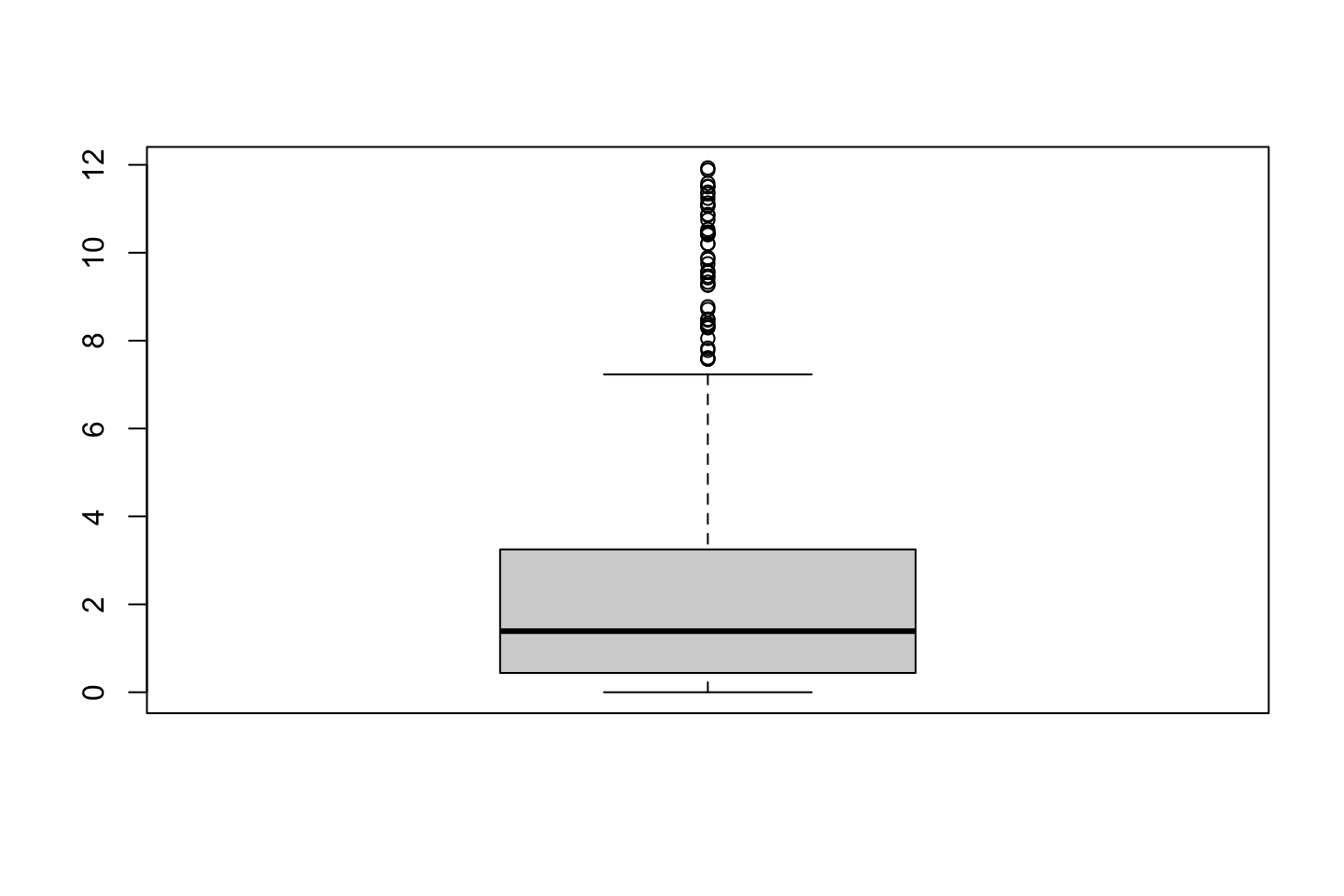

Loading the data that will be used throughout the lab section.

library(ISLR)

attach(Wage)

df <- Wage

2.3.1 Polynomial Regression and Step Functions

2.3.1.1 Continous model

Fitting the model:

fit <- lm(wage ~ poly(age,4) #Orthogonal polynomials

,data = df)

fit2 <- lm(wage ~ poly(age,4,raw = TRUE) #Orthogonal polynomials

,data = df)Note: poly() returns orthogonal polynomials, which is some linear combination of the variables to the d power. See the following two examples when using orthogonal and normal polynomials:

{

print("Orthogonal")

cbind(df$age,poly(x = df$age,degree = 4))[1:5,] %>% print()

print("Regular")

cbind(df$age,poly(x = df$age,degree = 4,raw = TRUE))[1:5,] %>% print()

}## [1] "Orthogonal"

## 1 2 3 4

## [1,] 18 -0.0386247992 0.055908727 -0.0717405794 0.08672985

## [2,] 24 -0.0291326034 0.026298066 -0.0145499511 -0.00259928

## [3,] 45 0.0040900817 -0.014506548 -0.0001331835 0.01448009

## [4,] 43 0.0009260164 -0.014831404 0.0045136682 0.01265751

## [5,] 50 0.0120002448 -0.009815846 -0.0111366263 0.01021146

## [1] "Regular"

## 1 2 3 4

## [1,] 18 18 324 5832 104976

## [2,] 24 24 576 13824 331776

## [3,] 45 45 2025 91125 4100625

## [4,] 43 43 1849 79507 3418801

## [5,] 50 50 2500 125000 6250000In the end, it does not have a noticeable effect.

options(scipen = 5)

{

coef(summary(fit)) %>% print()

coef(summary(fit2)) %>% print()

}## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 111.70361 0.7287409 153.283015 0.000000e+00

## poly(age, 4)1 447.06785 39.9147851 11.200558 1.484604e-28

## poly(age, 4)2 -478.31581 39.9147851 -11.983424 2.355831e-32

## poly(age, 4)3 125.52169 39.9147851 3.144742 1.678622e-03

## poly(age, 4)4 -77.91118 39.9147851 -1.951938 5.103865e-02

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) -184.1541797743 60.04037718327 -3.067172 0.0021802539

## poly(age, 4, raw = TRUE)1 21.2455205321 5.88674824448 3.609042 0.0003123618

## poly(age, 4, raw = TRUE)2 -0.5638593126 0.20610825640 -2.735743 0.0062606446

## poly(age, 4, raw = TRUE)3 0.0068106877 0.00306593115 2.221409 0.0263977518

## poly(age, 4, raw = TRUE)4 -0.0000320383 0.00001641359 -1.951938 0.0510386498Even though the coefficients are different and the p-values hereof, the fitted values will be indistinguishable (Hastie et al. 2013, 288). This is also shown later.

Alternatives to using poly()??

We have two alternatives:

Using

I()Using

cbind()Using

I()

fit2a <- lm(wage ~ age + I(age^2) + I(age^3) + I(age^4) #Note that 'I()' is added

,data = df)

coef(fit2a)## (Intercept) age I(age^2) I(age^3) I(age^4)

## -184.1541797743 21.2455205321 -0.5638593126 0.0068106877 -0.0000320383Notice I() as ‘^’ has another special meaning in formulas

Hence we see that the coefficients are the same.

- Using

cbind()

fit2b <- lm(wage ~ cbind(age,age^2,age^3,age^4)

,data = df)

coef(fit2b)## (Intercept) cbind(age, age^2, age^3, age^4)age

## -184.1541797743 21.2455205321

## cbind(age, age^2, age^3, age^4) cbind(age, age^2, age^3, age^4)

## -0.5638593126 0.0068106877

## cbind(age, age^2, age^3, age^4)

## -0.0000320383We see that we are now able to use ‘^’ within the cbind().

proceeding with the lab sections. We can now present a grid of values for age, at which we want predictions and then call the predict() and also plot the standard errors.

agelims <- range(df$age) #The min and max

age.grid <- seq(from = agelims[1],to = agelims[2]) #Creating a counter within the range

preds <- predict(object = fit

,newdata = list(age = age.grid) #Creating a list with the counter named age, so it fits the IV naming

,se.fit = TRUE)

se.bands <- cbind(preds$fit + 2*preds$se.fit #Upper band

,preds$fit-2*preds$se.fit) #Lower bandNotice that 2 SE (2 sd) = 95%, hence we expect to contain 95% of the data within confidence levels.

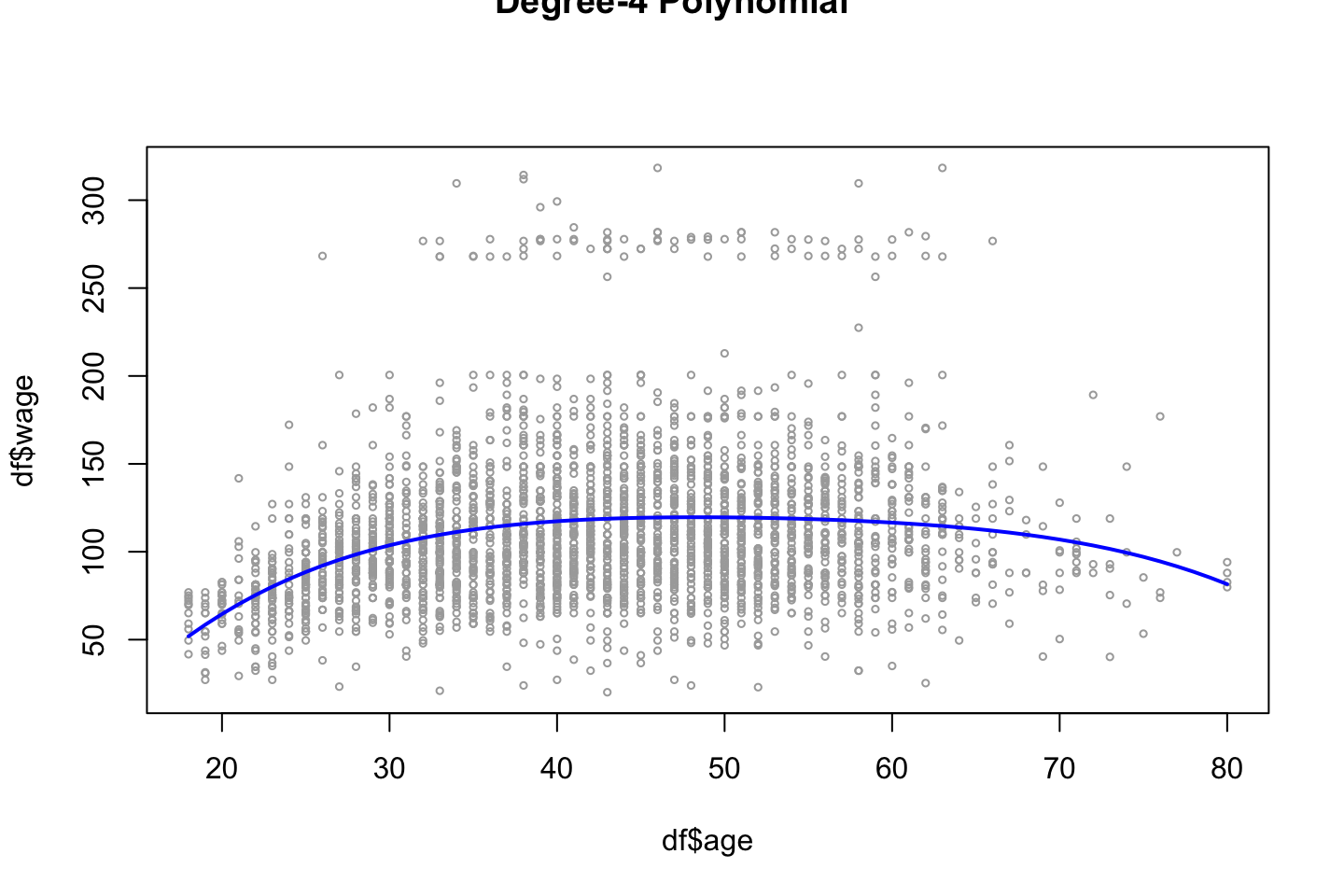

Now we can plot the data

plot(x = df$age,y = df$wage

,xlim = agelims

,cex = 0.5 #Size of dots

,col = "darkgrey")

title("Degree-4 Polynomial",outer = TRUE)

lines(x = age.grid,y = preds$fit

,lwd = 2

,col = "blue")

Comparison between raw polynomials and orthogonal polynomials

With the following we see that the difference of the fitted values are practically 0.

preds2 <- predict(object = fit2

,newdata = list(age = age.grid)

,se.fit = TRUE)

max(abs(preds$fit-preds2$fit))## [1] 7.81597e-11In terms of predictions, the two approaches are more or less the same, although the orthogonal polynomials removes some effect of collinearity.

Assessing what polynomial to include

Now we can compare models with different orthogonal polynomials. Using ANOVA, which compare the RSS to see if the decrease in RSS is significant.

fit.1 <- lm(wage~age,data=df)

fit.2 <- lm(wage~poly(df$age,2),data=df)

fit.3 <- lm(wage~poly(df$age,3),data=df)

fit.4 <- lm(wage~poly(df$age,4),data=df)

fit.5 <- lm(wage~poly(df$age,5),data=df)

anova(fit.1,fit.2,fit.3,fit.4,fit.5)| Res.Df | RSS | Df | Sum of Sq | F | Pr(>F) |

|---|---|---|---|---|---|

| 2998 | 5022216 | NA | NA | NA | NA |

| 2997 | 4793430 | 1 | 228786.010 | 143.5931074 | 0.0000000 |

| 2996 | 4777674 | 1 | 15755.694 | 9.8887559 | 0.0016792 |

| 2995 | 4771604 | 1 | 6070.152 | 3.8098134 | 0.0510462 |

| 2994 | 4770322 | 1 | 1282.563 | 0.8049758 | 0.3696820 |

Note,

- the anova compares the sum of resduals squared.

- the anova follows an F distribution, hence we could apple the critical values

based on the anova we see that the errors change significantly until the 5th degree, hence the decision should be to take the model with order 4 of polynomials.

Notice, that the model will never become worse in sample when complexity is added, as we fit the model more to the data.

Alternative

We could also have obtained the same output using coef() instead of the anove, where we see that teh p-values are the same, also the squared value of t (\(t^2=F\)).

coef(summary(fit.5))## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 111.70361 0.7287647 153.2780243 0.000000e+00

## poly(df$age, 5)1 447.06785 39.9160847 11.2001930 1.491111e-28

## poly(df$age, 5)2 -478.31581 39.9160847 -11.9830341 2.367734e-32

## poly(df$age, 5)3 125.52169 39.9160847 3.1446392 1.679213e-03

## poly(df$age, 5)4 -77.91118 39.9160847 -1.9518743 5.104623e-02

## poly(df$age, 5)5 -35.81289 39.9160847 -0.8972045 3.696820e-01Notice: this is only an alternative when we exclusively have polynomials in the model!

Using ANOVA to assess for best models

The following is another example of using ANOVA where different variables are used:

And recall, that we should never apply p-values of variables in a model, to decide which that should be included.

NOTE; this approach only works when the models are nested, meanin that the overall variables are the same, hence M2 could not have regian for instance, they all need to have the same overall variable

fit.1 = lm(wage~education +age ,data=df)

fit.2 = lm(wage~education +poly(age ,2) ,data=df)

fit.3 = lm(wage~education +poly(age ,3) ,data=df)

anova(fit.1,fit.2,fit.3)| Res.Df | RSS | Df | Sum of Sq | F | Pr(>F) |

|---|---|---|---|---|---|

| 2994 | 3867992 | NA | NA | NA | NA |

| 2993 | 3725395 | 1 | 142597.10 | 114.696898 | 0.0000000 |

| 2992 | 3719809 | 1 | 5586.66 | 4.493588 | 0.0341043 |

What are we looking for?

- What model lowers the RSS significantly.

Using CV

We could also have chosen the order of polynomials using cross validation.

library(boot)

set.seed(19)

cv.error = rep (0, 5)

for (i in 1:5)

{

fit.i=glm(wage~poly(age,i),data=Wage) # notice glm here in conjunction with cv.glm function

cv.error[i]=cv.glm(Wage, fit.i, K=10)$delta[1] #K fold CV

}

cv.error # the CV errors of the five polynomials models## [1] 1674.979 1600.176 1595.913 1594.003 1596.304Concl: A 5 order model is not justified as the it starts increasing. Also we see that the 4th order d, is the best, which corresponds with previous findings.

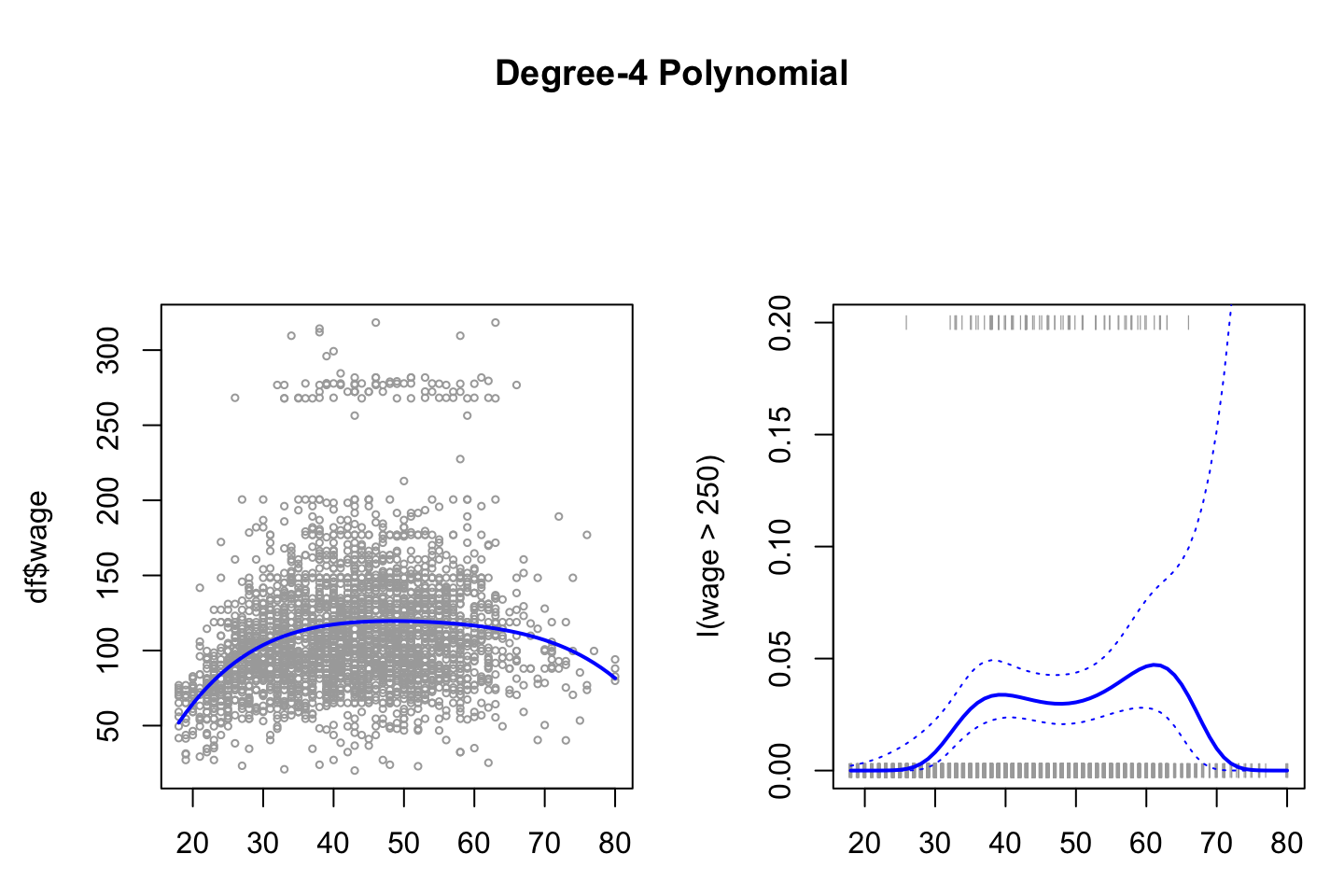

2.3.1.2 Logarithmic model

The procedure is per se the same, but now we are working with a probabilistic model instead of. Hence the outcome must be binary. Thus, it is decided to predict whether a persons wage is higher or lower than 250.

fit <- glm(I(wage > 250) ~ poly(age,4) #Note the use of I()

,data = df

,family = binomial)Note, that again I() is used, where the expression is evaluated on the fly, one could naturally also had made a vector of the classes.

Note, by default glm() will transform TRUE and FALSE to respectively 1 and 0.

Now we can make predictions, which are logits, these can be used for much, hence later we will transform them into probabilities.

preds = predict(fit

,newdata = list(age=age.grid)

,se.fit = TRUE)

# We could have added type = response to get probabilities

preds$fit[1:10]#First 10 logits## 1 2 3 4 5 6 7

## -18.438190 -16.395452 -14.560646 -12.919746 -11.459196 -10.165904 -9.027249

## 8 9 10

## -8.031077 -7.165700 -6.419899To make confidence intevals for Pr(Y = 1|X), i.e.

\[\begin{equation} Pr(Y=1|X)= \frac{exp(X\beta)}{1+exp(X\beta)} \tag{2.5} \end{equation}\]

Where \(X\beta\) can be explained by:

\[\begin{equation} log(\frac{Pr(Y=1|X)}{1-Pr(Y=1|X)})=X\beta \tag{2.6} \end{equation}\]

Hence we must first calculate \(X\beta\) to find Pr(Y=1|X).

#Making Prbabilities

pfit = exp(preds$fit)/(1+exp(preds$fit)) #See equation above

#X beta

se.bands.logit = cbind(preds$fit+2*preds$se.fit #Upper level

,preds$fit-2*preds$se.fit) #Lower level

#Pr(Y = 1|X)

se.bands = exp(se.bands.logit)/(1+exp(se.bands.logit))Remember that 2 SE = 95%, thus with the confidence levels we expect to contain 95% of the data.

Notice, that the posterior probabilities could also have been found by using predict(), see the following:

preds = predict (fit

,newdata = list(age = age.grid)

,type = "response" #Getting probabilities instead of logits

,se.fit = TRUE)NOTICE: for some reason this will lead to wrong confidence intervals (Hastie et al. 2013, 292), thus we prefer the regular approach, as shown before

Now we can make the right hand plot, so we can compare with continous result.

par(mfrow = c(1,2)

,mar = c(3,4.5,4.5,1.1) #Controls the margins

,oma = c(0,0,4,0)) #Controls the margins

#Copy from earlier to combine plots

fit <- lm(wage ~ poly(age,4) #Orthogonal polynomials

,data = df)

preds <- predict(object = fit

,newdata = list(age = age.grid)

,se.fit = TRUE)

plot(x = df$age,y = df$wage

,xlim = agelims

,cex = 0.5 #Size of dots

,col = "darkgrey")

title("Degree-4 Polynomial",outer = TRUE)

lines(x = age.grid,y = preds$fit

,lwd = 2

,col = "blue")

#The new plot

plot(x = age,y = I(wage >250)

,xlim = agelims

,type ="n"

,ylim = c(0,.2))

points(jitter(age)

,I((wage>250)/5)

,cex = .5

,pch = "|"

,col = "darkgrey")

lines(x = age.grid,y = pfit

,lwd = 2

,col= "blue")

matlines(x = age.grid

,y = se.bands

,lwd = 1

,col = "blue"

,lty = 3)

We see on the right hand panel that the all the observations that have a wage above 250 is in the top and all those below hare in the bottom of the visualization. Although at the tail, we aren’t able to conclude much, as confidence interval is really high, hence it can both be high and low earners.

jitter() is merely an approach to avoid observations to overlap each other.

2.3.1.3 Step function

To fit the step function we must do:

- Define the cuts,

cut()is able to automatically pick cutpoints. One could also usebreak()to define where the cuts should be. - Train the model. Notice, that

lm()will automatically create dummy variables for the ranges.

{table(cut(df$age,4)) %>% print()

fit <- lm(wage ~ cut(df$age,4)

,data = df)

coef(summary(fit)) %>% print()}##

## (17.9,33.5] (33.5,49] (49,64.5] (64.5,80.1]

## 750 1399 779 72

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 94.158392 1.476069 63.789970 0.000000e+00

## cut(df$age, 4)(33.5,49] 24.053491 1.829431 13.148074 1.982315e-38

## cut(df$age, 4)(49,64.5] 23.664559 2.067958 11.443444 1.040750e-29

## cut(df$age, 4)(64.5,80.1] 7.640592 4.987424 1.531972 1.256350e-01We see that the p value of the cuts are significant, not that we can use the p-values for much.

Notice, that the first range is the base level, thus it is also left out. We can then use the intercept as the average wage for all in the range of up to 33.5 years.

Hence for a 40 year old person, the model will say that he has an wage of 94 + 24 = 118

rm(list = ls())2.3.2 Splines

The different approaches to splines are presented in the following.

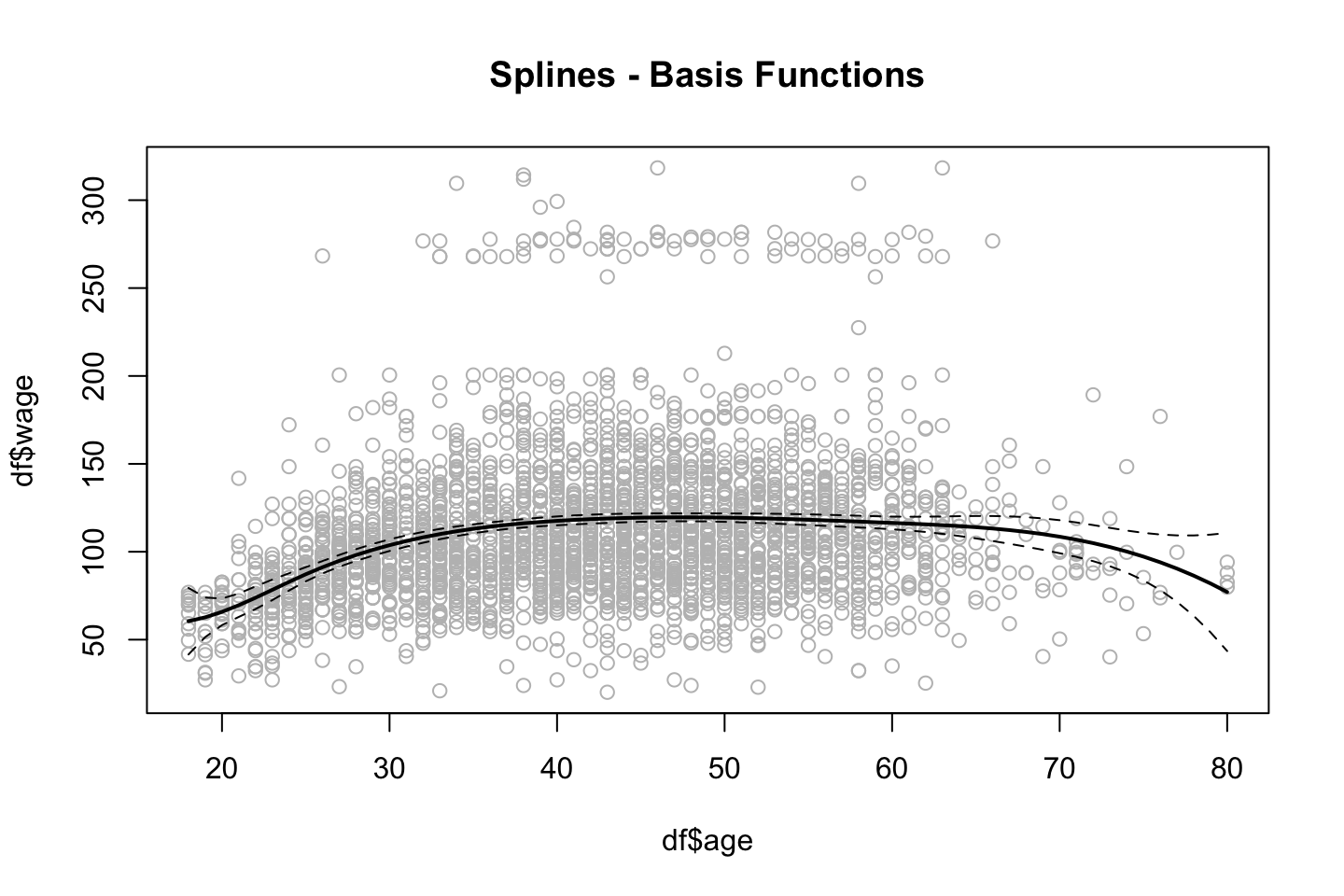

2.3.2.1 Basis Function Splines

library(ISLR)

df <- Wage

library(splines)

agelims <- range(df$age) #The min and max

age.grid <- seq(from = agelims[1],to = agelims[2]) #Creating a counter within the rangeThe splines library contain what we need. We introduce the following functions:

bs(): Basis functions for splines. Generates entire matrix of basis functions for splines with the specified set of knots.ns(): Natural splines.smooth.spline(): Used when fitting smoothing splines.loess(): When fitting local regression.

Note, that by default the splines will be choosen to be 3, this can also be found in the function documentation.

par(mfrow = c(1,1),oma = c(0,0,0,0))

fit.bs <- lm(wage ~ bs(age,knots = c(25,40,60)) #we just chose the knots randomly

,data = df)

pred.bs <- predict(fit.bs

,newdata = list(age = age.grid)

,se.fit = TRUE)

plot(df$age

,df$wage

,col = "gray")

lines(age.grid

,pred.bs$fit

,lwd = 2)

lines(age.grid

,pred.bs$fit+2*pred.bs$se

,lty = "dashed")

lines(age.grid

,pred.bs$fit-2*pred.bs$se

,lty = "dashed")

title("Splines - Basis Functions")

We see that the splines have been fitted to the data and notice that the tails have wider confidence intervals.

We can get the amount of degrees of freedom by calling the dim()function.

{

#Specifying the knots

dim(bs(age,knots = c(25,40,60))) %>% print()

#df can be specified instead of knots

dim(bs(age,df = 6)) %>% print()

}## [1] 3000 6

## [1] 3000 6We see that the two alternatives produce the same results.

Notice, that there are packages that will optimize the amount of knots.

We can assess where the bs() placed the knots, by calling the attr().

attr(bs(age,df=6),"knots")## 25% 50% 75%

## 33.75 42.00 51.00In this case, R chose the 25%, 50% and 75% quantiles.

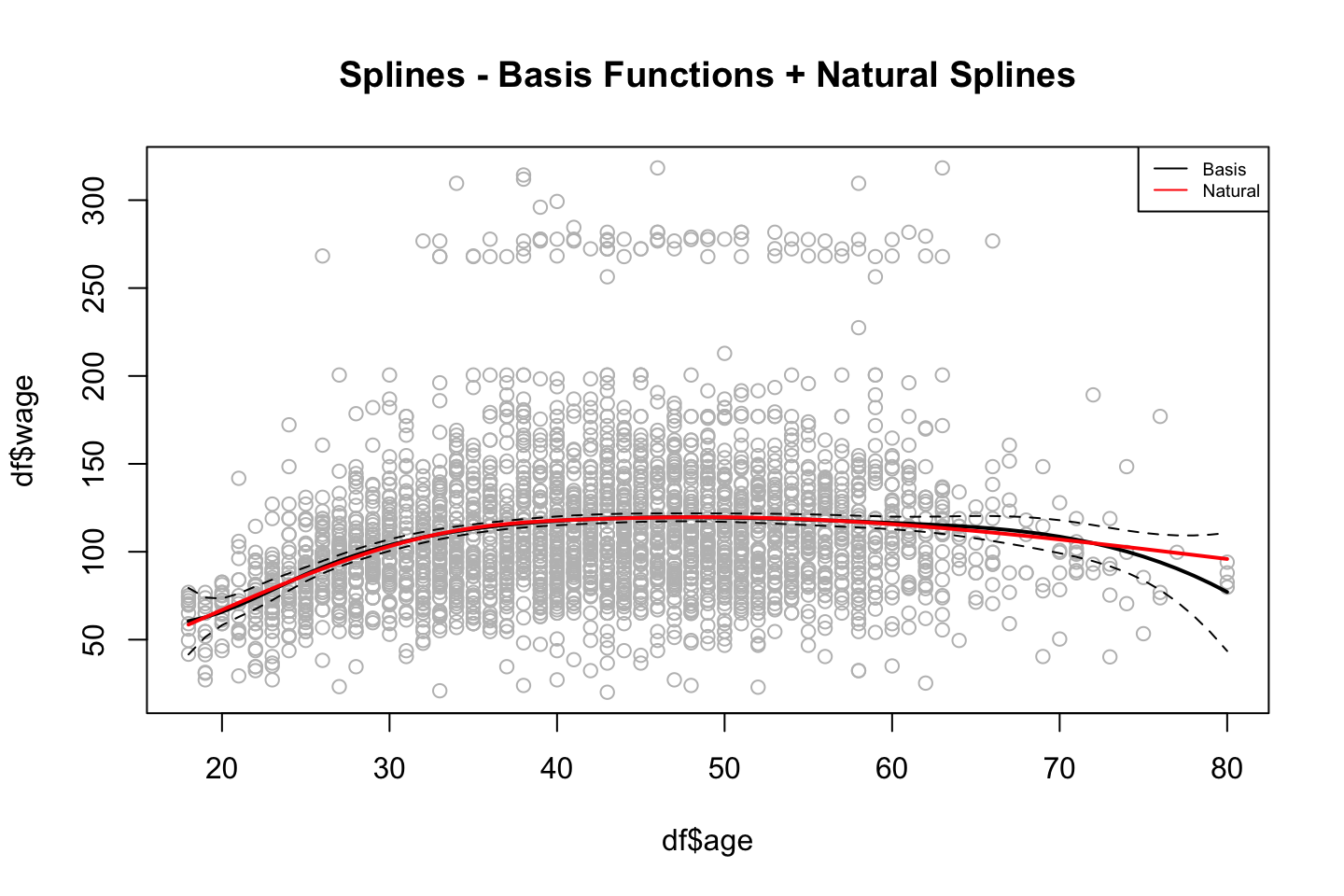

2.3.2.2 Natural Splines

It similar to bs(), but it has an additional condition. I did not really get it.

The fitting procedure is the same, but now we just use ns() instead of bs().

fit.ns = lm(wage ~ ns(age

,df = 4 #Note, as with bs() we could have specified the knots instead of.

)

,data = df)

pred.ns = predict(fit.ns

,newdata = list(age=age.grid)

,se.fit = TRUE)

#Copy of old plot

plot(df$age

,df$wage

,col = "gray")

lines(age.grid

,pred.bs$fit

,lwd = 2)

lines(age.grid

,pred.bs$fit+2*pred.bs$se

,lty = "dashed")

lines(age.grid

,pred.bs$fit-2*pred.bs$se

,lty = "dashed")

#Adding natural splines

lines(age.grid

,pred.ns$fit

,col ="red"

,lwd =2)

title("Splines - Basis Functions + Natural Splines")

legend("topright",c("Basis","Natural"),lty = 1,col = c("Black","Red"),cex = 0.6)

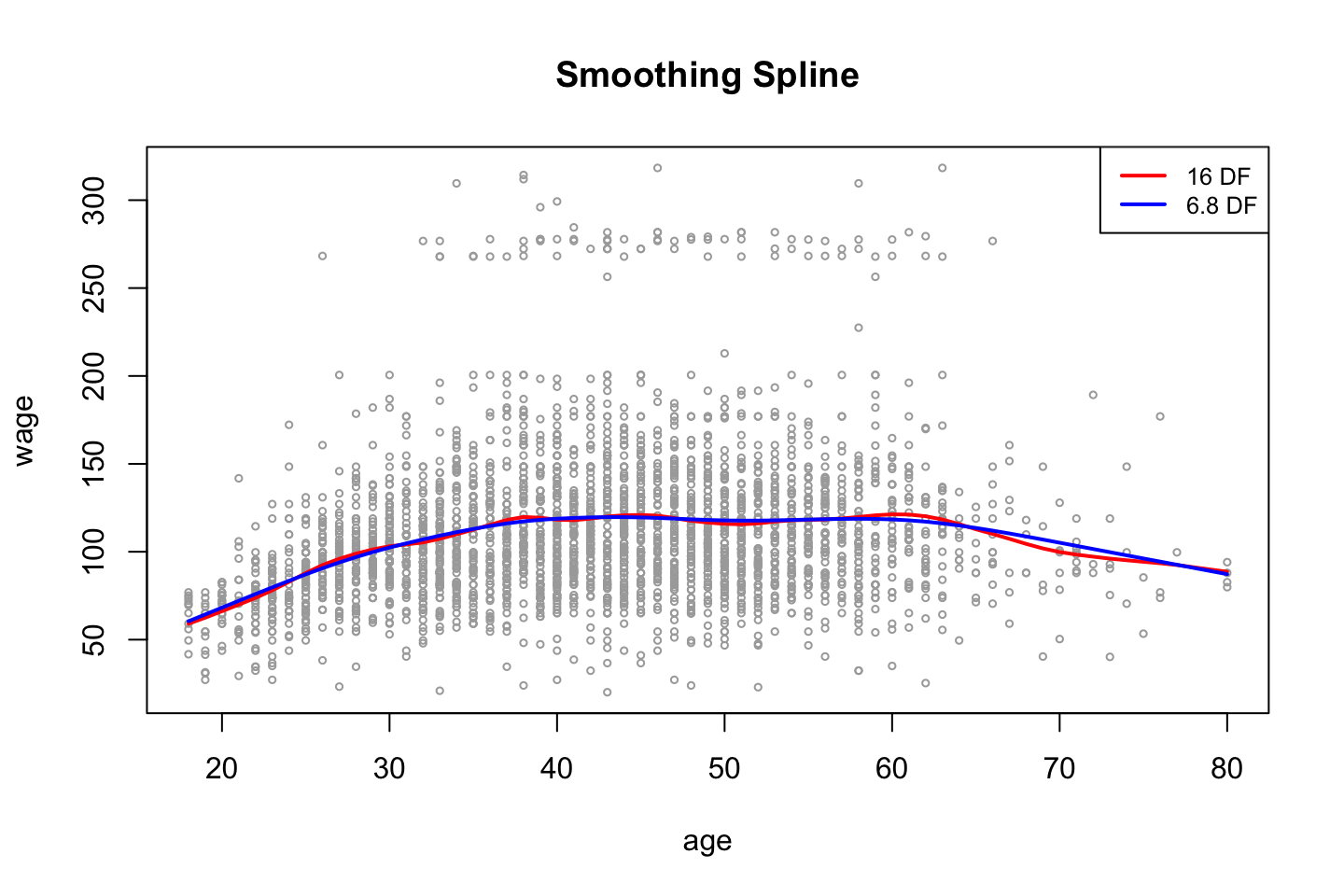

2.3.2.3 Smooth Splines

As we discovered in the first part of the chapter, it sets a knot at each observation, and then we will penalize the function with a lamda (\(\lambda\)), to avoid overfitting.

The code show the procedure.

#Hardcoding degrees of freedom

fit.ss <- smooth.spline(x = df$age,y = df$wage

,df = 16) #Remember that we must impose constraints

#Choosing smoothing param with CV

fit.ss2 <- smooth.spline (df$age

,df$wage

,cv = TRUE) #we choose cv instead of fixed amount of df

fit.ss2$df## [1] 6.794596We get sparsity hence we have degrees of freedom of 6.8. That is due to the tuning parameter which was found by the cross validation proces. We can find the specific lambda value with the following:

fit.ss2$lambda## [1] 0.02792303plot(age,wage

,xlim = agelims

,cex = .5

,col = "darkgrey")

title("Smoothing Spline")

lines(fit.ss,col = "red",lwd = 2)

lines(fit.ss2,col = "blue",lwd =2)

legend("topright",legend = c("16 DF","6.8 DF")

,col = c("red","blue")

,lty = 1

,lwd = 2

,cex = .8)

As expected, we see that the more complex model (highest amount of df) is the more flexible model.

Note: tuning parameter = \(\lambda\), where the CV seeks to choose the parameter that leads to the lowest error and return the df that leads to this level.

2.3.2.4 Local Regression

Recall that local regression makes a linear regression for the observations that are close to the observation under evaluation (\(x_0\)).

Thus we have to specify the span, the larger the span the smoother the fit, as we will include more observations.

NB: locfit library can also be used for fitting local regress

plot(x = df$age,y = df$wage

,xlim = agelims

,cex = .5

,col = "darkgrey")

title ("Local Regression")

fit.lr <- loess(wage ~ age

,span = .2 #Degree of smoothing / neighborhood to be included

,data = df)

fit.lr2 <- loess(wage ~ age

,span = .5 #Degree of smoothing / neighborhood to be included

,data = df)

lines(x = age.grid,y = predict(object = fit.lr,newdata = data.frame(age=age.grid))

,col = "red"

,lwd = 2)

lines(x = age.grid,y = predict(object = fit.lr2,newdata = data.frame(age=age.grid))

,col =" blue"

,lwd = 2)

legend(x = "topright"

,legend = c("Span = 0.2","Span = 0.5")

,col=c("red","blue")

,lty = 1

,lwd = 2

,cex = .8)

From the plot we also see that the model with the largest span has the smoothest fit.

2.3.3 GAMs

We want to predict wage, where year, age and education (as categorical) as predictors.

2.3.3.1 With only natural splines

According to the Hastie et al. (2013), 294, this is just a bunch of linear functions, hence we can merely apply lm(), see the following.

gam.m1 <- lm(wage ~ ns(year,df = 4) + ns(age,df = 5) + education #NOTICE, that we just use lm()

,data = df)

summary(gam.m1)##

## Call:

## lm(formula = wage ~ ns(year, df = 4) + ns(age, df = 5) + education,

## data = df)

##

## Residuals:

## Min 1Q Median 3Q Max

## -120.513 -19.608 -3.583 14.112 214.535

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 46.949 4.704 9.980 < 2e-16 ***

## ns(year, df = 4)1 8.625 3.466 2.488 0.01289 *

## ns(year, df = 4)2 3.762 2.959 1.271 0.20369

## ns(year, df = 4)3 8.127 4.211 1.930 0.05375 .

## ns(year, df = 4)4 6.806 2.397 2.840 0.00455 **

## ns(age, df = 5)1 45.170 4.193 10.771 < 2e-16 ***

## ns(age, df = 5)2 38.450 5.076 7.575 4.78e-14 ***

## ns(age, df = 5)3 34.239 4.383 7.813 7.69e-15 ***

## ns(age, df = 5)4 48.678 10.572 4.605 4.31e-06 ***

## ns(age, df = 5)5 6.557 8.367 0.784 0.43328

## education2. HS Grad 10.983 2.430 4.520 6.43e-06 ***

## education3. Some College 23.473 2.562 9.163 < 2e-16 ***

## education4. College Grad 38.314 2.547 15.042 < 2e-16 ***

## education5. Advanced Degree 62.554 2.761 22.654 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 35.16 on 2986 degrees of freedom

## Multiple R-squared: 0.293, Adjusted R-squared: 0.2899

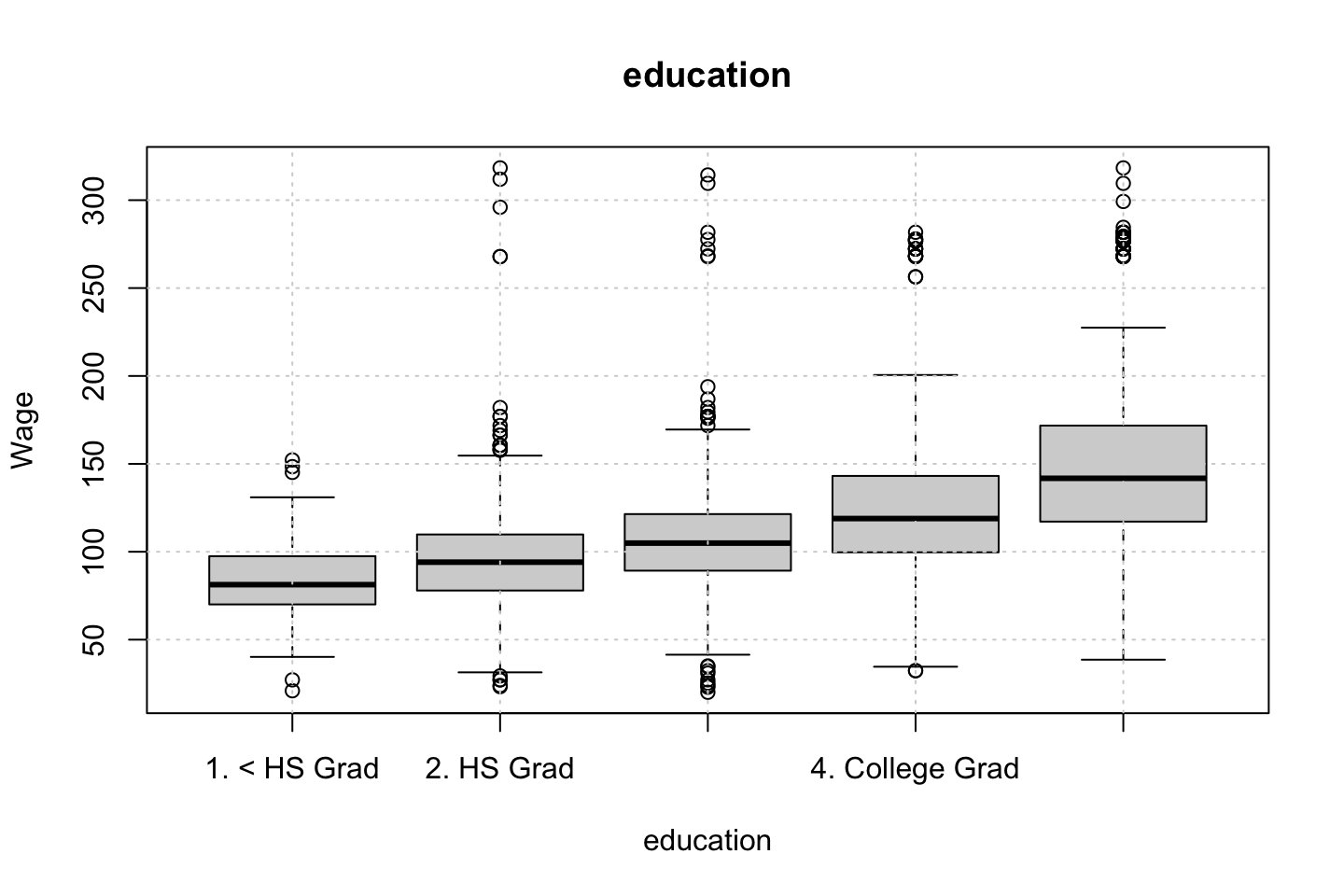

## F-statistic: 95.2 on 13 and 2986 DF, p-value: < 2.2e-16From the summary we see the variables that have been created and also the factor levels for education.

Again, we don’t have to interprete the coefficients, we just need to look at the shape.

2.3.3.2 With different splines

Now we have to apply the package gam.

This is the best approach.

library(gam)We can also construct a GAM model, that contains smoothing splines, that is done by calling s(). Where year and age will be included with up to 4 and 5 degrees of freedom.

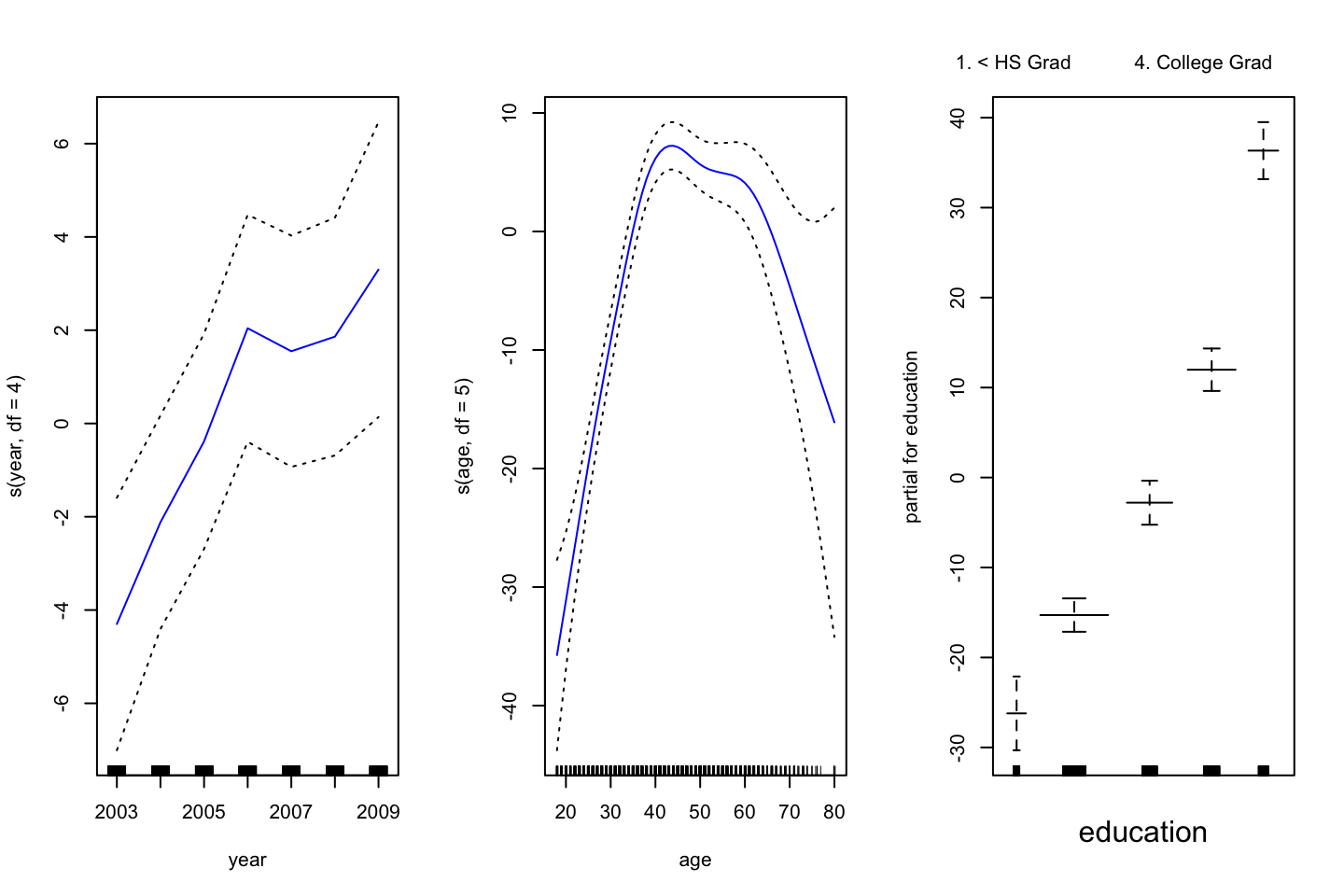

gam.m3 <- gam(wage ~ s(year,df = 4) + s(age,df = 5) + education

,data = df)Remember, that GAM fits each variable while holding all other variables fixed. The actual fitting procedure is called backfitting, and fits variables by repeatedly updating the fit for each predictor (Hastie et al. 2013, 284–85). Hence, we create plots to interprete how.

par(mfrow = c(1,3))

plot(gam.m3 #Note, automatically identifies the GAM object, hence plots for each variable

,se = TRUE

,col ="blue")

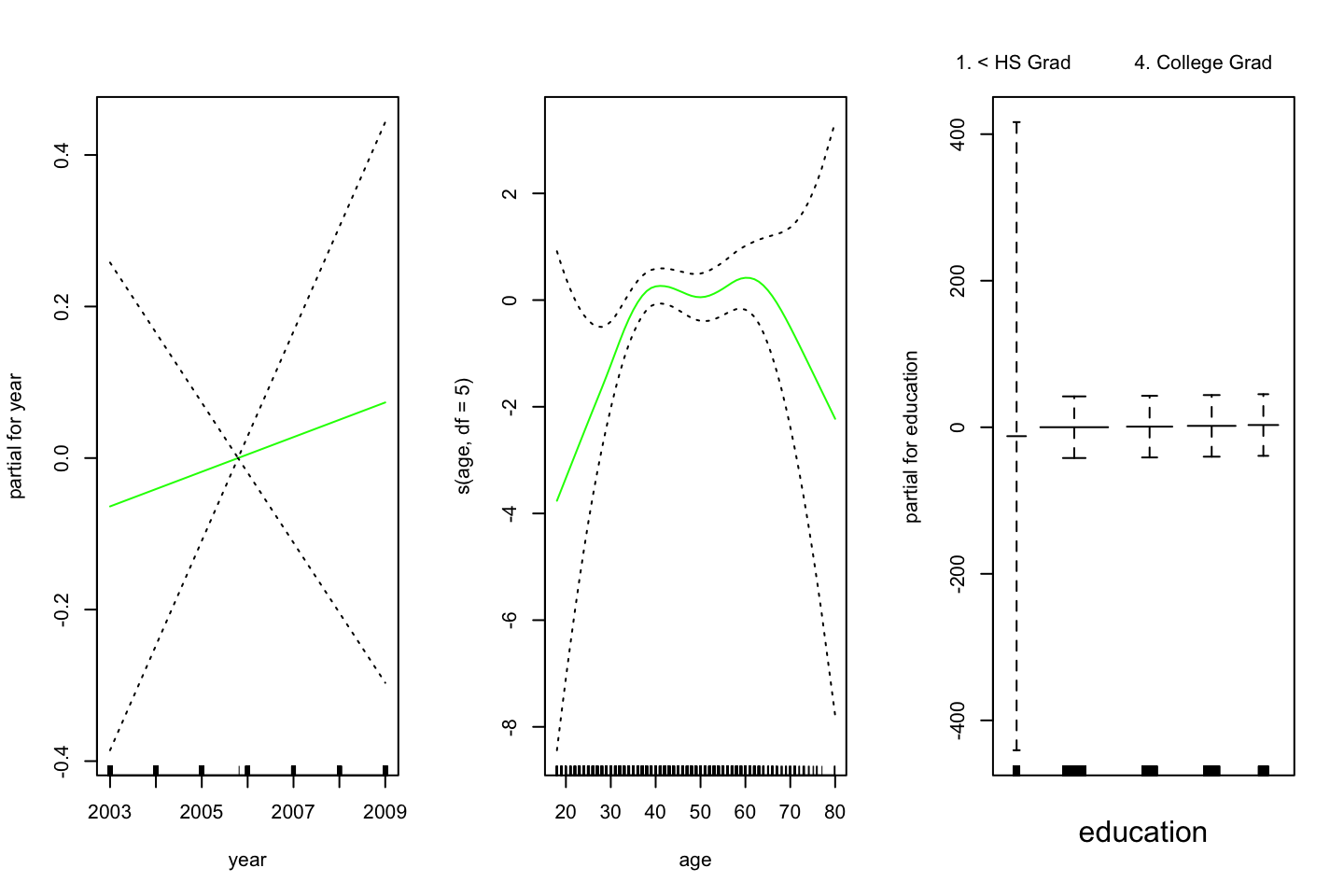

(#fig:GAMPlotLab7.8.3)GAM plot and intepretation

Interpreting the plot: Recall that the plots assumes that we hold the other variables fixed, hence we see the following:

- Left: We see that holding education and age fixed, the wage tends to increase over the years, that is quite natural, e.g., because of inflation.

- Center: Holding year and education fixed, we see that the wage tends to be highest in the middle region around 40-45 years of age. That is also quite intuitive that the wage first increase and then decreasing as the person gets closer to the retirement age.

- Right: Holding year and age fixed, we see that the higher education you have, the higher will your wage be.

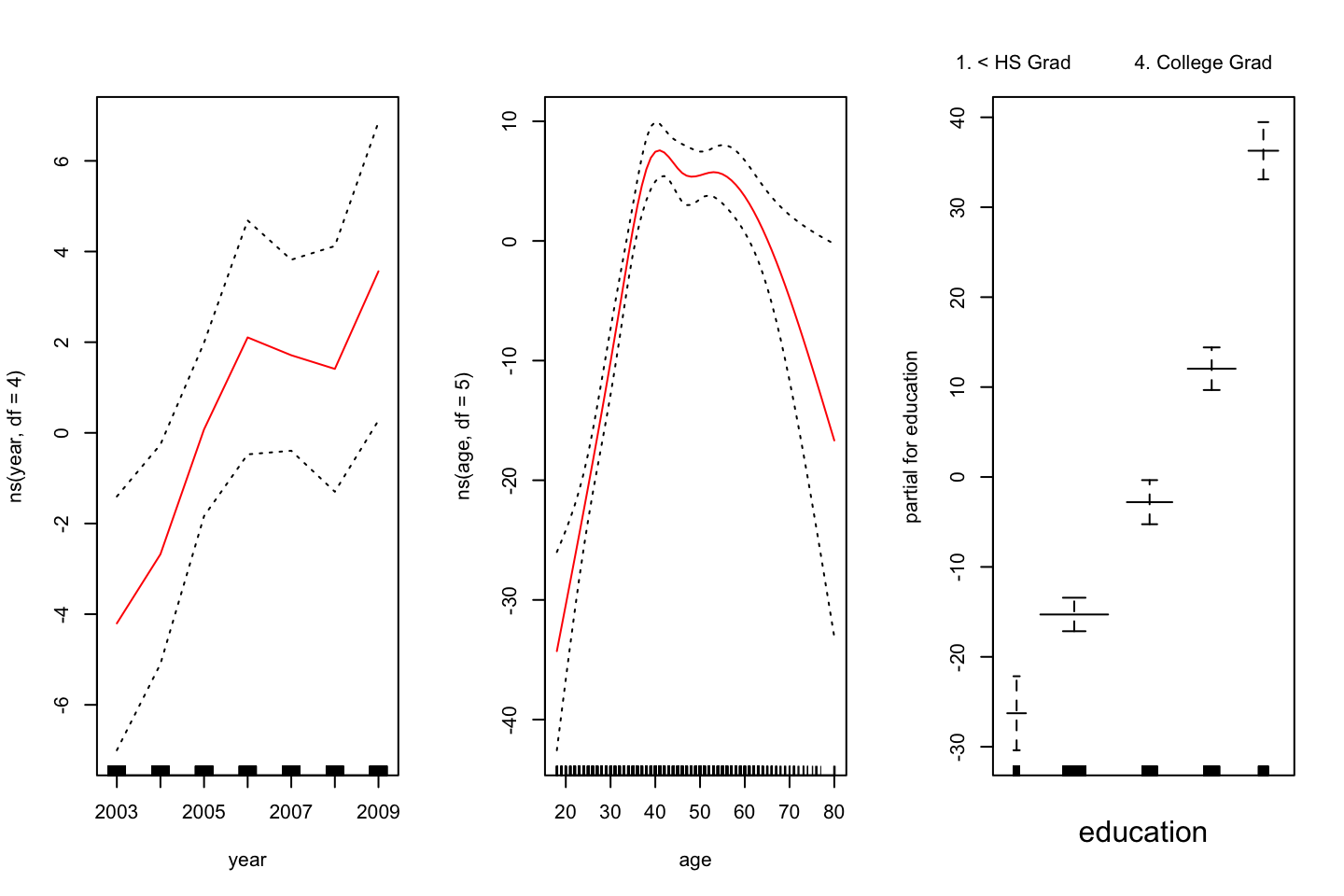

par(mfrow = c(1,3))

plot.Gam(gam.m1

,se = TRUE

,col = "red")

Figure 2.3: GAM of natural splines

Notice, that this plot looks very similar to @(fig:GAMPlotLab7.8.3).

This command could naturally also be used for the other GAM object, it is just that plot() does not automatically identify, that it is in fact intended to be interpretet as a GAM.

2.3.3.3 But what variables to include?

It looks as is year is rather linear. To make this assessment, we can apply an ANOVA test of the different combinations. Hence:

Note, the first model is nested in the second model (has the same variables), hence we can use ANOVA

#Excluding year

gam.m1 <- gam(wage ~ s(age,df = 5) + education

,data = df)

#Including year, but as a linear

gam.m2 <- gam(wage ~ year + s(age,df = 5) + education

,data = df)

anova(gam.m1,gam.m2,gam.m3,test = "F")| Resid. Df | Resid. Dev | Df | Deviance | F | Pr(>F) |

|---|---|---|---|---|---|

| 2990 | 3711731 | NA | NA | NA | NA |

| 2989 | 3693842 | 1.000000 | 17889.243 | 14.477130 | 0.0001447 |

| 2986 | 3689770 | 2.999989 | 4071.134 | 1.098212 | 0.3485661 |

We see that performance is significantly better going from model 1 to model 2, but on a five percent level, we are able to say, that we don’t gain anything with the third model, which is most complex model.

Thus, the linear constellation of year, with polynomials on age + education as factors, appear to be the best performing model.

With this in mind, it is interesting to assess the summary of the complex model:

summary(gam.m3)##

## Call: gam(formula = wage ~ s(year, df = 4) + s(age, df = 5) + education,

## data = df)

## Deviance Residuals:

## Min 1Q Median 3Q Max

## -119.43 -19.70 -3.33 14.17 213.48

##

## (Dispersion Parameter for gaussian family taken to be 1235.69)

##

## Null Deviance: 5222086 on 2999 degrees of freedom

## Residual Deviance: 3689770 on 2986 degrees of freedom

## AIC: 29887.75

##

## Number of Local Scoring Iterations: NA

##

## Anova for Parametric Effects

## Df Sum Sq Mean Sq F value Pr(>F)

## s(year, df = 4) 1 27162 27162 21.981 0.000002877 ***

## s(age, df = 5) 1 195338 195338 158.081 < 2.2e-16 ***

## education 4 1069726 267432 216.423 < 2.2e-16 ***

## Residuals 2986 3689770 1236

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Anova for Nonparametric Effects

## Npar Df Npar F Pr(F)

## (Intercept)

## s(year, df = 4) 3 1.086 0.3537

## s(age, df = 5) 4 32.380 <2e-16 ***

## education

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1Looking at the section: “Anova for Nonparametric Effects,” we see that the smoothing spline on year, is not significant, hence it supports the conclusion from above, that we are better off, including the year as a linear variable.

Now we can make predictions.

#Predictions with linear year, non linear age and factors of education

preds <-predict(gam.m2

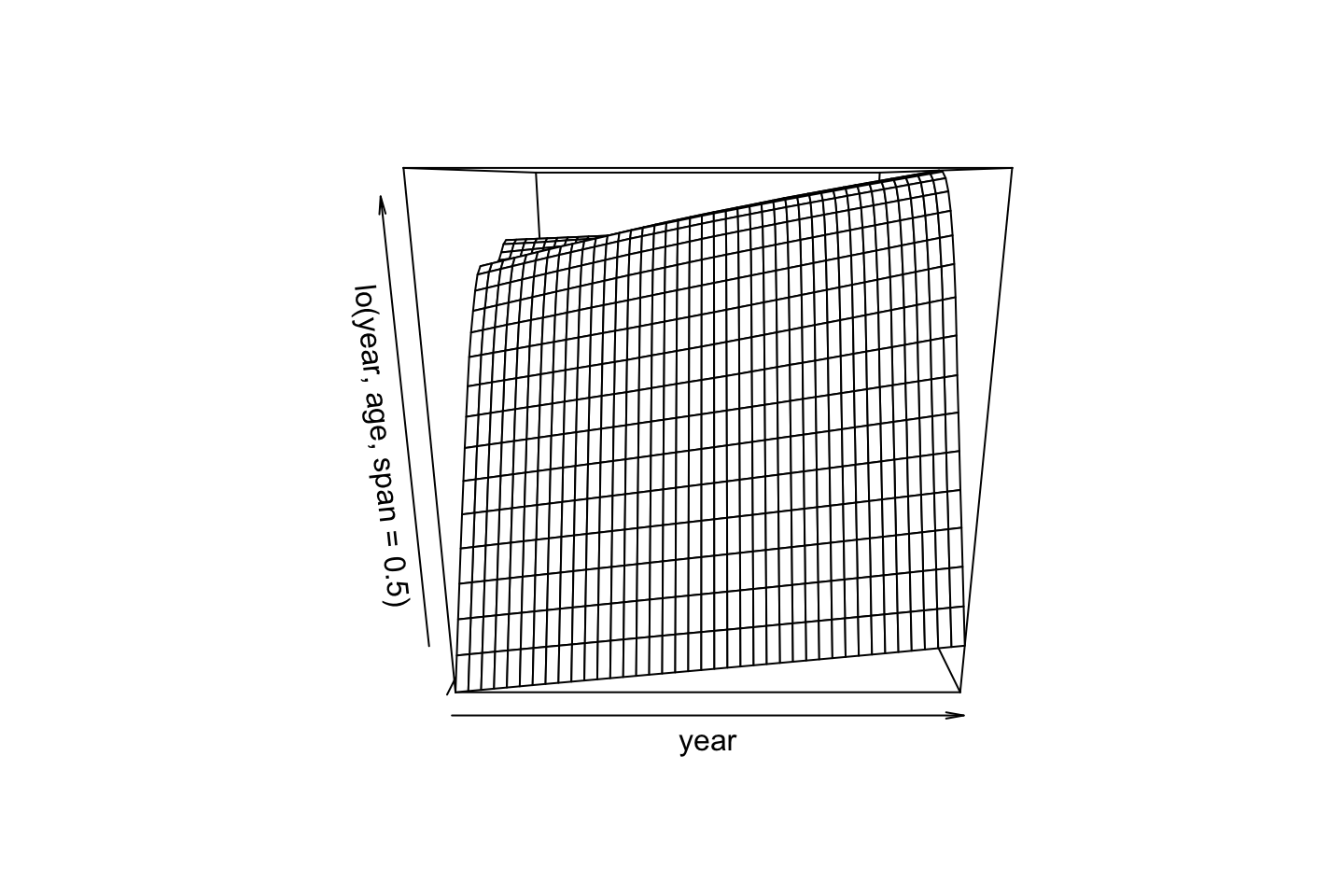

,newdata = df)2.3.3.4 GAM with local regression

We are also able to make GAM on other building blocks, for instance local regression, that will be shown in the following

For some reason the following cant be run.

#GAM with local regression

gam.lo <- gam(wage ~ s(df$year,df = 4) + lo(df$age,span = 0.7) + education

,data = df)

# plot.Gam(gam.lo #For some reason it cant be plotted

# ,se = TRUE

# ,col = "green")Making interactions in the local regression:

gam.lo.i <- gam(wage ~ lo(year,age,span = 0.5) + education

,data = df)

library(akima)

plot(gam.lo.i)

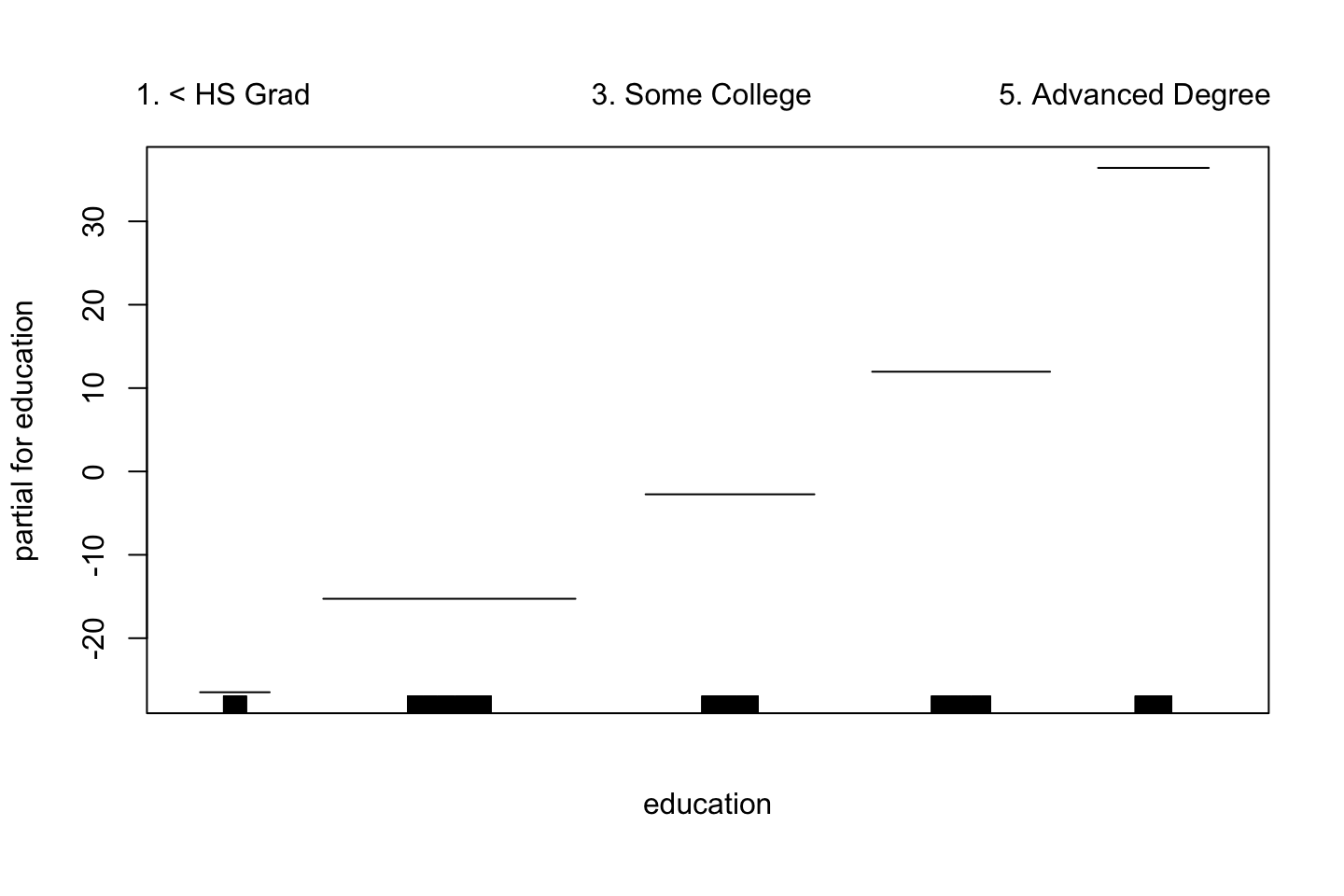

2.3.3.5 Logistic Regression

Plotting logistic regression GAM, here we can apply I() as previous used, to make the expression on the fly.

gam.lr <- gam(I(wage > 250) ~ year + s(age,df = 5) + education

,family = binomial

,data = df)

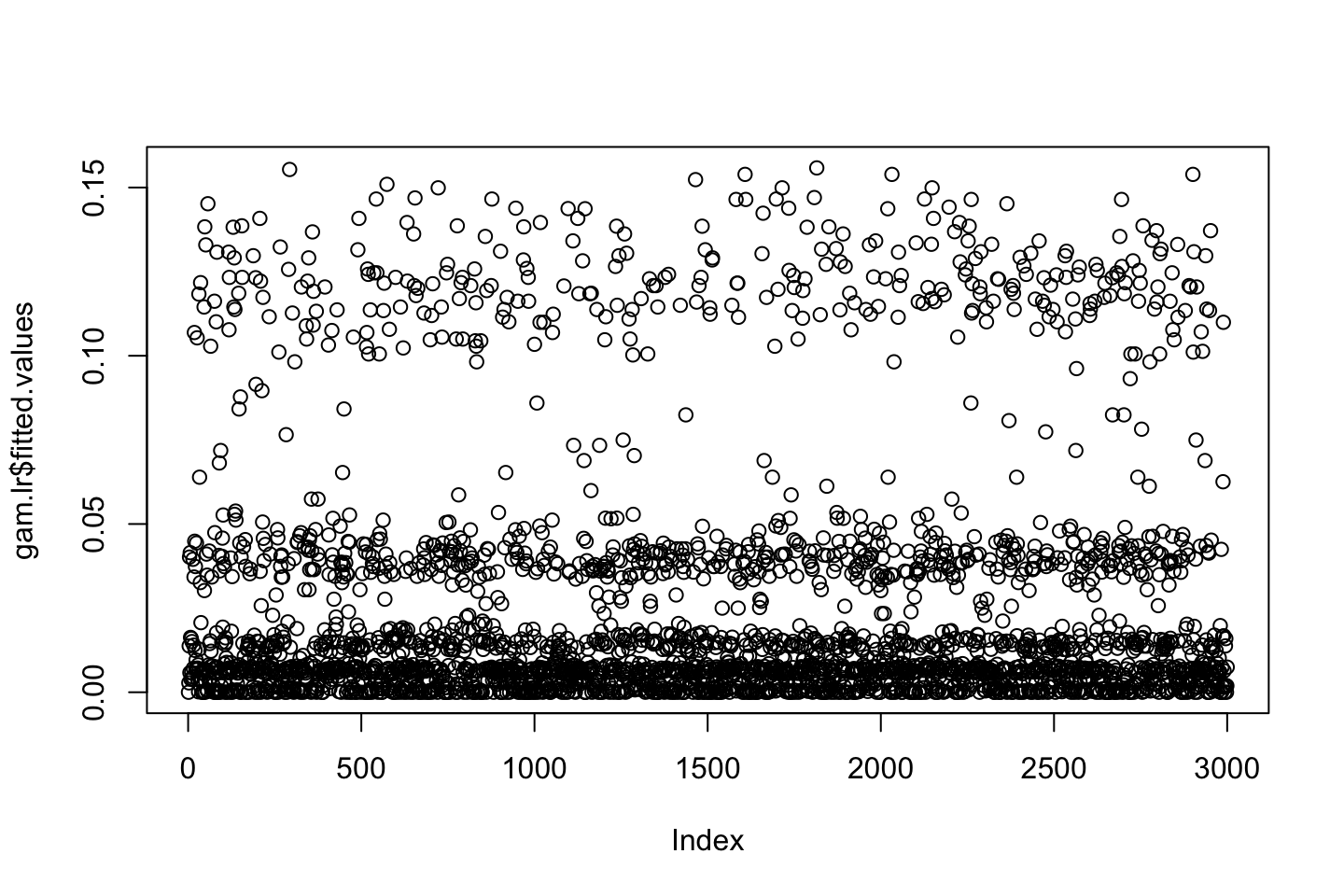

par(mfrow =c(1,3))

plot(gam.lr,se=T,col =" green ")

One could interprete the plot and assess each window to see how the variable influence the decision wether the observation is above or below. Remember that the outcome can be seen as probabilities, these can also be plotted to be shown the spread:

par(mfrow = c(1,1))

plot(gam.lr$fitted.values)

From th plot, we see that there is a tendency that the lower the education the lower the wage, the following table show how the high earners are distributed.

table(education,I(wage > 250))##

## education FALSE TRUE

## 1. < HS Grad 268 0

## 2. HS Grad 966 5

## 3. Some College 643 7

## 4. College Grad 663 22

## 5. Advanced Degree 381 45We see that there are no people with less than high school degree that earns more than 250.

To get more sensible result, we can remove the observations with a low degree, this will also show a more sensible result for the other degrees, see the following.

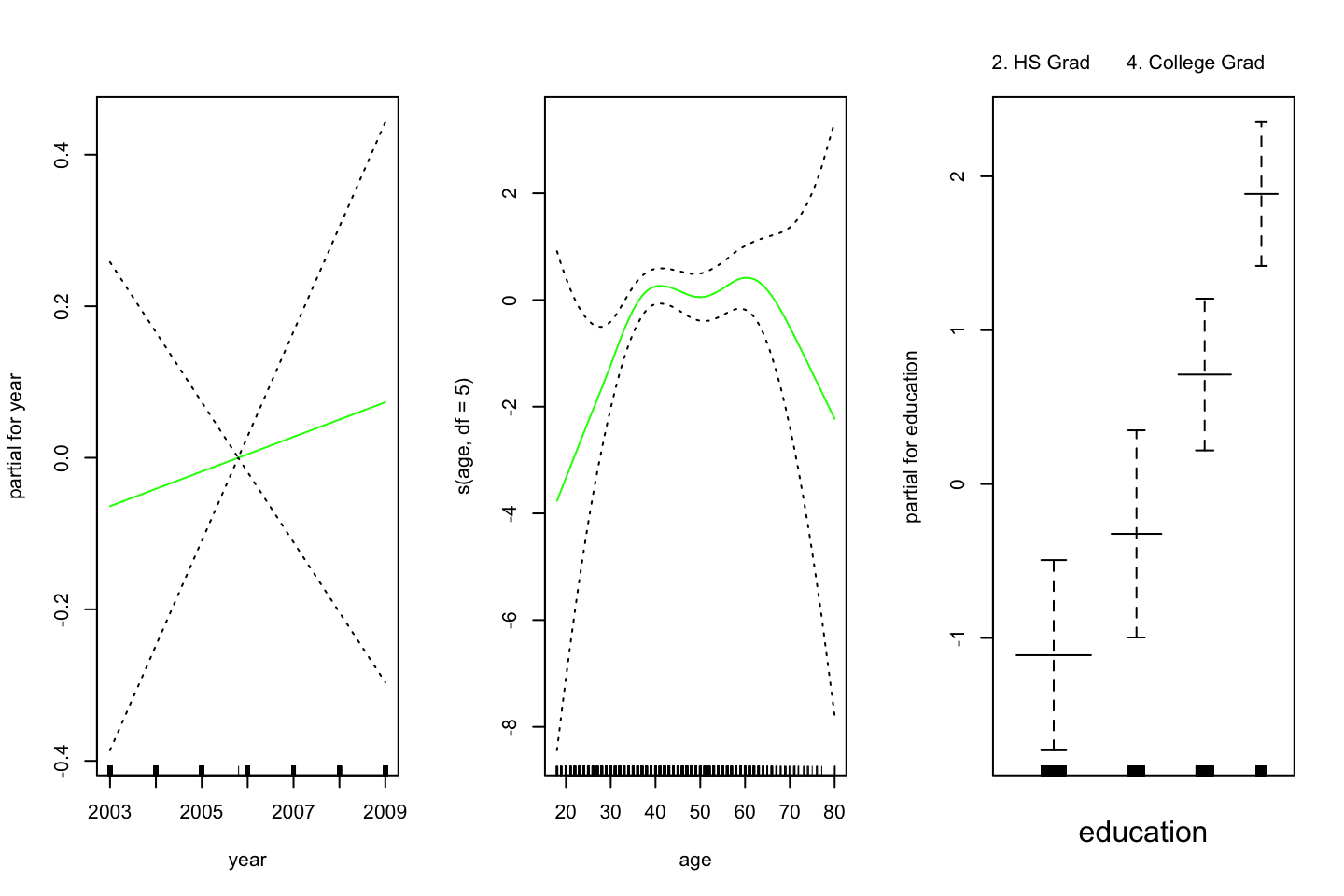

gam.lr.s = gam(I(wage > 250) ~ year + s(age,df = 5) + education

,family = binomial

,data = df

,subset = (education != "1. < HS Grad")) #removing people in the lowest group of education.

par(mfrow = c(1,3))

plot(gam.lr.s

,se = TRUE #Standard errors

,col =" green ")

Do we need a nonlinear term for year? Use anova for comparing the previous model with a model that includes a smooth spline of year with df=4

We can do an ANOVA, but please notice, we use Chi Square now.

gam.y.s = gam(I(wage>250) ~ s(year, 4) + s(age,5) + education,family=binomial,data = df,subset=(education!="1. < HS Grad"))

anova(gam.lr.s,gam.y.s, test="Chisq") # Chi-square test as Dep variable is categorical| Resid. Df | Resid. Dev | Df | Deviance | Pr(>Chi) |

|---|---|---|---|---|

| 2722 | 602.4588 | NA | NA | NA |

| 2719 | 601.5718 | 2.999982 | 0.8869514 | 0.8285731 |

We do not need a non-linear term for year.

2.4 Exercises

2.4.1 Exercise 6

Purpose, to practice polynomial regression and step functions

library(ISLR)

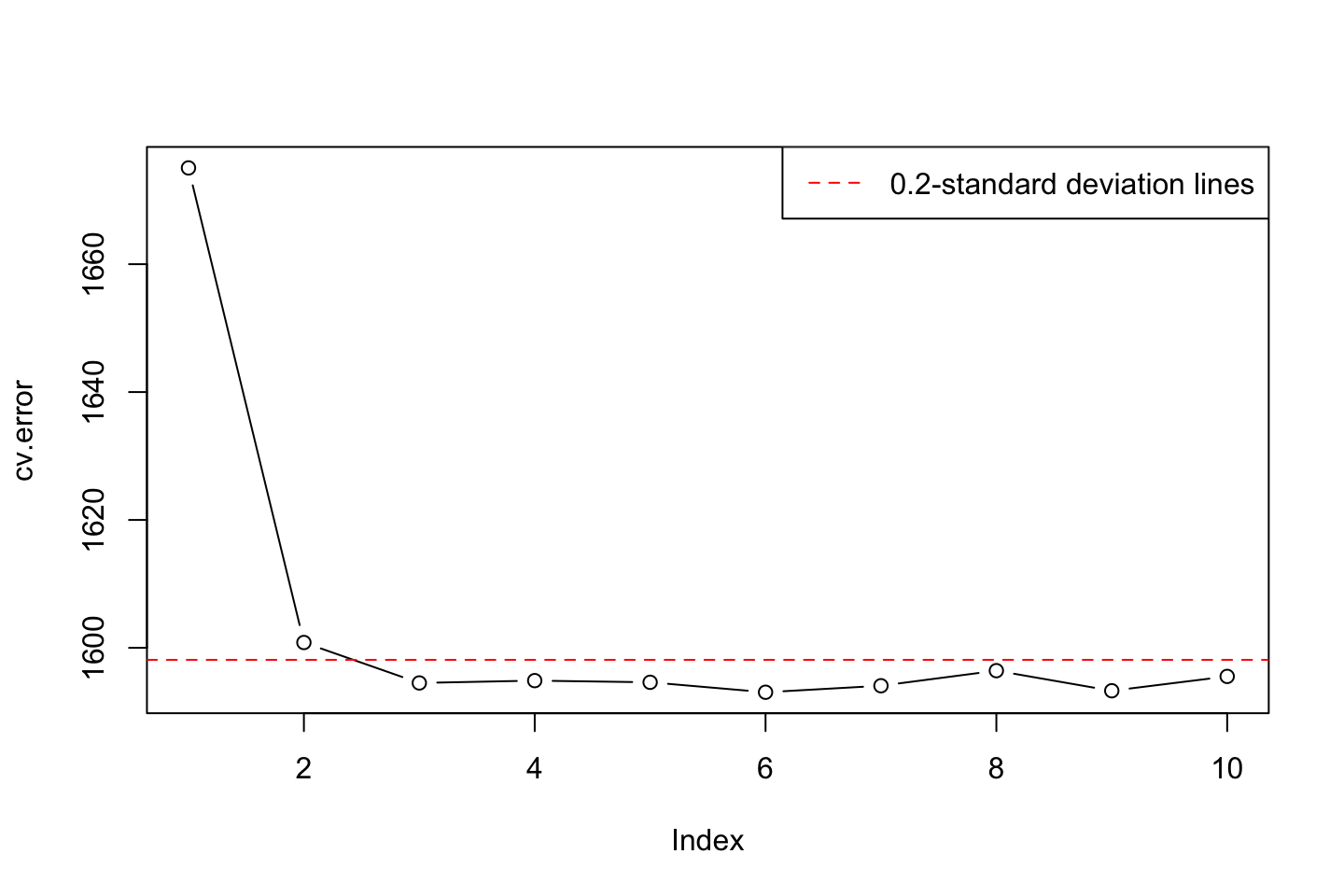

df <- Wage2.4.1.1 6.a Polynomial Regression

We use orthogonal polynomials in the modeling process as we know that these are slightly better than raw polynomials due to the fact that this tend to avoid collinearity.

Training the model

library(boot) #For the cv.glm() function

set.seed(1337)

cv.error = rep (0,10)

for (i in seq(from = 1,to = length(cv.error),by = 1)) {

#Training

fit.i <- glm(wage ~ poly(age,i),data = df) # notice glm here in conjunction with cv.glm function

#Performing cross validation

cv.error[i] <- cv.glm(data = df,glmfit = fit.i,K = 10)$delta[1] #K fold CV, delta = prediction errer i.e. MSE

}

#Printing the

cv.error # MSE the CV errors of the five polynomials models## [1] 1675.056 1600.832 1594.505 1594.872 1594.608 1593.053 1594.069 1596.428

## [9] 1593.284 1595.530The vector above are all of the prediction errors computed in the loop.

which.min(cv.error)## [1] 6We see that the fifth prediction appear to yield the lowest MSE, but is it significantly different than e.g. forth or third order polynomial?

fit.1 <- glm(wage ~ poly(age,1),data = df)

fit.2 <- glm(wage ~ poly(age,2),data = df)

fit.3 <- glm(wage ~ poly(age,3),data = df)

fit.4 <- glm(wage ~ poly(age,4),data = df)

fit.5 <- glm(wage ~ poly(age,5),data = df)

anova(fit.1,fit.2,fit.3,fit.4,fit.5,test = "F")| Resid. Df | Resid. Dev | Df | Deviance | F | Pr(>F) |

|---|---|---|---|---|---|

| 2998 | 5022216 | NA | NA | NA | NA |

| 2997 | 4793430 | 1 | 228786.010 | 143.5931074 | 0.0000000 |

| 2996 | 4777674 | 1 | 15755.694 | 9.8887559 | 0.0016792 |

| 2995 | 4771604 | 1 | 6070.152 | 3.8098134 | 0.0510462 |

| 2994 | 4770322 | 1 | 1282.563 | 0.8049758 | 0.3696820 |

We can only use these as the models are nested as the variables are the same

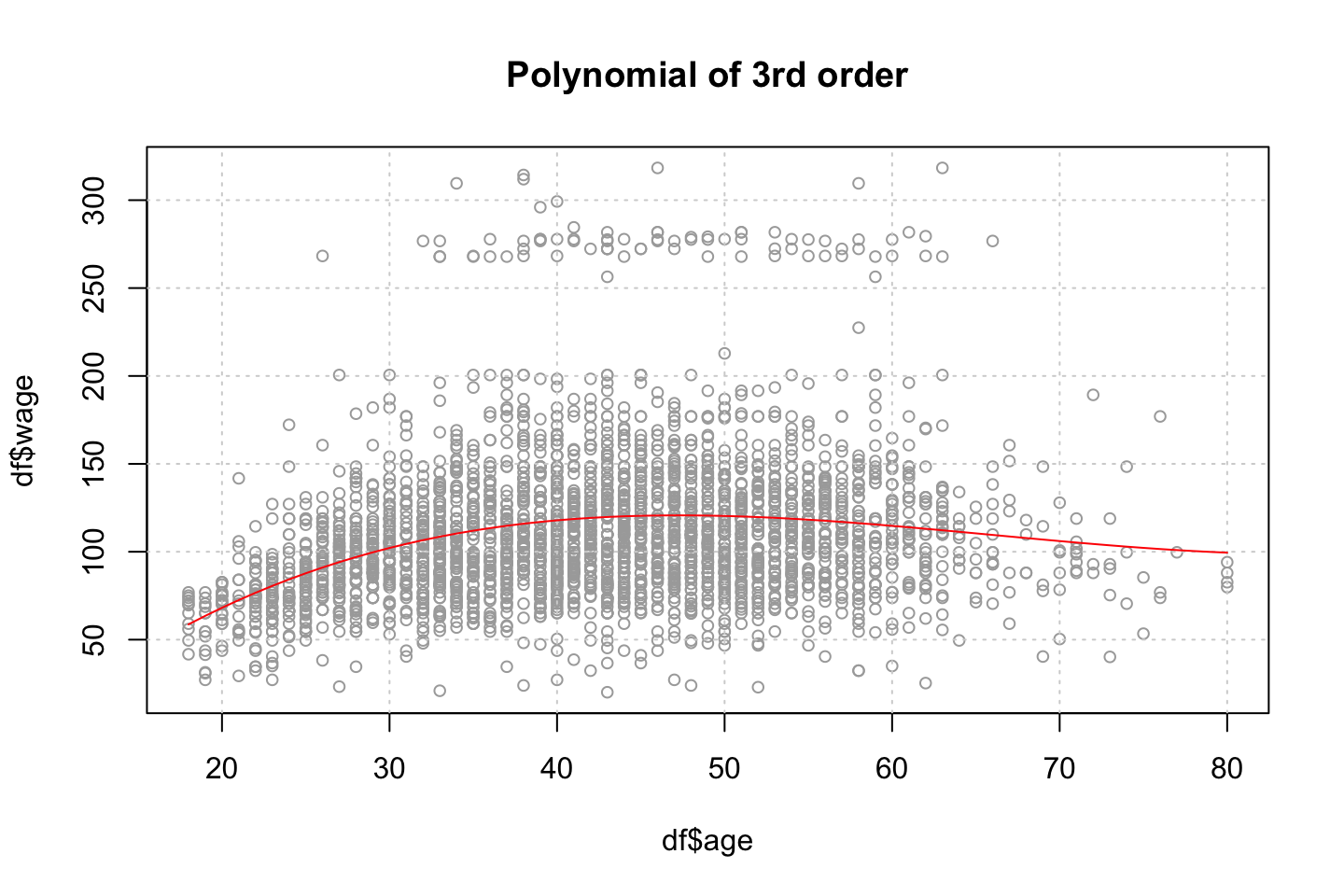

Using the F test, we see that on a five percent level the 4th polynomial is not justified, but close to. This argues that we should select the third order of polynomials as that is the last where there is statistical evidence for lowering the residuals.

Thus we select a model with three polynomials. Plotting the errors, we also see that there does not happen much after the third polynomial. We also plotted the standard errors and thus we are able to select based on this.

plot(cv.error,type = "b")

min.point = min(cv.error)

sd.points = sd(cv.error)

abline(h=min.point + 0.2 * sd.points, col="red", lty="dashed") #0.2 is just a rule of thumb, could be anything

abline(h=min.point - 0.2 * sd.points, col="red", lty="dashed")

legend("topright", "0.2-standard deviation lines", lty="dashed", col="red")

Thus, there is even more information supporting selecting three degrees of freedom.

Plotting the polynomial regression

This is done with the following procedure:

- Make a grid counting IDV (Age)

- Make predictions

- Make a plot with the variables

- Fit a line onto the predictions

- Perhaps calculate confidence levels and plot these

#Grid of X

age.grid <- seq(from = min(df$age),to = max(df$age),by = 1)

#Predictions

preds <- predict(object = fit.3

,newdata = list(age = age.grid) #Renaming age.grid to age

,se.fit = TRUE) #We want to produce confidence levels

#Plotting

plot(x = df$age,y = df$wage,col = "darkgrey",cex = 0.8)

grid()

lines(x = age.grid #We need to define the grid, otherwise the fit will not be alligned with the data

,y = preds$fit

,col = "red")

title("Polynomial of 3rd order")

2.4.1.2 6.b Step function

cuts <- 4

#Cutting the x variable

table(cut(df$age

,breaks = cuts))##

## (17.9,33.5] (33.5,49] (49,64.5] (64.5,80.1]

## 750 1399 779 72 #' Note, this only shows where the cuts lie and how many there are in each

#Fitting the step function

fit.step <- lm(wage ~ cut(df$age,4)

,data = df)

coef(summary(fit.step))## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 94.158392 1.476069 63.789970 0.000000e+00

## cut(df$age, 4)(33.5,49] 24.053491 1.829431 13.148074 1.982315e-38

## cut(df$age, 4)(49,64.5] 23.664559 2.067958 11.443444 1.040750e-29

## cut(df$age, 4)(64.5,80.1] 7.640592 4.987424 1.531972 1.256350e-01We see that the the first cut (bin with people up to 33,5) have been left out. That is because they are contained in the intercept.

Now we can fit the step function

library(stats)

#Predictions

preds <- predict(object = fit.step

,newdata = list(age = age.grid)) #Renaming age.grid to age

#Plotting

# plot(x = df$age,y = df$wage,col = "darkgrey",cex = 0.8)

# grid()

# lines(age.grid

# ,preds

# ,col = "red")

# title("Step function of 3rd order")I need to check what she is doing, one could perhaps manually order the

2.4.2 Exercise 7

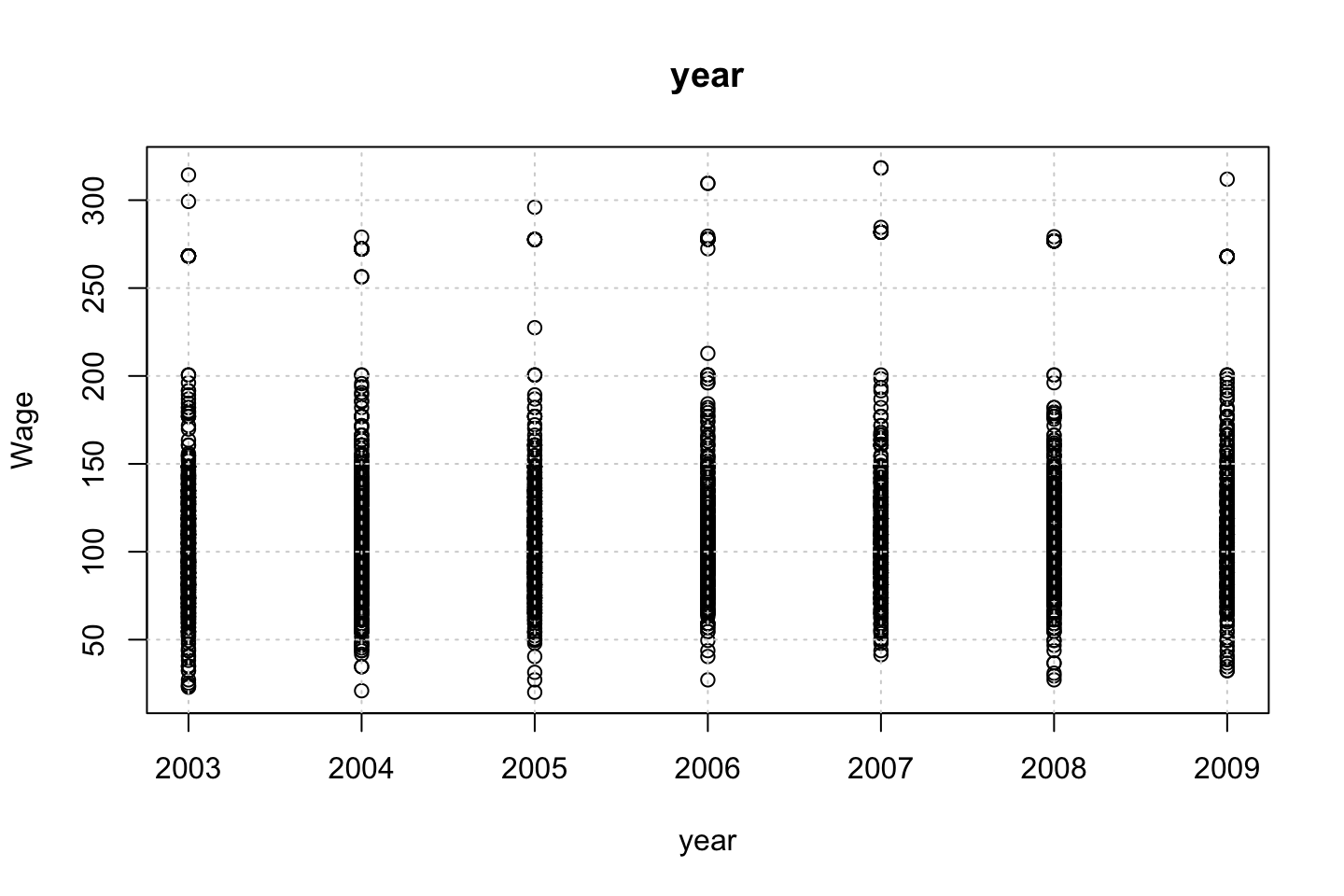

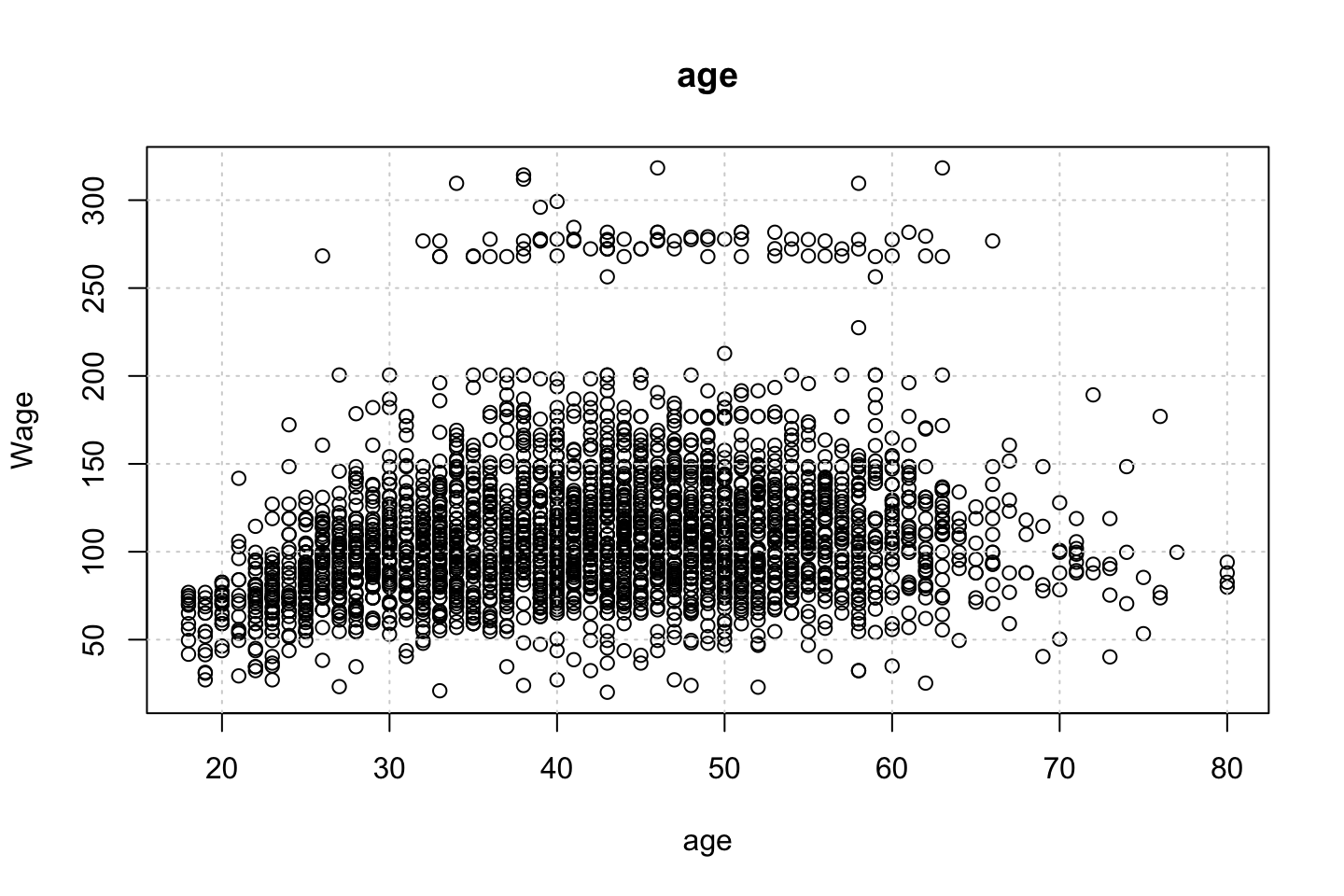

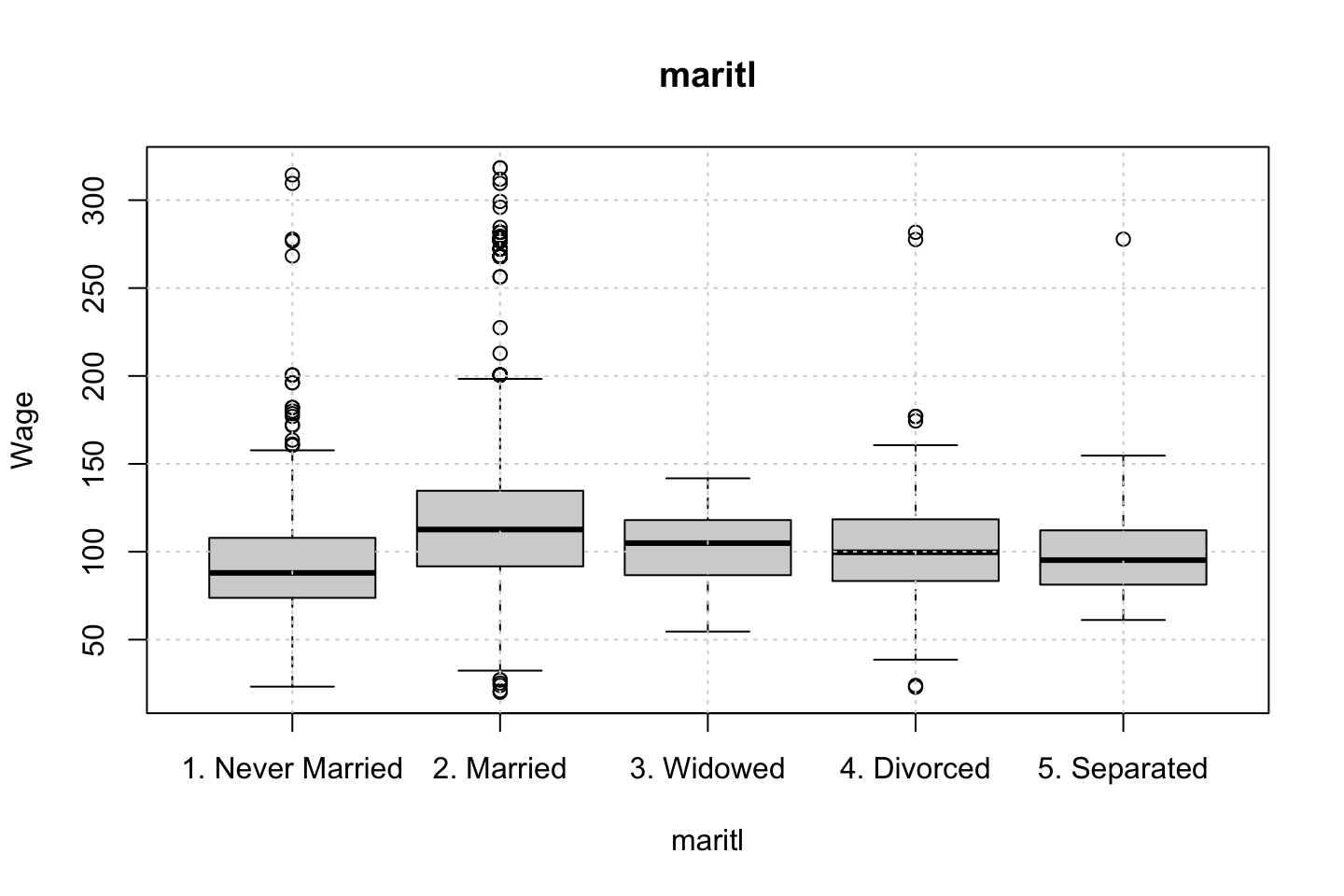

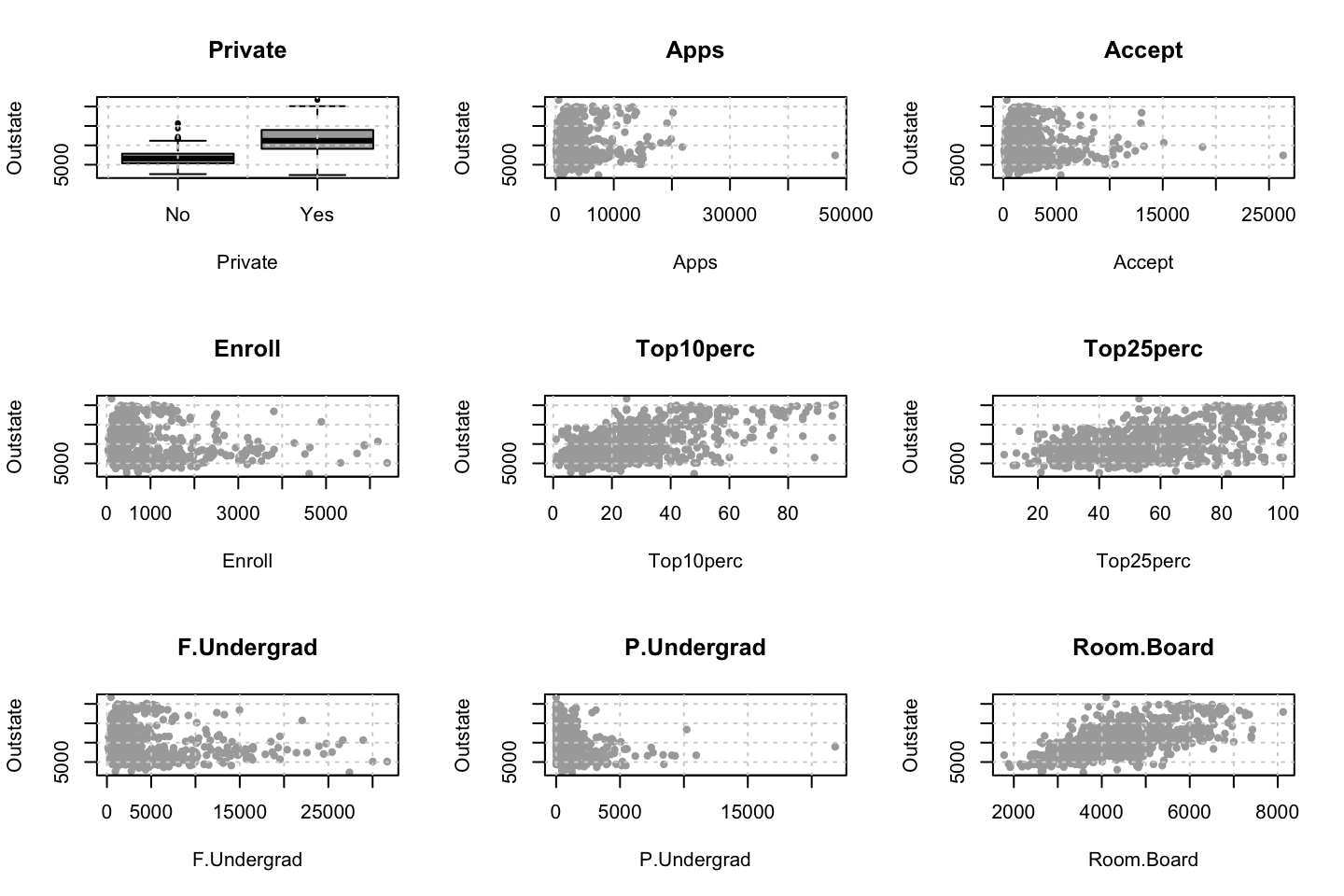

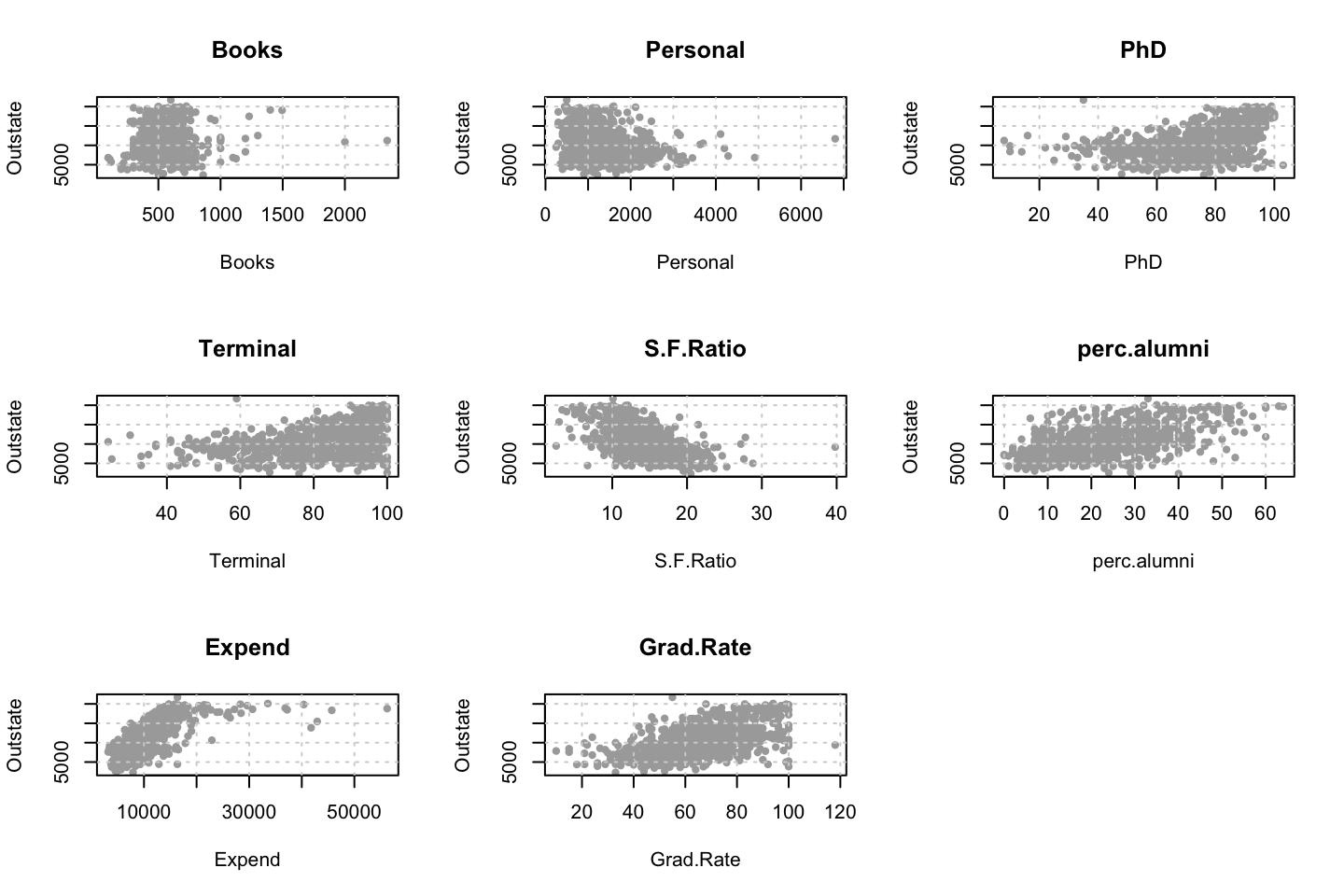

df <- WageEvaluating features other features to see how age respond hereon.

We can plot the variables agains each other, to see how they interact.

library(dplyr)

for (i in 1:10) {

plot(y = df$wage,x = df[,i],xlab = names(df)[i],ylab = "Wage")

grid()

names(df)[i] %>% title()

}

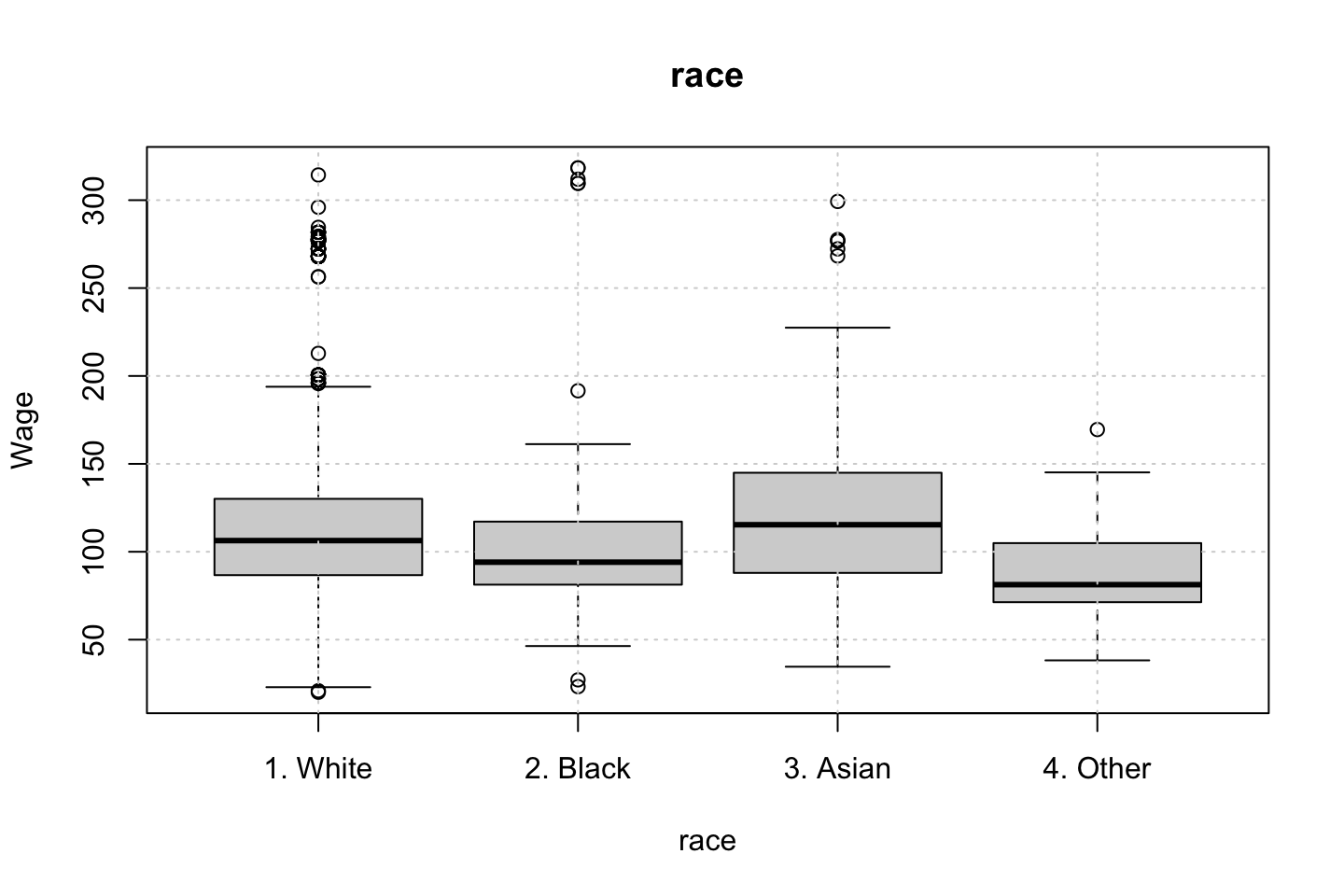

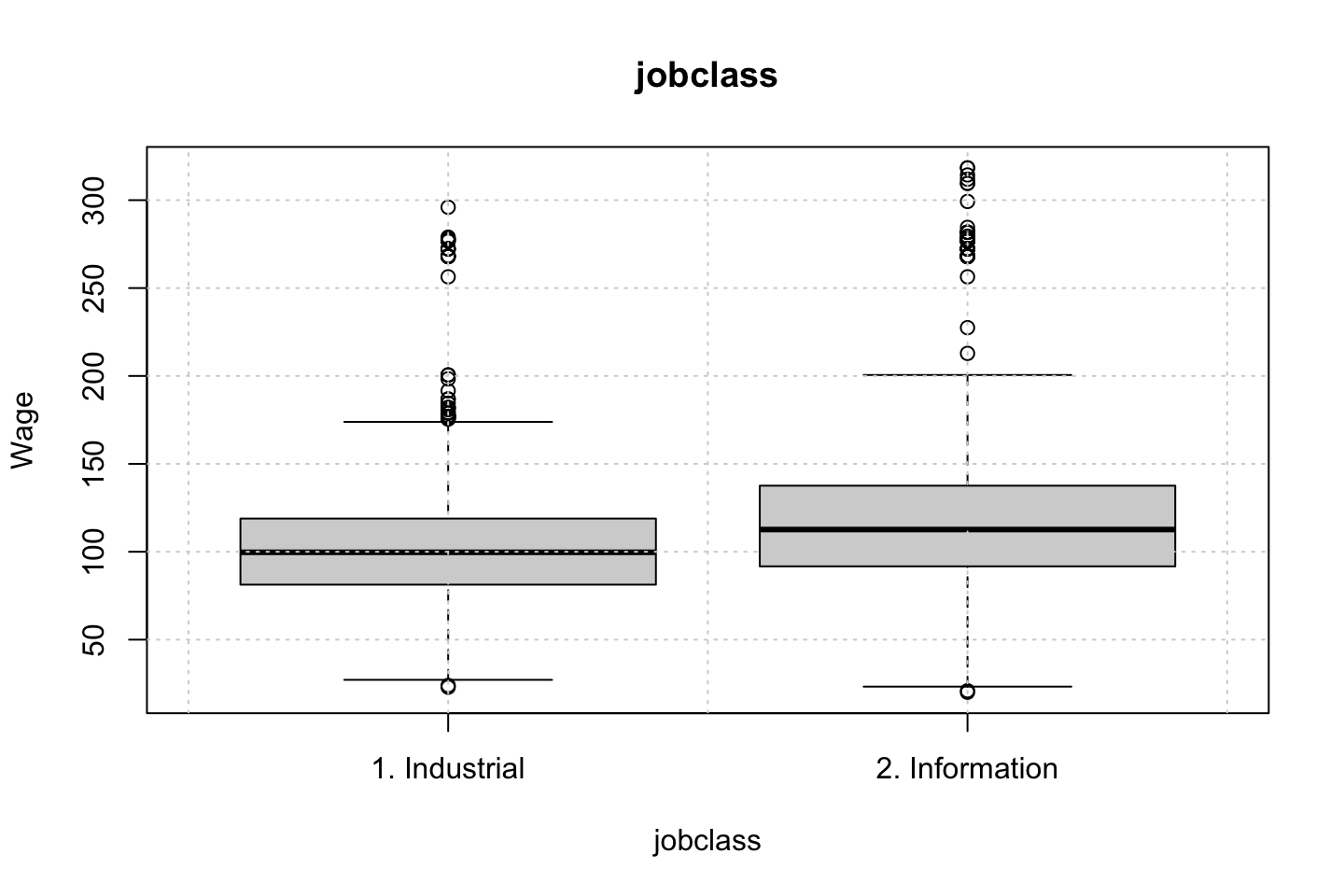

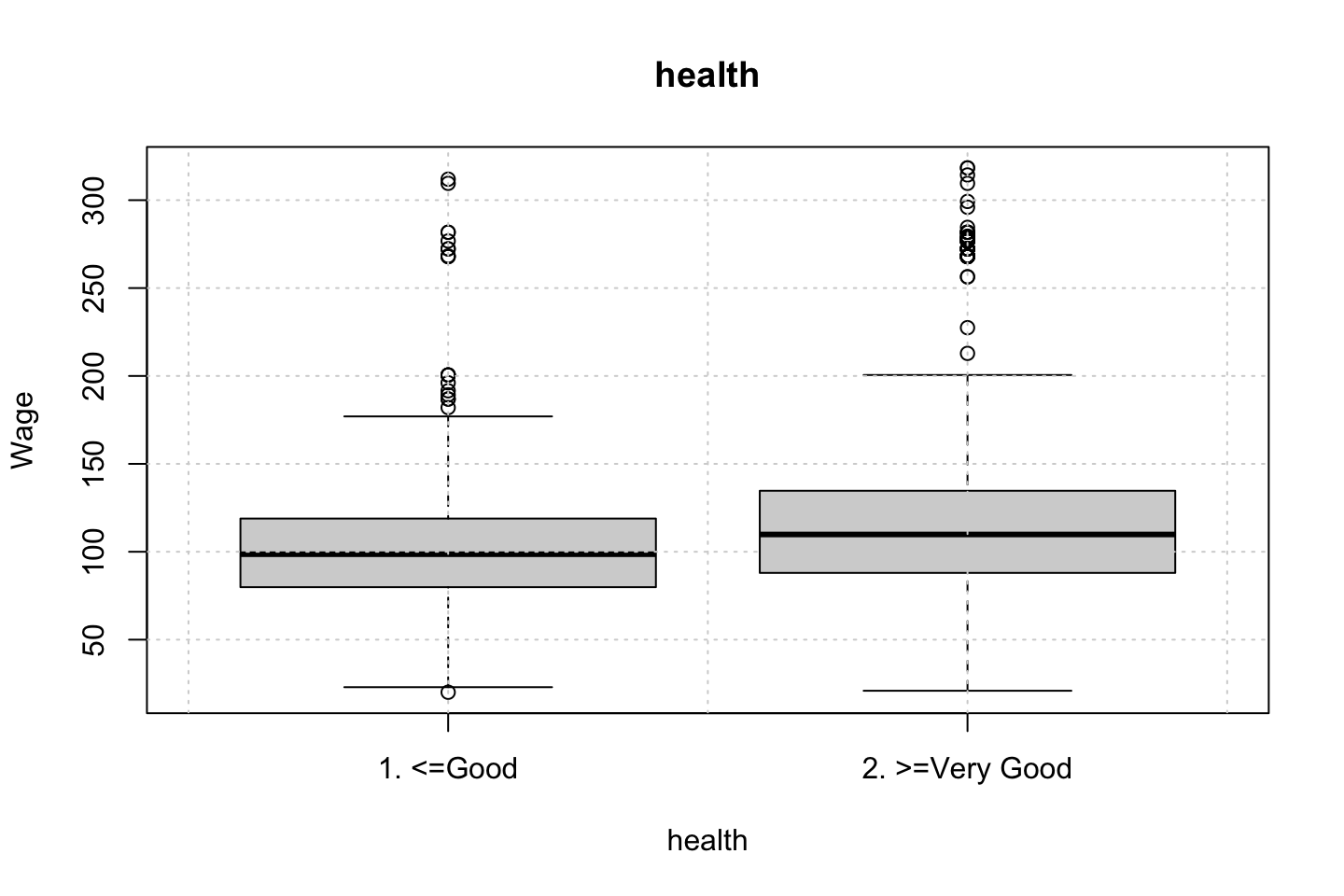

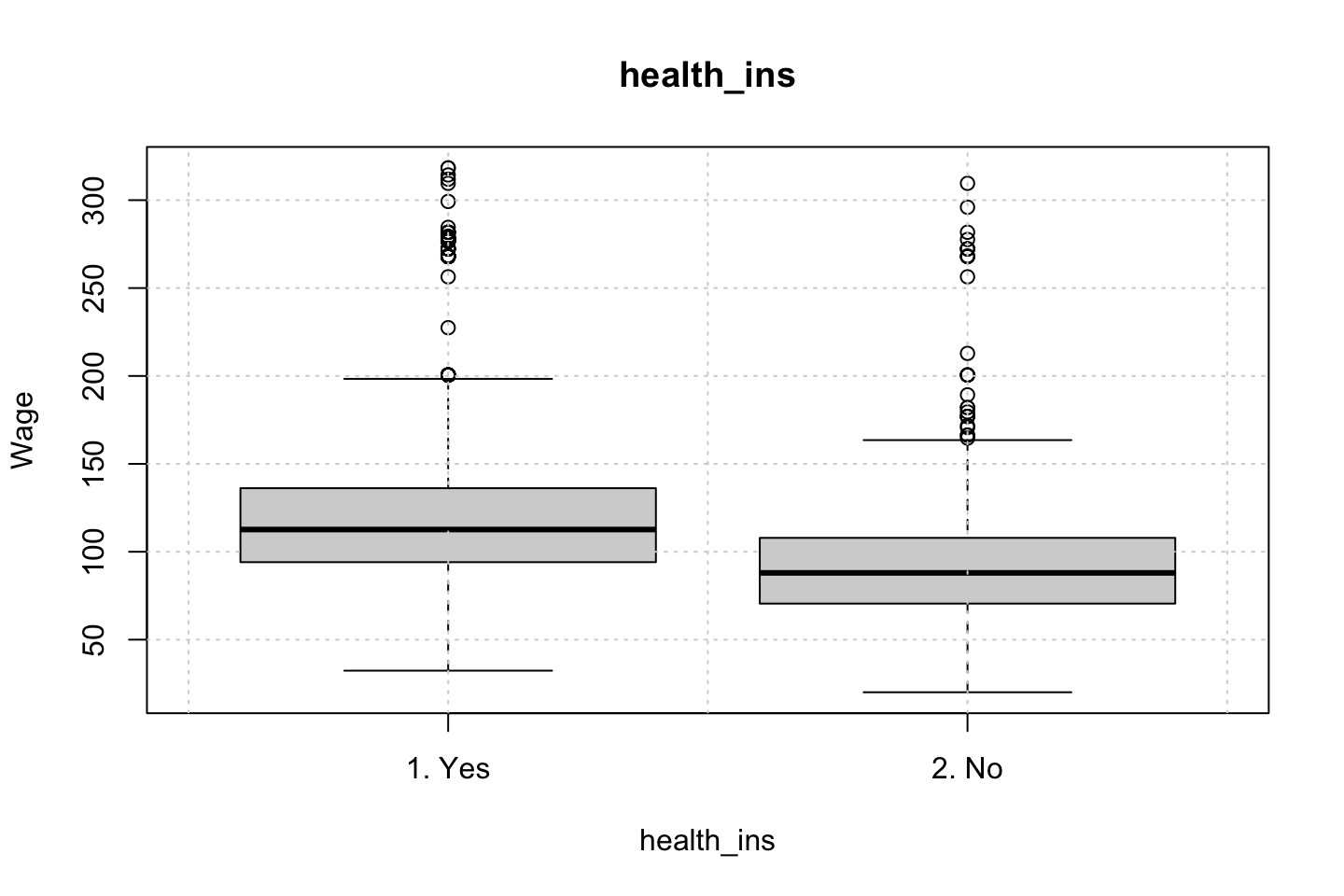

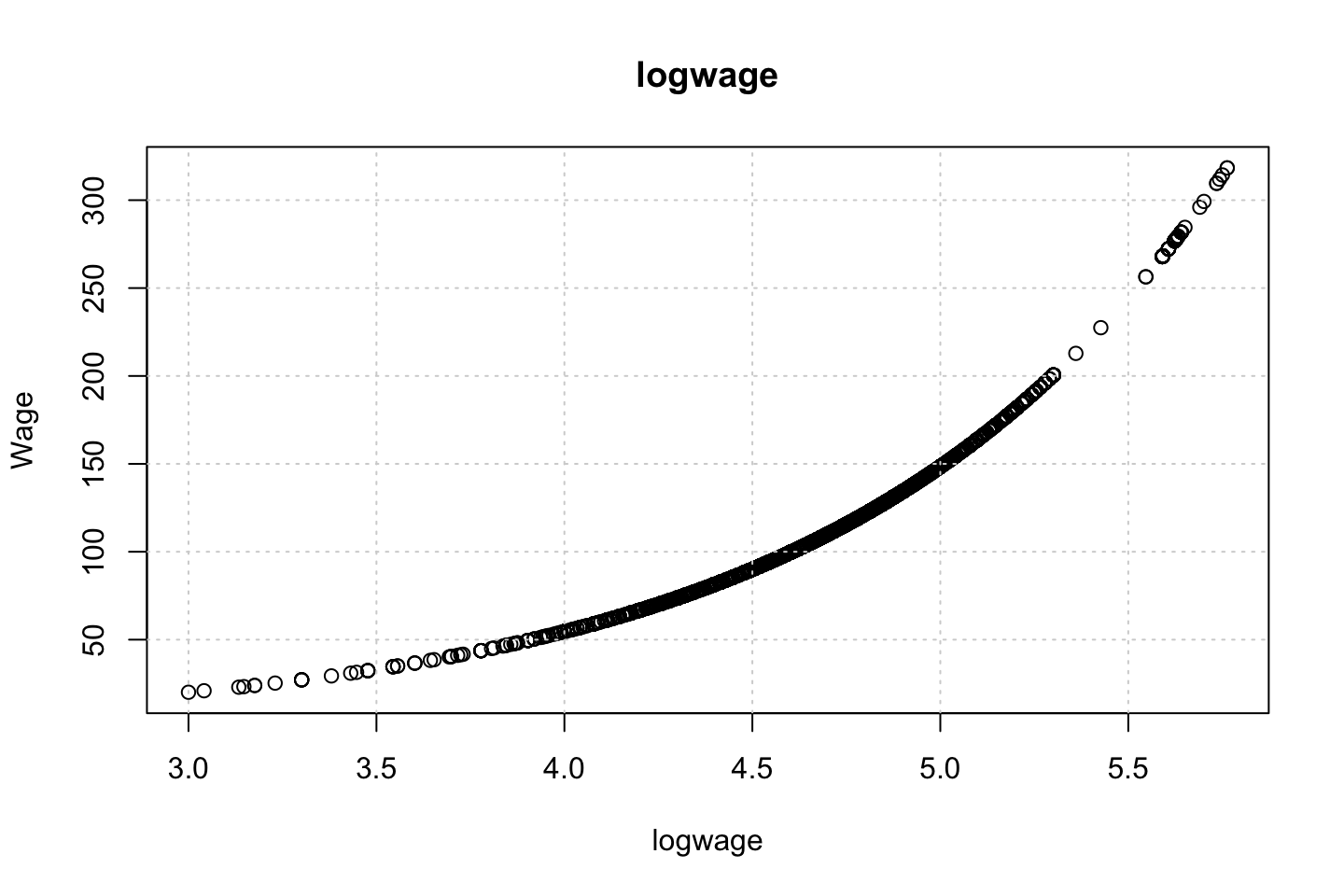

Looking at race, it appears as if there is some relationship between race and wage the same with maritial status. Region only has values in one category, jobclass appear to visually have different means. The same goes for health and health insurance. Naturally log of wage has a non linear relationship with wage. Although the variable is the same, thus it cant be used for much to predict wage levels.

Since all the variables of interest, and we haven’t worked with are all categorical, then we can’t really do any polynomial regression with the data, as they are all factors.

What one could do is a mutlivariate linear model with different factors, or step functions or perhaps GAM where a continous varaible with polynomials are included.

Therefore, I will not elaborate much more on this.

Ana made three different models, notice, that these are linear models, as the polynomial regression is not able to handle this.

fit1 = lm(wage ~ maritl, data = df)

deviance(fit1) # here deviance = RSS## [1] 4858941

fit2 = lm(wage ~ jobclass, data = df)

deviance(fit2)## [1] 4998547

fit3 = lm(wage ~ maritl + jobclass, data = df)

deviance(fit3)# Select model fit3 (smallest deviance)## [1] 4654752

summary(fit3)##

## Call:

## lm(formula = wage ~ maritl + jobclass, data = df)

##

## Residuals:

## Min 1Q Median 3Q Max

## -107.108 -22.689 -5.749 16.445 212.492

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 85.315 1.679 50.818 < 2e-16 ***

## maritl2. Married 25.356 1.776 14.279 < 2e-16 ***

## maritl3. Widowed 8.137 9.178 0.887 0.37541

## maritl4. Divorced 9.664 3.166 3.052 0.00229 **

## maritl5. Separated 7.189 5.539 1.298 0.19441

## jobclass2. Information 16.523 1.442 11.460 < 2e-16 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 39.43 on 2994 degrees of freedom

## Multiple R-squared: 0.1086, Adjusted R-squared: 0.1072

## F-statistic: 72.98 on 5 and 2994 DF, p-value: < 2.2e-16We can assess the groups with the contrasts function.

# To interpret first identify which is ref category

contrasts(Wage$maritl) # Never Married is the reference category## 2. Married 3. Widowed 4. Divorced 5. Separated

## 1. Never Married 0 0 0 0

## 2. Married 1 0 0 0

## 3. Widowed 0 1 0 0

## 4. Divorced 0 0 1 0

## 5. Separated 0 0 0 1contrasts(Wage$jobclass) # Industrial is the reference category## 2. Information

## 1. Industrial 0

## 2. Information 1Anova can also show the deviances etc. but notice, these does not appear to be neste d(JK note)?????

- The answer, fit 1 and fit 2 are nested into fit 3. Thus we dont compare fit 1 and fit 2, as these are not nested.

anova(fit1,fit2,fit3)| Res.Df | RSS | Df | Sum of Sq | F | Pr(>F) |

|---|---|---|---|---|---|

| 2995 | 4858941 | NA | NA | NA | NA |

| 2998 | 4998547 | -3 | -139606 | 29.93215 | 0 |

| 2994 | 4654752 | 4 | 343795 | 55.28341 | 0 |

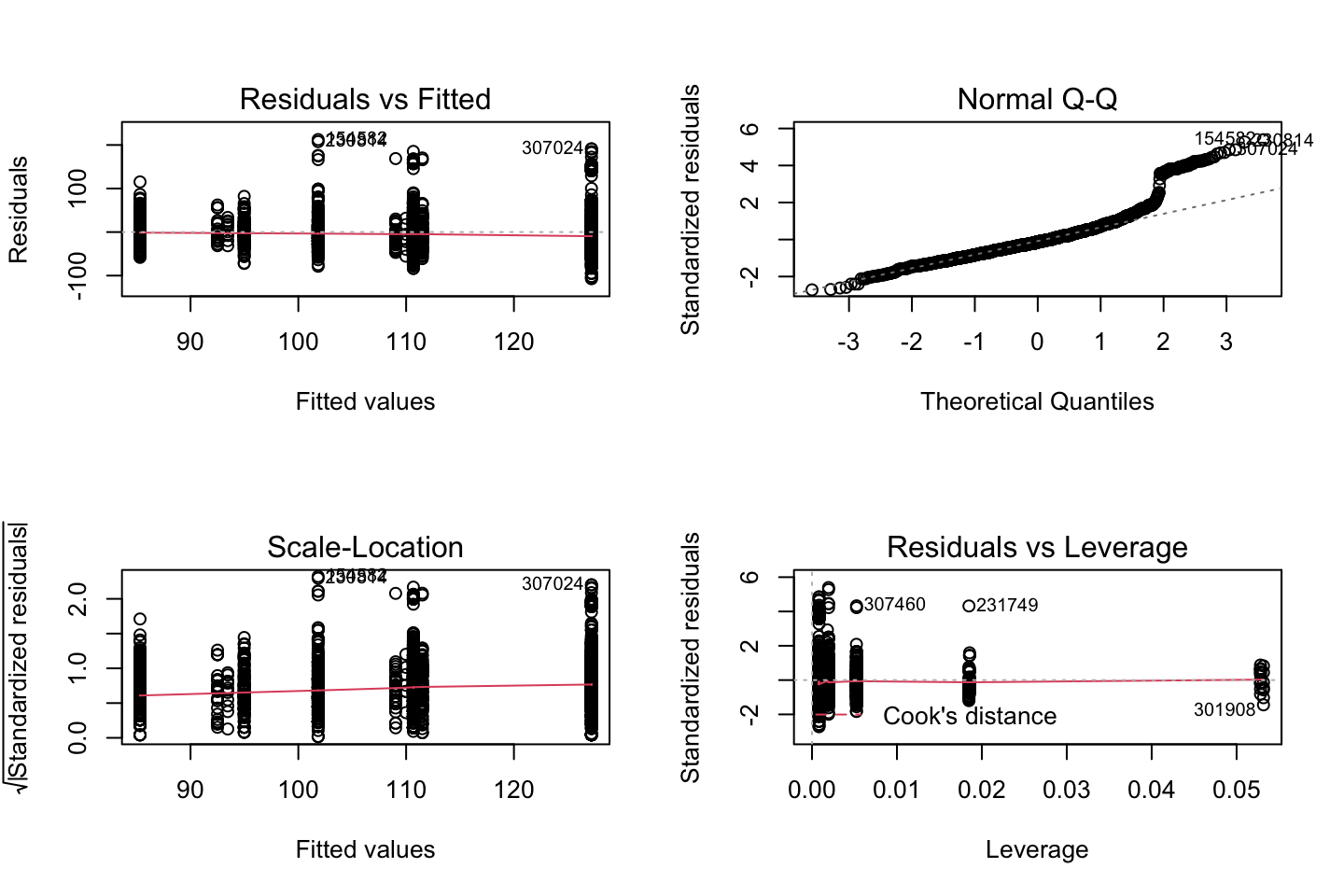

Now we can check the residuals

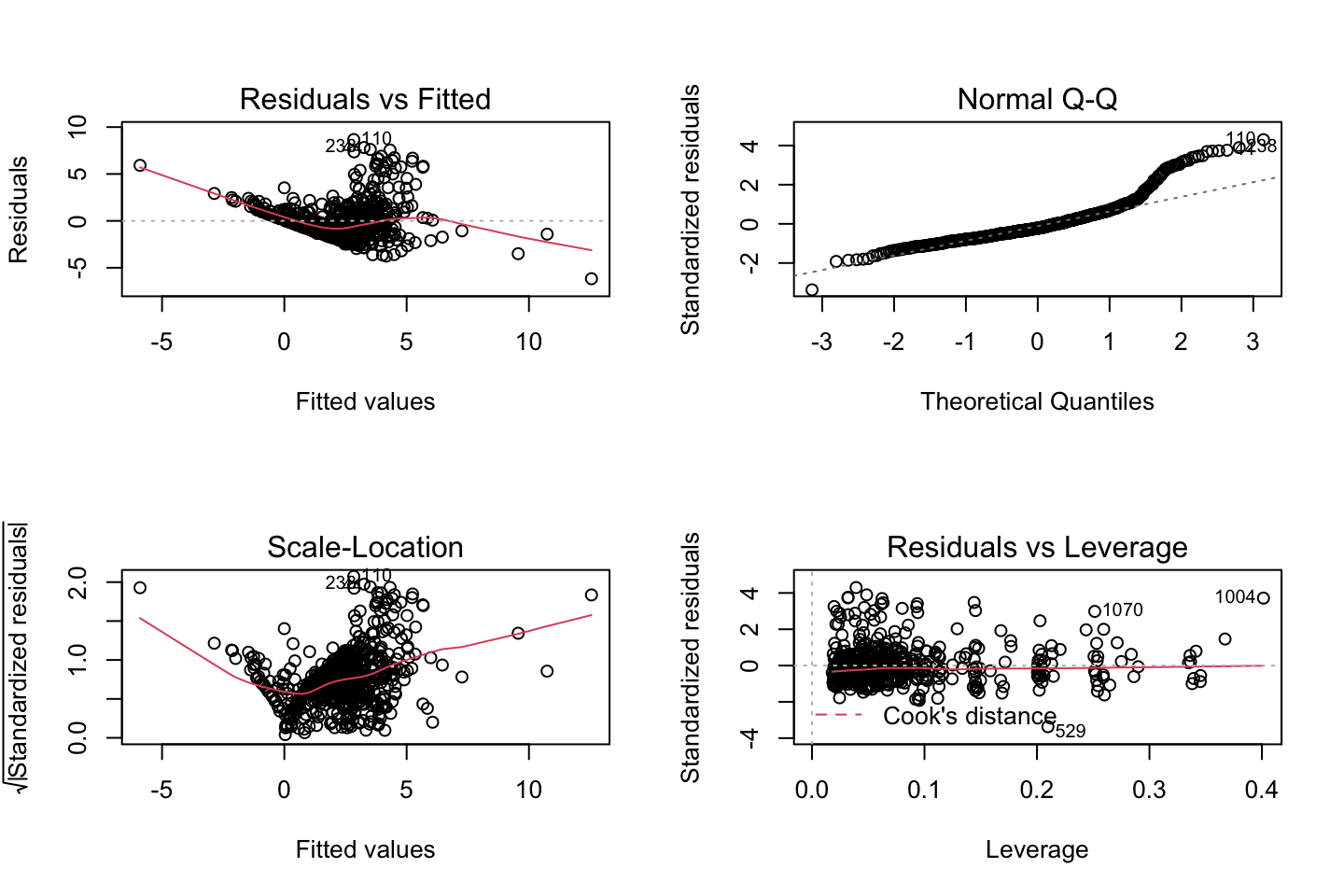

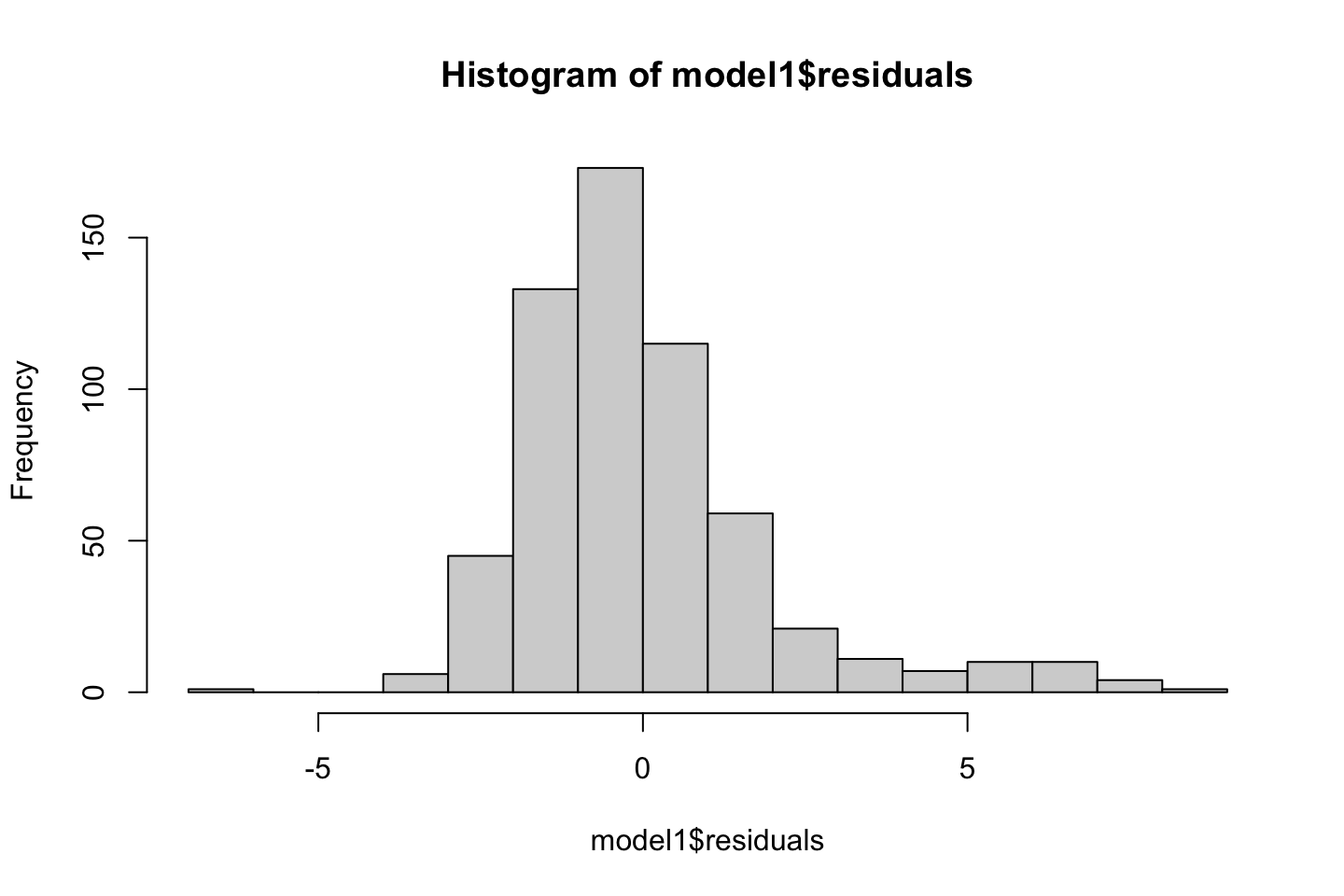

par(mfrow = c(2,2))

plot(fit3)

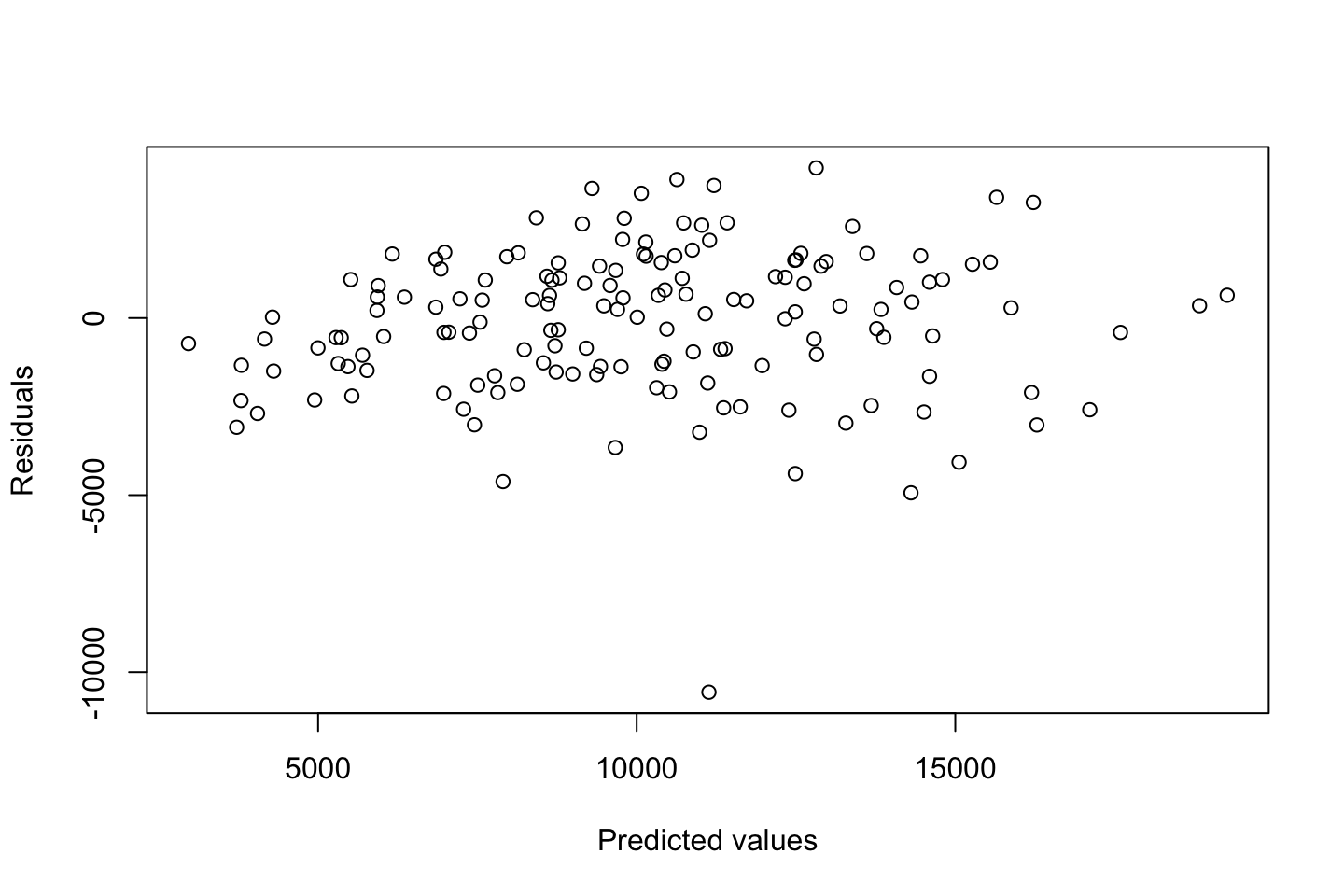

Looking at:

- The top left of the plot (residuals vs fitted), we would like these to be around 0.

- The top right, we want them to have a linear shape. This looks odd

Based on this, the model may be questionable. The solution:

- Exclude the extreme values

- Finding a variable that account for them.

2.4.3 Exercise 8

df <- AutoAre we able to predict how old a car is based on the variables at hand?

Hence year = DV

Name contains a lot of value, let us only use the first word, as that appear to be the brand. Therefore a loop is created to correct all the misspelled names.

brand <- strsplit(x = as.character(df$name),split = " ")

brand.name <- as.vector(rep(0,length(brand)))

for (i in c(1:length(brand))) {

brand.name[i] <- brand[[i]][1]

}

table(brand.name)## brand.name

## amc audi bmw buick cadillac

## 27 7 2 17 2

## capri chevroelt chevrolet chevy chrysler

## 1 1 43 3 6

## datsun dodge fiat ford hi

## 23 28 8 48 1

## honda maxda mazda mercedes mercedes-benz

## 13 2 10 1 2

## mercury nissan oldsmobile opel peugeot

## 11 1 10 4 8

## plymouth pontiac renault saab subaru

## 31 16 3 4 4

## toyota toyouta triumph vokswagen volkswagen

## 25 1 1 1 15

## volvo vw

## 6 6

misspelled <- matrix(byrow = TRUE,ncol = 2

,data = c("mercedes","mercedes-benz"

,"toyouta","toyota"

,"chevroelt","chevrolet"

,"maxda","mazda"

,"vokswagen","volkswagen"

,"vw","volkswagen"))

index <- as.vector("")

n <- 0

bn.list <- as.list(0)

brand.name.recent <- brand.name

for (i in c(misspelled[,1])) {

n <- n + 1

index <- rep(FALSE,length(brand.name))

index[brand.name == i] <- TRUE

bn.list[[n]] <- replace(x = brand.name.recent,list = index,values = misspelled[n,2])

brand.name.recent <- replace(x = brand.name.recent,list = index,values = misspelled[n,2])

}

df <- cbind(df[,-9],as.factor(bn.list[[6]]))

names(df)[names(df) == 'bn.list[[6]]'] <- "brand.name"Also we must convert origin to a factor.

df$origin <- as.factor(df$origin)Checking correlations.

The following can be run to see all the combinations

# par(mfrow = c(1,1))

# for (i in 1:dim(mm)[2]) {

# plot(y = df$year,x = mm[,i],xlab = names(mm)[i],ylab = "Year")

# grid()

# colnames(mm)[i] %>% title()

# }Before training the model, we can partition the data to test the model out of sample

set.seed(1337)

train.size <- round(x = nrow(df)*0.8,digits = 0) #Setting the training size

train.index <- sample(x = c(1:nrow(df)),size = train.size) #setting seed and creating vector for index

mm <- model.matrix(year ~ .,data = df)[,-1] #tried to make it mm first, to get rid of having variables that were in one partition but not the other.

year <- df$year

train.df <- as.data.frame(cbind(year,mm[train.index,])) #crating the training set

test.df <- as.data.frame(cbind(year,mm[-train.index,])) #creating the testing setlibrary(gam)

gam.m1 <- gam(year ~ s(train.df$mpg,df = 5) + s(train.df$cylinders,df = 5) + s(train.df$displacement,df = 5) + s(train.df$horsepower,df = 5) + s(train.df$weight,df = 5) + s(train.df$acceleration,df = 5) + .

,data = train.df)

summary(gam.m1)##

## Call: gam(formula = year ~ s(train.df$mpg, df = 5) + s(train.df$cylinders,

## df = 5) + s(train.df$displacement, df = 5) + s(train.df$horsepower,

## df = 5) + s(train.df$weight, df = 5) + s(train.df$acceleration,

## df = 5) + ., data = train.df)

## Deviance Residuals:

## Min 1Q Median 3Q Max

## -5.5818 -2.0705 -0.2068 2.0660 6.0843

##

## (Dispersion Parameter for gaussian family taken to be 8.889)

##

## Null Deviance: 2675.86 on 313 degrees of freedom

## Residual Deviance: 2266.693 on 255.0004 degrees of freedom

## AIC: 1631.771

##

## Number of Local Scoring Iterations: NA

##

## Anova for Parametric Effects

## Df Sum Sq Mean Sq F value

## s(train.df$mpg, df = 5) 1 10.71 10.707 1.2045

## s(train.df$cylinders, df = 5) 1 0.00 0.002 0.0003

## s(train.df$displacement, df = 5) 1 2.44 2.441 0.2747

## s(train.df$horsepower, df = 5) 1 0.13 0.134 0.0151

## s(train.df$weight, df = 5) 1 5.93 5.926 0.6667

## s(train.df$acceleration, df = 5) 1 1.52 1.517 0.1706

## origin2 1 16.05 16.050 1.8056

## origin3 1 11.10 11.097 1.2484

## `\\`as.factor(bn.list[[6]])\\`audi` 1 6.53 6.526 0.7342

## `\\`as.factor(bn.list[[6]])\\`bmw` 1 32.67 32.668 3.6751

## `\\`as.factor(bn.list[[6]])\\`buick` 1 8.31 8.307 0.9345

## `\\`as.factor(bn.list[[6]])\\`cadillac` 1 0.85 0.854 0.0960

## `\\`as.factor(bn.list[[6]])\\`capri` 1 0.00 0.003 0.0004

## `\\`as.factor(bn.list[[6]])\\`chevrolet` 1 0.23 0.230 0.0258

## `\\`as.factor(bn.list[[6]])\\`chevy` 1 0.00 0.003 0.0004

## `\\`as.factor(bn.list[[6]])\\`chrysler` 1 46.30 46.301 5.2088

## `\\`as.factor(bn.list[[6]])\\`datsun` 1 18.32 18.321 2.0611

## `\\`as.factor(bn.list[[6]])\\`dodge` 1 2.70 2.702 0.3040

## `\\`as.factor(bn.list[[6]])\\`fiat` 1 1.89 1.895 0.2132

## `\\`as.factor(bn.list[[6]])\\`ford` 1 25.85 25.847 2.9077

## `\\`as.factor(bn.list[[6]])\\`hi` 1 0.34 0.337 0.0379

## `\\`as.factor(bn.list[[6]])\\`honda` 1 3.40 3.401 0.3826

## `\\`as.factor(bn.list[[6]])\\`mazda` 1 4.99 4.986 0.5609

## `\\`as.factor(bn.list[[6]])\\`mercedes-benz` 1 0.01 0.014 0.0016

## `\\`as.factor(bn.list[[6]])\\`mercury` 1 0.31 0.313 0.0352

## `\\`as.factor(bn.list[[6]])\\`nissan` 1 3.68 3.684 0.4145

## `\\`as.factor(bn.list[[6]])\\`oldsmobile` 1 18.12 18.124 2.0389

## `\\`as.factor(bn.list[[6]])\\`opel` 1 0.30 0.303 0.0341

## `\\`as.factor(bn.list[[6]])\\`peugeot` 1 1.79 1.794 0.2018

## `\\`as.factor(bn.list[[6]])\\`plymouth` 1 0.53 0.527 0.0593

## `\\`as.factor(bn.list[[6]])\\`pontiac` 1 4.37 4.374 0.4921

## `\\`as.factor(bn.list[[6]])\\`renault` 1 16.53 16.533 1.8600

## `\\`as.factor(bn.list[[6]])\\`saab` 1 0.10 0.103 0.0115

## `\\`as.factor(bn.list[[6]])\\`subaru` 1 0.00 0.001 0.0001

## `\\`as.factor(bn.list[[6]])\\`volkswagen` 1 0.63 0.634 0.0713

## Residuals 255 2266.69 8.889

## Pr(>F)

## s(train.df$mpg, df = 5) 0.27346

## s(train.df$cylinders, df = 5) 0.98665

## s(train.df$displacement, df = 5) 0.60068

## s(train.df$horsepower, df = 5) 0.90226

## s(train.df$weight, df = 5) 0.41496

## s(train.df$acceleration, df = 5) 0.67989

## origin2 0.18023

## origin3 0.26491

## `\\`as.factor(bn.list[[6]])\\`audi` 0.39233

## `\\`as.factor(bn.list[[6]])\\`bmw` 0.05635 .

## `\\`as.factor(bn.list[[6]])\\`buick` 0.33460

## `\\`as.factor(bn.list[[6]])\\`cadillac` 0.75690

## `\\`as.factor(bn.list[[6]])\\`capri` 0.98494

## `\\`as.factor(bn.list[[6]])\\`chevrolet` 0.87244

## `\\`as.factor(bn.list[[6]])\\`chevy` 0.98454

## `\\`as.factor(bn.list[[6]])\\`chrysler` 0.02330 *

## `\\`as.factor(bn.list[[6]])\\`datsun` 0.15233

## `\\`as.factor(bn.list[[6]])\\`dodge` 0.58186

## `\\`as.factor(bn.list[[6]])\\`fiat` 0.64469

## `\\`as.factor(bn.list[[6]])\\`ford` 0.08937 .

## `\\`as.factor(bn.list[[6]])\\`hi` 0.84586

## `\\`as.factor(bn.list[[6]])\\`honda` 0.53679

## `\\`as.factor(bn.list[[6]])\\`mazda` 0.45459

## `\\`as.factor(bn.list[[6]])\\`mercedes-benz` 0.96840

## `\\`as.factor(bn.list[[6]])\\`mercury` 0.85133

## `\\`as.factor(bn.list[[6]])\\`nissan` 0.52028

## `\\`as.factor(bn.list[[6]])\\`oldsmobile` 0.15454

## `\\`as.factor(bn.list[[6]])\\`opel` 0.85372

## `\\`as.factor(bn.list[[6]])\\`peugeot` 0.65362

## `\\`as.factor(bn.list[[6]])\\`plymouth` 0.80775

## `\\`as.factor(bn.list[[6]])\\`pontiac` 0.48365

## `\\`as.factor(bn.list[[6]])\\`renault` 0.17383

## `\\`as.factor(bn.list[[6]])\\`saab` 0.91452

## `\\`as.factor(bn.list[[6]])\\`subaru` 0.99320

## `\\`as.factor(bn.list[[6]])\\`volkswagen` 0.78966

## Residuals

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Anova for Nonparametric Effects

## Npar Df Npar F Pr(F)

## (Intercept)

## s(train.df$mpg, df = 5) 4 0.97458 0.4219

## s(train.df$cylinders, df = 5) 3 0.63467 0.5933

## s(train.df$displacement, df = 5) 4 1.72358 0.1452

## s(train.df$horsepower, df = 5) 4 1.13935 0.3384

## s(train.df$weight, df = 5) 4 0.69772 0.5941

## s(train.df$acceleration, df = 5) 4 0.93342 0.4451

## mpg

## cylinders

## displacement

## horsepower

## weight

## acceleration

## origin2

## origin3

## `\\`as.factor(bn.list[[6]])\\`audi`

## `\\`as.factor(bn.list[[6]])\\`bmw`

## `\\`as.factor(bn.list[[6]])\\`buick`

## `\\`as.factor(bn.list[[6]])\\`cadillac`

## `\\`as.factor(bn.list[[6]])\\`capri`

## `\\`as.factor(bn.list[[6]])\\`chevrolet`

## `\\`as.factor(bn.list[[6]])\\`chevy`

## `\\`as.factor(bn.list[[6]])\\`chrysler`

## `\\`as.factor(bn.list[[6]])\\`datsun`

## `\\`as.factor(bn.list[[6]])\\`dodge`

## `\\`as.factor(bn.list[[6]])\\`fiat`

## `\\`as.factor(bn.list[[6]])\\`ford`

## `\\`as.factor(bn.list[[6]])\\`hi`

## `\\`as.factor(bn.list[[6]])\\`honda`

## `\\`as.factor(bn.list[[6]])\\`mazda`

## `\\`as.factor(bn.list[[6]])\\`mercedes-benz`

## `\\`as.factor(bn.list[[6]])\\`mercury`

## `\\`as.factor(bn.list[[6]])\\`nissan`

## `\\`as.factor(bn.list[[6]])\\`oldsmobile`

## `\\`as.factor(bn.list[[6]])\\`opel`

## `\\`as.factor(bn.list[[6]])\\`peugeot`

## `\\`as.factor(bn.list[[6]])\\`plymouth`

## `\\`as.factor(bn.list[[6]])\\`pontiac`

## `\\`as.factor(bn.list[[6]])\\`renault`

## `\\`as.factor(bn.list[[6]])\\`saab`

## `\\`as.factor(bn.list[[6]])\\`subaru`

## `\\`as.factor(bn.list[[6]])\\`toyota`

## `\\`as.factor(bn.list[[6]])\\`triumph`

## `\\`as.factor(bn.list[[6]])\\`volkswagen`

## `\\`as.factor(bn.list[[6]])\\`volvo`It appears as if non of the parameters are good predictors.

Then one could try out other models, or perhaps it is just very difficult with the data at hand to predict the year of the car.

2.4.4 Exercise 9

library(MASS)

df <- Boston

df <- as.data.frame(cbind(df$nox,df$dis))

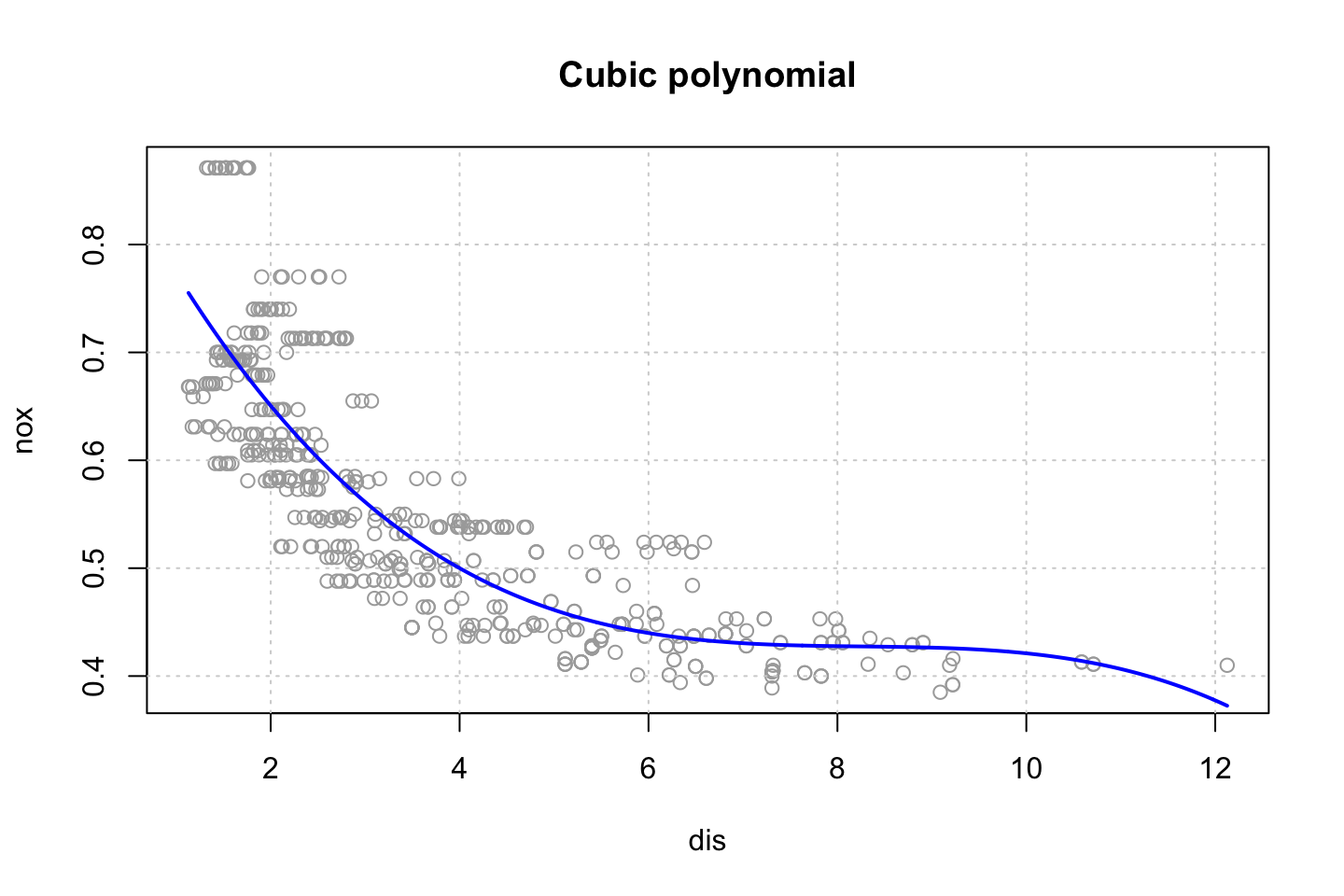

colnames(df) <- c("nox","dis")2.4.4.1 (a) using poly function to fit cubic polynomial regression

fit.poly <- lm(nox ~ poly(dis,3),data = df)

summary(fit.poly)##

## Call:

## lm(formula = nox ~ poly(dis, 3), data = df)

##

## Residuals:

## Min 1Q Median 3Q Max

## -0.121130 -0.040619 -0.009738 0.023385 0.194904

##

## Coefficients:

## Estimate Std. Error t value Pr(>|t|)

## (Intercept) 0.554695 0.002759 201.021 < 2e-16 ***

## poly(dis, 3)1 -2.003096 0.062071 -32.271 < 2e-16 ***

## poly(dis, 3)2 0.856330 0.062071 13.796 < 2e-16 ***

## poly(dis, 3)3 -0.318049 0.062071 -5.124 0.000000427 ***

## ---

## Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

##

## Residual standard error: 0.06207 on 502 degrees of freedom

## Multiple R-squared: 0.7148, Adjusted R-squared: 0.7131

## F-statistic: 419.3 on 3 and 502 DF, p-value: < 2.2e-16Remember that we are not interested in the coefficients as they are misleading, thus we want to look at the shape.

The table above is mostly presented for explanatory reasons.

As we are interested in the curve, we can fit that.

#Defining range

dislims <- range(df$dis)

n <- (dislims[2]-dislims[1])/nrow(df)

dis.grid <- seq(from = dislims[1],to = dislims[2],by = n)

#Predictions for the plot

preds <- predict(object = fit.poly,newdata = list(dis = dis.grid))

#Plotting

plot(nox ~ dis, data = df, col = "darkgrey")

grid()

lines(x = dis.grid,y = preds, col = "blue",lwd = 2)

title("Cubic polynomial")

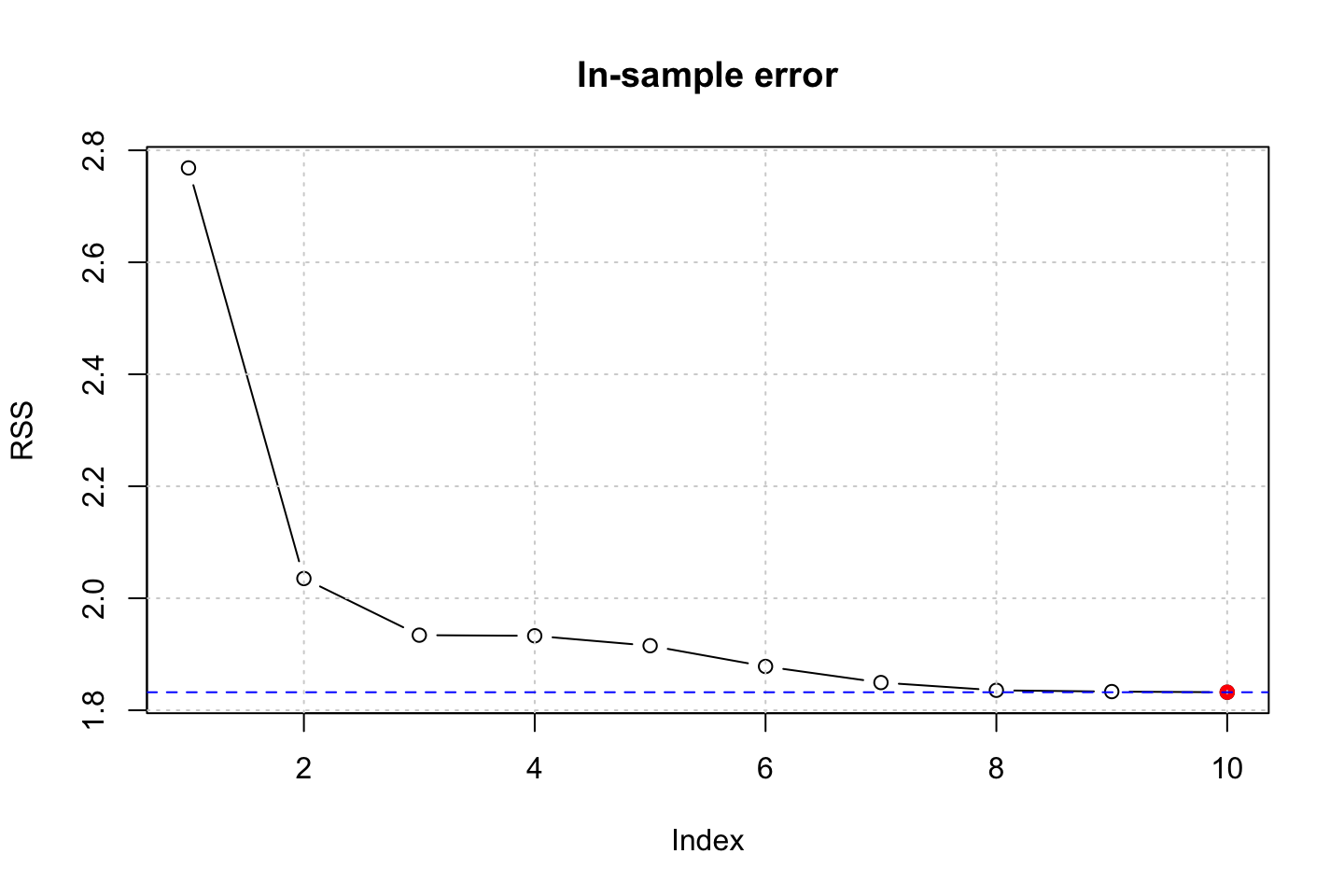

2.4.4.2 (b) Plotting polynomial fits for a range of polynomials

models <- list()

RSS <- 0

for (d in 1:10) {

models[[d]] <- lm(nox ~ poly(dis,d),data = df)

RSS[d] <- sum(residuals(models[[d]])^2)

}

plot(RSS,type = "b")

points(x = which.min(RSS),y = RSS[which.min(RSS)],col = "red",pch = 19)

grid()

abline(h = min(RSS),col = "blue",lty = 2)

title("In-sample error")

We see that the RSS decrease with complexity, that it as expected, as we fit to the in sample data. We could do this with a partition of the data to see out of performance instead.

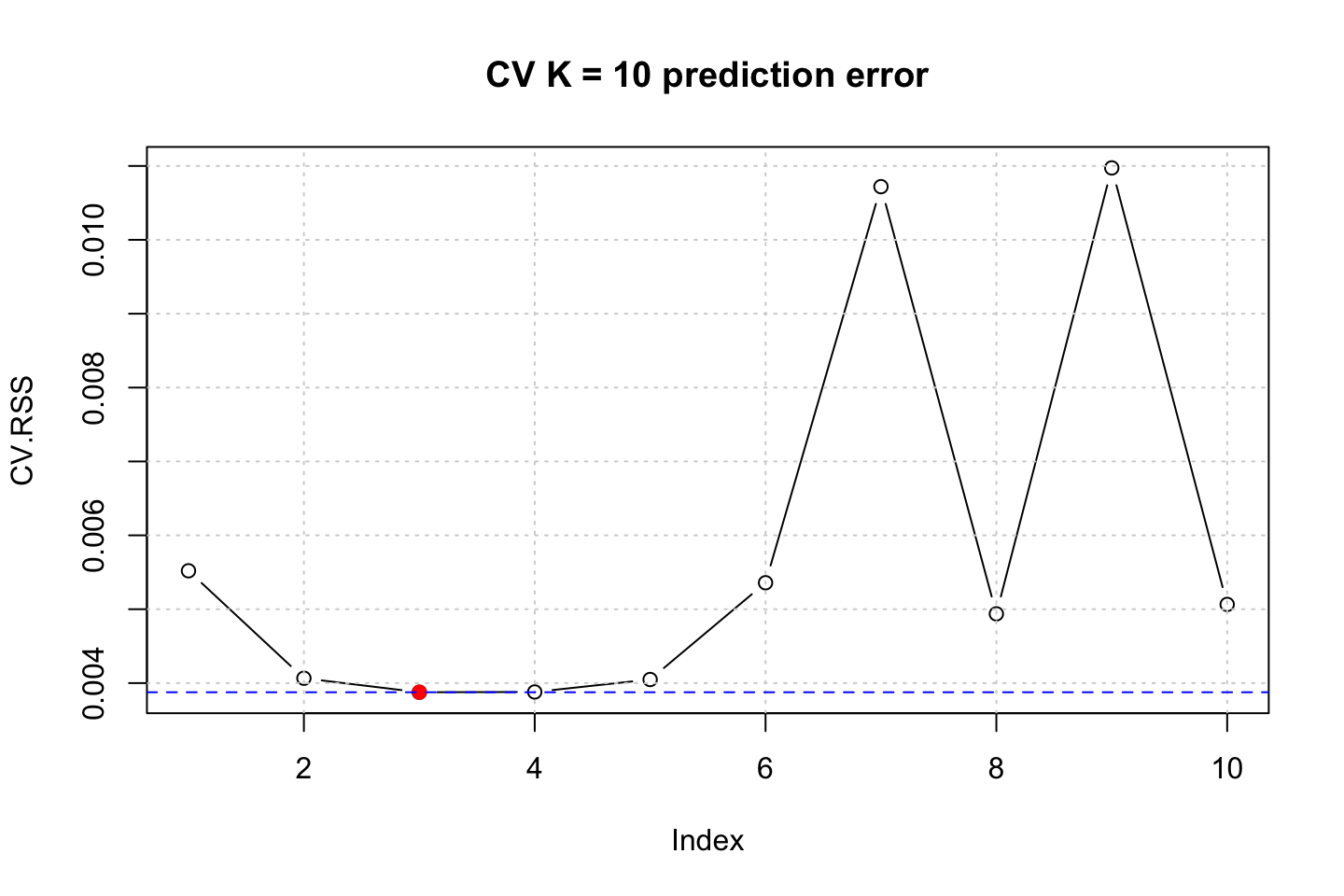

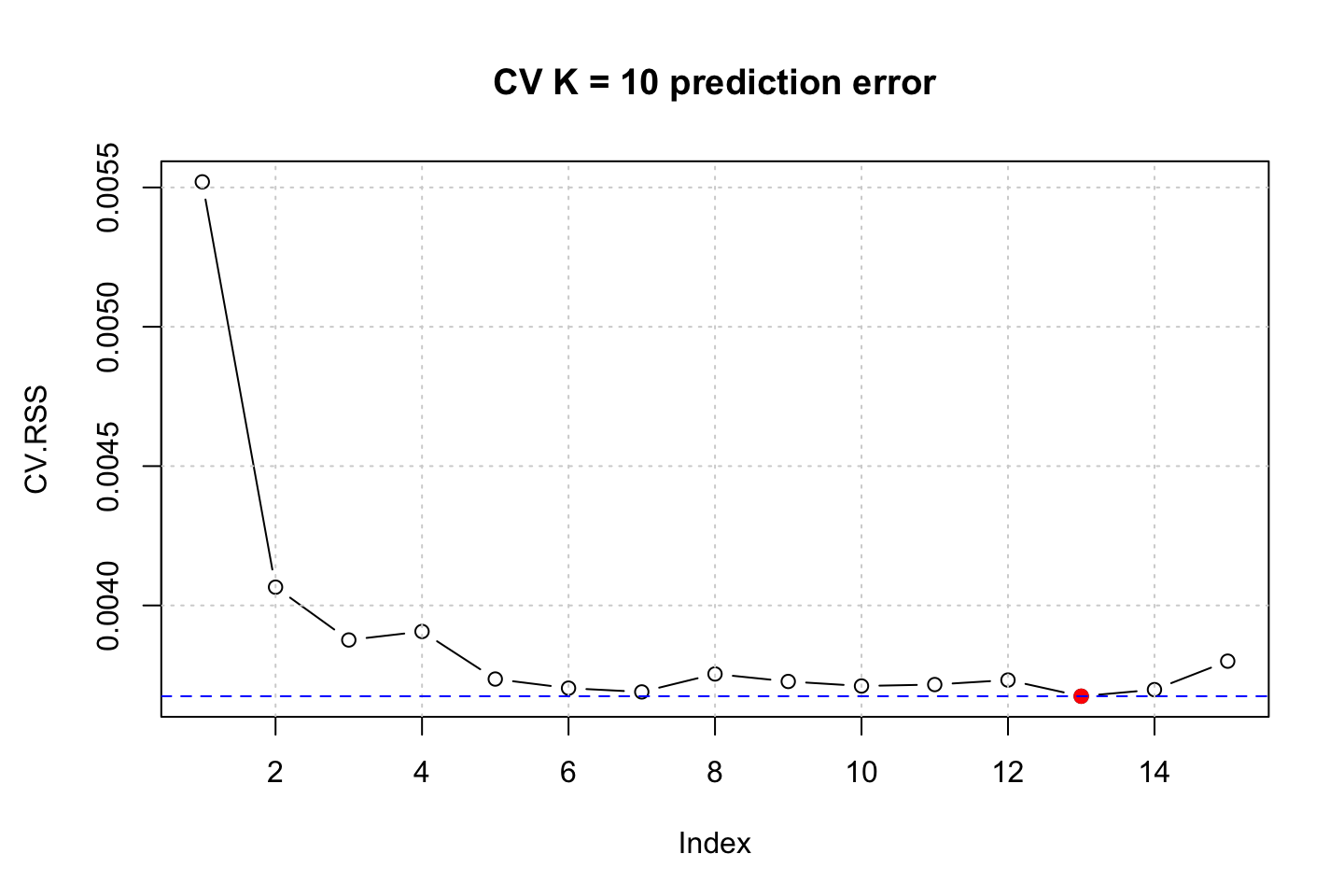

2.4.4.3 (c) Using CV to select best degree of d

Here we run a loop with cross validation to see how the different order of d performs. As the partitions are randomly selected, we preduce 10 simulations to see which orders that tend to occur most often.

models <- list()

RSS <- 0

CV.RSS <- 0

CV.RSS.sim <- 0

for (i in 1:20) {

for (d in 1:10) {

models[[d]] <- glm(nox ~ poly(dis,d),data = df)

RSS[d] <- sum(residuals(models[[d]])^2)

CV.RSS[d] <- cv.glm(data = df,glmfit = models[[d]],K = 10)$delta[2] #Delta = prediction error (adjusted)

}

CV.RSS.sim[i] <- which.min(CV.RSS)

}

#Plotting prediction error

plot(CV.RSS,type = "b")

points(x = which.min(CV.RSS),y = CV.RSS[which.min(CV.RSS)],col = "red",pch = 19)

grid()

abline(h = min(CV.RSS),col = "blue",lty = 2)

title("CV K = 10 prediction error")

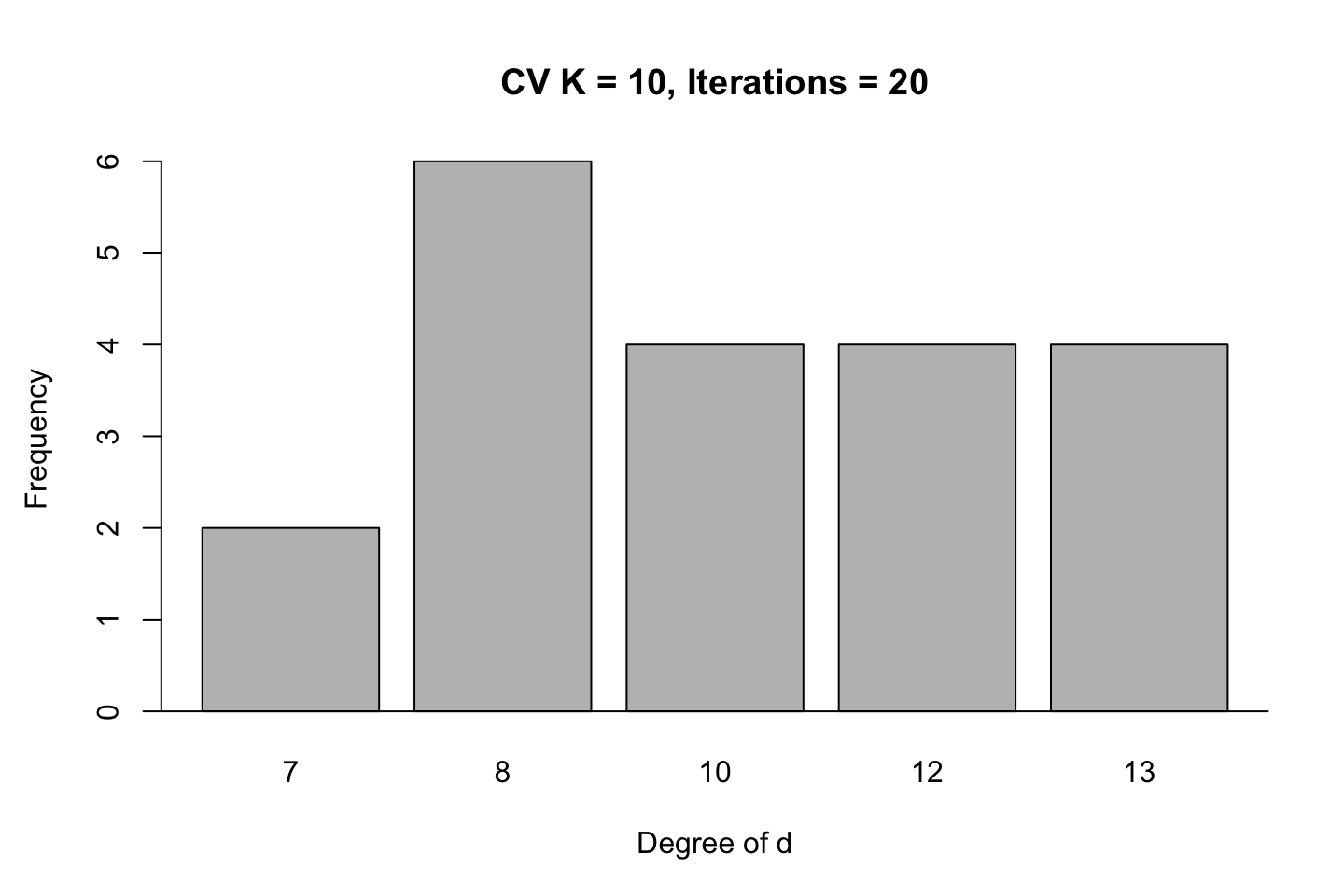

#Plotting simulations

barplot(table(CV.RSS.sim),xlab = "Degree of d",ylab = "Frequency")

abline(h = 0)

title("CV K = 10, Iterations = 20")

In these simulations we see that the best fit is likely to be with using .

It is actually quite interesting that a model with 10 degrees of d is as competitive as 4 in this example, although the cubic model is far superior than the other models.

2.4.4.4 (d) Use bs() to fit a regression spline

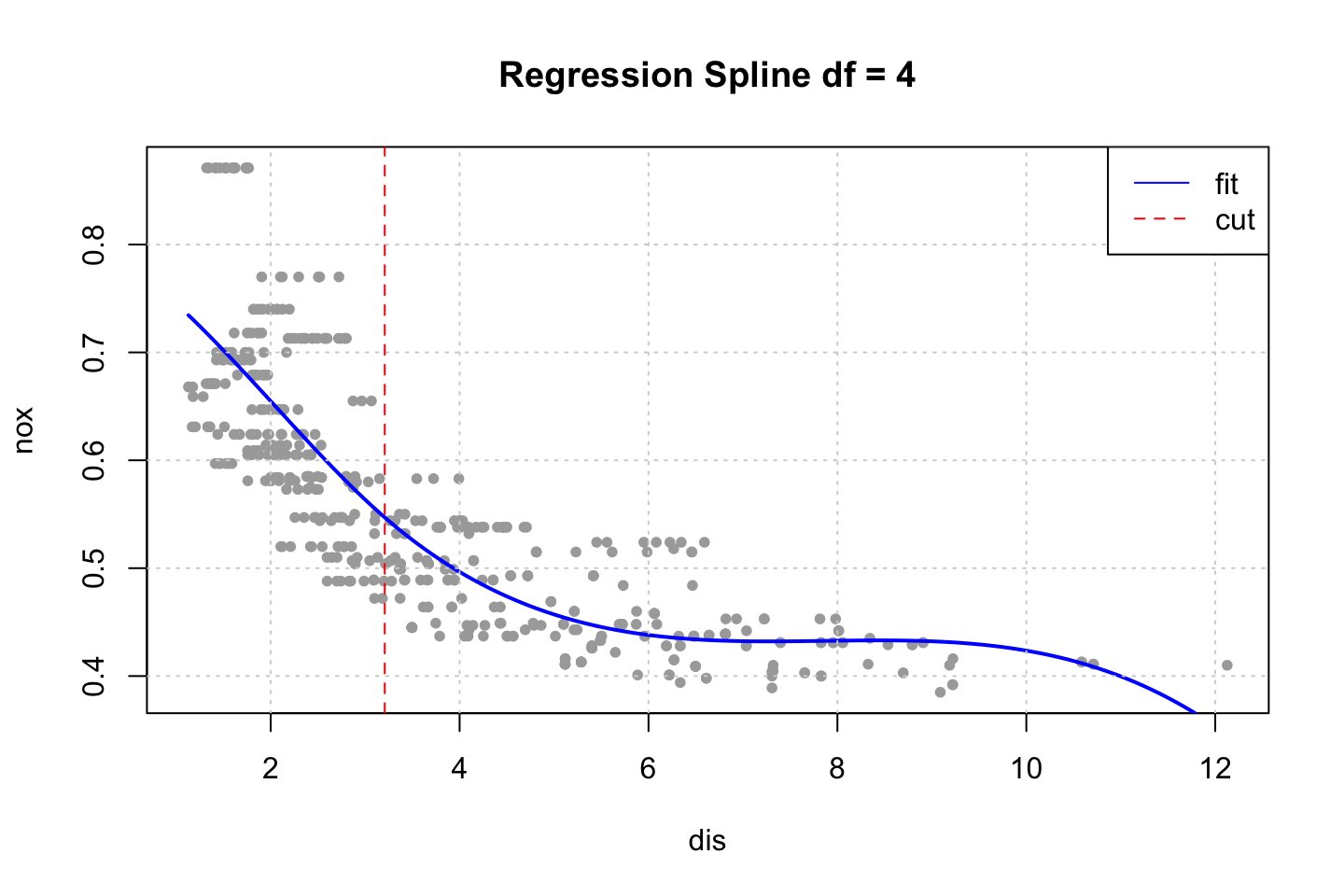

par(mfrow = c(1,1))

fit.bs <- lm(nox ~ bs(dis,df = 4),data = df) #Note as degree is not defined, default = 3

preds <- predict(object = fit.bs,newdata = list(dis = dis.grid))

plot(x = df$dis,y = df$nox,col = "darkgrey",pch = 20,ylab = "nox",xlab = "dis")

lines(x = dis.grid,y = preds,col = "blue",lwd = 2)

grid()

title("Regression Spline df = 4")

abline(v = 3.20745,col = "red",lty = 2) #This is the cut, found in next chunk

legend(x = "topright",legend = c("fit","cut"),lty = 1:2,col = c("blue","red"))

Notice that we merely specified the amount of df that we wanted. The function merely specified them automatically. We can interpret these, by using dim() and attr().

print(dim(bs(df$dis,df = 4)))## [1] 506 4attr(bs(df$dis,df = 4),"knots")## 50%

## 3.20745We see that a model with 4 degrees of freedom yields one cut. Where the model put this at 50%, hence the first half (up to 3.20745). For simplicity, this cut has been added to the plot above, to show where the spline is split.

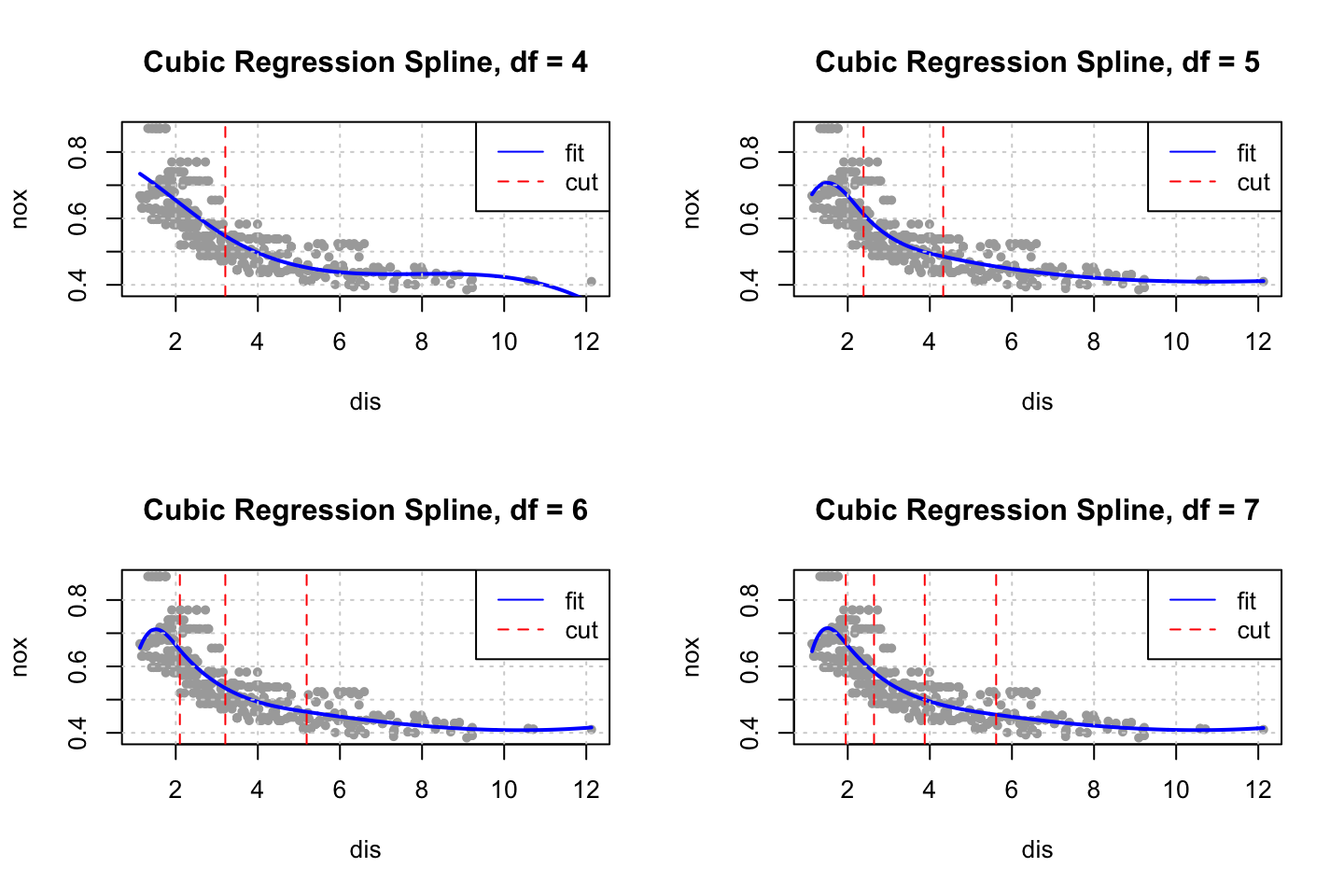

2.4.4.5 (e) Now fit a regression spline

par(mfrow = c(2,2))

for (d in 4:7) {

#The fit + preds

fit.bs <- lm(nox ~ bs(dis,df = d,degree = 3),data = df)

preds <- predict(object = fit.bs,newdata = list(dis = dis.grid))

#Cut

cut <- attr(bs(df$dis,df = d),"knots")

#Plot

plot(x = df$dis,y = df$nox,col = "darkgrey",pch = 20,ylab = "nox",xlab = "dis")

lines(x = dis.grid,y = preds,col = "blue",lwd = 2)

grid()

title(paste("Cubic Regression Spline, df =",d))

abline(v = cut,col = "red",lty = 2) #This is the cut, found in next chunk

legend(x = "topright",legend = c("fit","cut"),lty = 1:2,col = c("blue","red"))

}

We start at four degrees of freedom as a model with only three degrees of freedom, hence cubic regression (three orders of polynomials) = three degrees of freedom (this has to be fact checked).

As we add complexity with knots we also adds degrees of freedom, where we add one degree of freedom for each cut, hence for the cubic spline with 7 degrees of freedom, four cuts and three polynomials (this has to be fact checked).

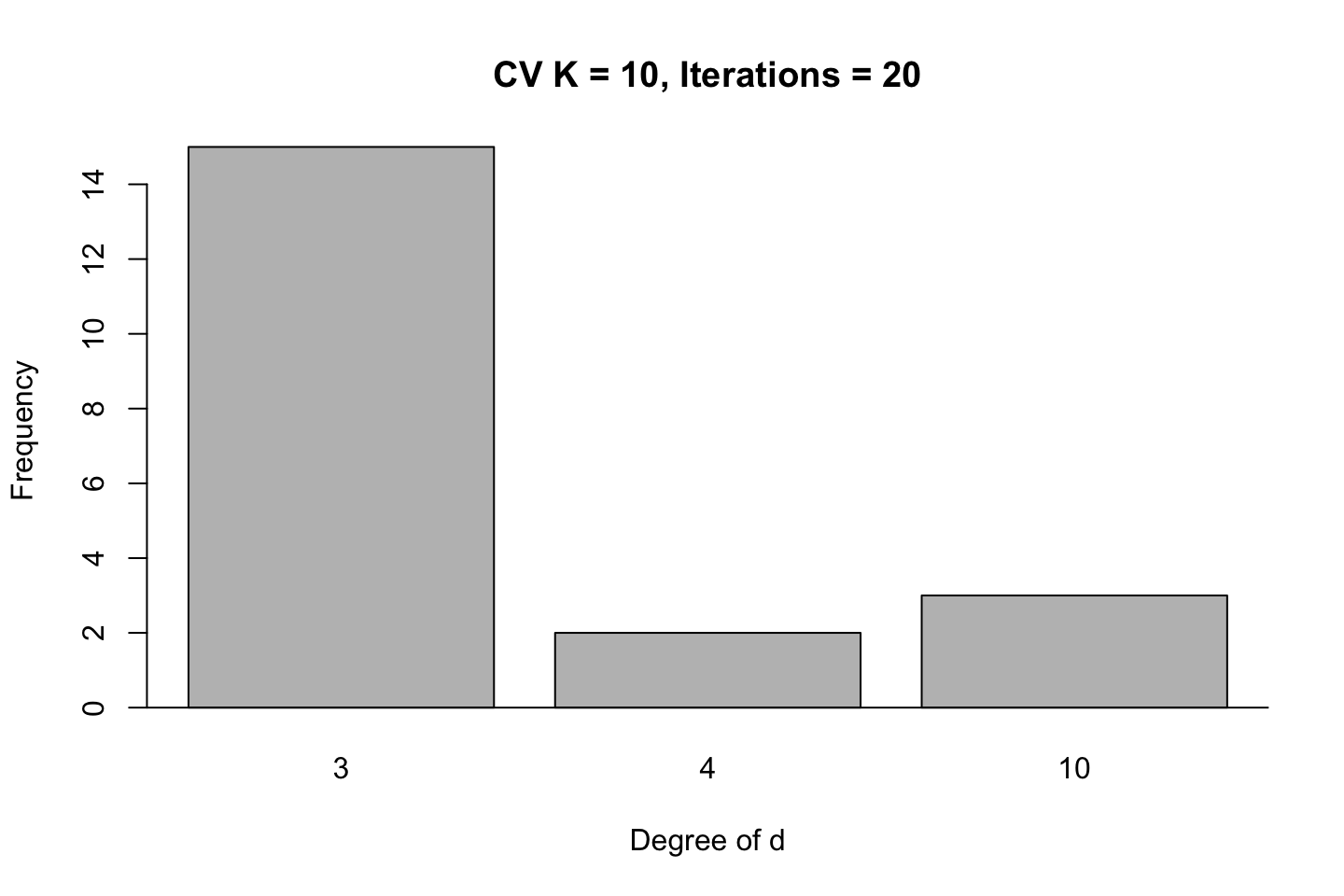

2.4.4.6 (f) Perform cross-validation, to select degrees

models <- list()

RSS <- 0

CV.RSS.sim <- 0

for (i in 1:20) {

for (d in 4:15) {

models[[d]] <- glm(nox ~ bs(dis,df = d,degree = 3),data = df)

RSS[d] <- sum(residuals(models[[d]])^2)

CV.RSS[d] <- cv.glm(data = df,glmfit = models[[d]],K = 10)$delta[2] #Delta = prediction error (adjusted)

}

CV.RSS.sim[i] <- which.min(CV.RSS)

}

par(mfrow = c(1,1),mar = c(5,4.5,4.5,2.1),oma = c(0,0,0,0))

#Plotting prediction error

plot(CV.RSS,type = "b")

points(x = which.min(CV.RSS),y = CV.RSS[which.min(CV.RSS)],col = "red",pch = 19)

grid()

abline(h = min(CV.RSS),col = "blue",lty = 2)

title("CV K = 10 prediction error")

#Plotting simulations

barplot(table(CV.RSS.sim),xlab = "Degree of d",ylab = "Frequency")

abline(h = 0)

title("CV K = 10, Iterations = 20")

First we see the last iteration and the prediction error hereof. Overall we see that it tend to be the rather complex models tend to be

2.4.5 Exercise 10

2.4.5.1 (a) Partitioning the data

#Loading

df <- College

#Partitioning

set.seed(1337)

train.size <- round(x = nrow(df)*0.8,digits = 0) #Setting the training size

train.index <- sample(x = c(1:nrow(df)),size = train.size) #setting seed and creating vector for index

train.df <- df[train.index,] #crating the training set

test.df <- df[-train.index,] #creating the testing set

rm(train.size)

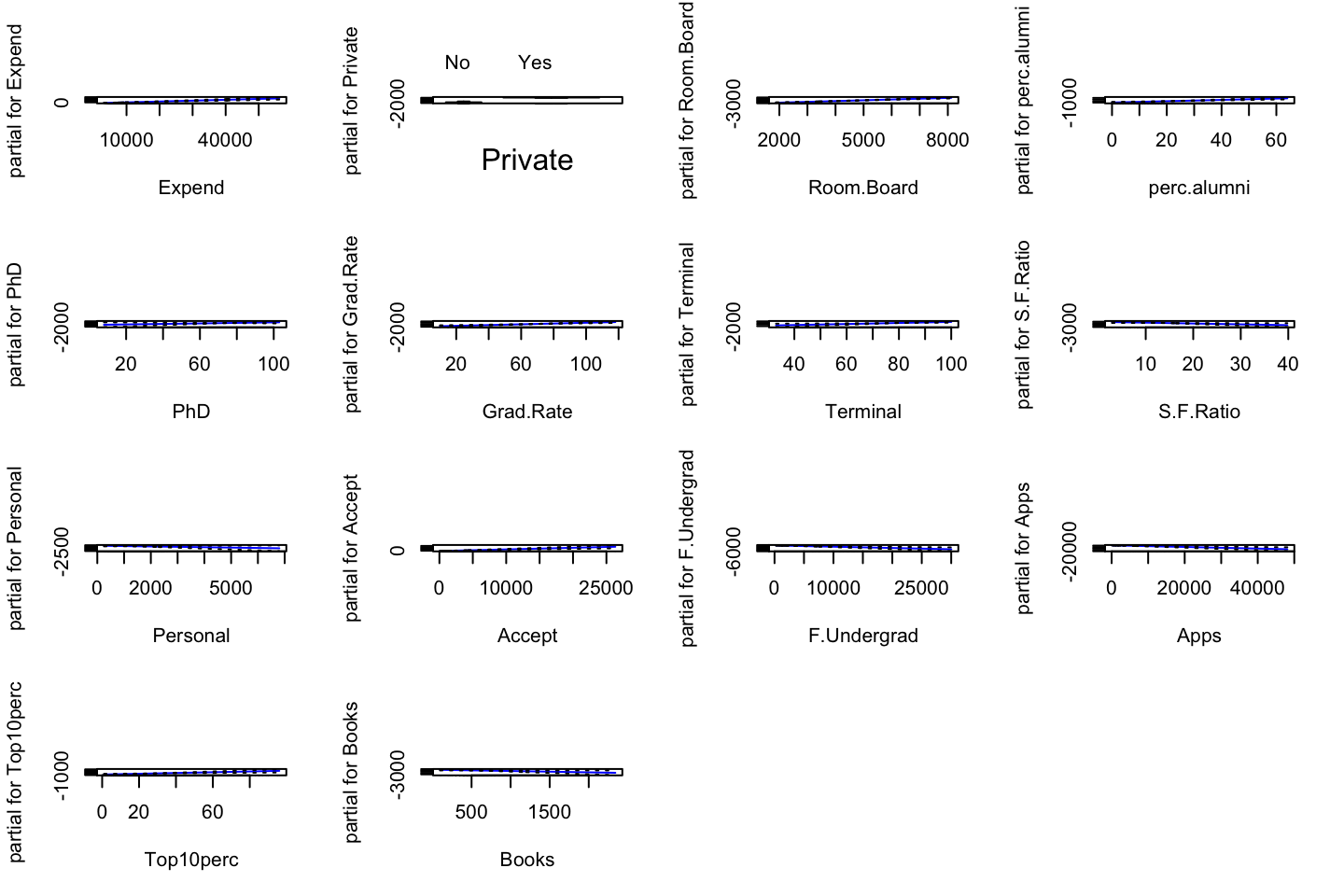

rm(train.index)Finding the best subset using forward selection

reg_null <- lm(Outstate ~ 1,data = train.df) #The null models

reg_full <- lm(Outstate ~ .,data = train.df) #The full model

step.for <- stepAIC(direction = "forward",object = reg_null,trace = TRUE,scope = list(upper = reg_full,lower = reg_null)) #This could also have been done with regsubsets()## Start: AIC=10345.68

## Outstate ~ 1

##

## Df Sum of Sq RSS AIC

## + Expend 1 4629213116 5745572675 9980.1

## + Room.Board 1 4590249421 5784536370 9984.3

## + Grad.Rate 1 3627082940 6747702852 10080.1

## + Top10perc 1 3457381465 6917404327 10095.6

## + perc.alumni 1 3450011987 6924773804 10096.2

## + S.F.Ratio 1 3359826734 7014959058 10104.3

## + Private 1 3075173999 7299611793 10129.0

## + Top25perc 1 2644625673 7730160118 10164.7

## + Terminal 1 2115825269 8258960523 10205.8

## + PhD 1 1886906305 8487879487 10222.8

## + Personal 1 735337851 9639447941 10301.9

## + P.Undergrad 1 571871111 9802914681 10312.4

## + F.Undergrad 1 404016737 9970769054 10323.0

## + Enroll 1 180722874 10194062918 10336.7

## + Apps 1 52841694 10321944097 10344.5

## <none> 10374785791 10345.7

## + Books 1 14584993 10360200799 10346.8

## + Accept 1 90338 10374695453 10347.7

##

## Step: AIC=9980.11

## Outstate ~ Expend

##

## Df Sum of Sq RSS AIC

## + Private 1 1549696145 4195876531 9786.6

## + Room.Board 1 1512070540 4233502135 9792.1

## + Grad.Rate 1 1328830834 4416741842 9818.5

## + perc.alumni 1 1089768014 4655804661 9851.3

## + Personal 1 501146318 5244426358 9925.3

## + S.F.Ratio 1 464809224 5280763451 9929.6

## + F.Undergrad 1 454756490 5290816185 9930.8

## + P.Undergrad 1 349207380 5396365295 9943.1

## + Top10perc 1 346779721 5398792955 9943.4

## + Top25perc 1 345553704 5400018971 9943.5

## + Enroll 1 331862326 5413710349 9945.1

## + Terminal 1 269786468 5475786207 9952.2

## + PhD 1 210830779 5534741896 9958.9

## + Apps 1 110876800 5634695875 9970.0

## + Accept 1 85558833 5660013842 9972.8

## + Books 1 18850616 5726722060 9980.1

## <none> 5745572675 9980.1

##

## Step: AIC=9786.59

## Outstate ~ Expend + Private

##

## Df Sum of Sq RSS AIC

## + Room.Board 1 876836109 3319040422 9642.8

## + Terminal 1 743790341 3452086190 9667.2

## + Grad.Rate 1 703122598 3492753933 9674.5

## + PhD 1 693220664 3502655866 9676.3

## + perc.alumni 1 408270963 3787605568 9724.9

## + Top25perc 1 401010315 3794866215 9726.1

## + Top10perc 1 319956878 3875919653 9739.3

## + Accept 1 152840390 4043036140 9765.5

## + Personal 1 128047157 4067829374 9769.3

## + Apps 1 118364448 4077512083 9770.8

## + Enroll 1 37847497 4158029034 9783.0

## + S.F.Ratio 1 28944091 4166932439 9784.3

## + F.Undergrad 1 16848576 4179027955 9786.1

## <none> 4195876531 9786.6

## + Books 1 5437161 4190439370 9787.8

## + P.Undergrad 1 3721562 4192154968 9788.0

##

## Step: AIC=9642.78

## Outstate ~ Expend + Private + Room.Board

##

## Df Sum of Sq RSS AIC

## + perc.alumni 1 419740777 2899299645 9560.7

## + Grad.Rate 1 412477957 2906562465 9562.2

## + PhD 1 379305385 2939735036 9569.3

## + Terminal 1 369656216 2949384206 9571.3

## + Top25perc 1 311299881 3007740541 9583.5

## + Top10perc 1 280108774 3038931648 9589.9

## + Personal 1 84780679 3234259743 9628.7

## + Accept 1 42471882 3276568540 9636.8

## + Books 1 35245677 3283794745 9638.1

## + P.Undergrad 1 29148614 3289891808 9639.3

## + S.F.Ratio 1 27660641 3291379781 9639.6

## + Apps 1 24109291 3294931131 9640.2

## + Enroll 1 12561816 3306478606 9642.4

## <none> 3319040422 9642.8

## + F.Undergrad 1 2021439 3317018983 9644.4

##

## Step: AIC=9560.68

## Outstate ~ Expend + Private + Room.Board + perc.alumni

##

## Df Sum of Sq RSS AIC

## + PhD 1 248319695 2650979950 9507.0

## + Terminal 1 241588269 2657711376 9508.6

## + Grad.Rate 1 194250957 2705048688 9519.5

## + Top25perc 1 146504265 2752795380 9530.4

## + Top10perc 1 124783671 2774515974 9535.3

## + Accept 1 68407796 2830891849 9547.8